Google Maps Website & Contact Extractor

Pricing

from $3.60 / 1,000 business-results

Extract Google Maps business listings and enrich them with lightweight website contact details such as emails, contact page URL, phone numbers, and social profile links.

Developer

Delowar Munna

Maintained by Community

Actor stats

- Bookmarked: 0
- Total users: 7
- Monthly active users: 5
- Last modified: 22 days ago

Extract Google Maps business listings by keyword + location and enrich each row with shallow website contact data — emails, contact page URL, website phone, and social profile links. Returns a clean, flat, CSV-friendly row per business — built for B2B lead generation, sales outreach, web design and SEO agencies, and local marketing.

V1 stays deliberately shallow on the website side (homepage + a small number of likely contact/about pages, capped by maxPagesPerWebsite) so runs are fast and cost-predictable. You pay one flat event per unique business row that passes your filters — same price whether or not the website extraction found extra contact data.

✨ Why this scraper

- Maps + website in one pass: 41 flat fields covering Maps card data plus emails, contact page, website phone, and social links.
- Shallow by design: homepage + up to `maxPagesPerWebsite - 1` extra contact/about pages per site. No deep crawling, no AI, no review scraping.
- Pay-Per-Event: one flat event per saved business row, whether or not contact data was found. Duplicates and filtered rows are not charged.
- No login, no cookies, no sessions: just keyword + location.
- CSV-friendly output: flat structure, no nested objects, drops cleanly into Sheets/Excel/CRMs.
- Transparent contact-quality score: rule-based (no AI), explained below.

🚀 Quick start — sample inputs

Example 1 — single query
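A minimal sketch of what a single-query input might look like, built as a Python dict and printed as JSON. The field names come from this README; the list-of-`{"key", "value"}` shape for `searchQueries` is an assumption based on how Apify's key/value editor usually serializes, so check the actor's input tab for the exact form.

```python
import json

# Hypothetical single-query input. Field names are taken from this README;
# the {"key", "value"} shape of searchQueries is an assumption.
single_query_input = {
    "searchQueries": [{"key": "plumbers", "value": "Canberra ACT"}],
    "websiteFilter": "hasWebsite",  # documented default
    "maxPagesPerWebsite": 3,        # documented default: homepage + 2 extra pages
    "deduplicateResults": True,     # recommended ON
}

print(json.dumps(single_query_input, indent=2))
```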

Example 2 — multi-query, email required, custom residential proxy via your own provider

The actor blocks Apify Residential proxy; if you need residential routing, supply your own provider via `proxyConfiguration.proxyUrls`. See 🚦 Proxy policy below.
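A rough sketch of a multi-query input with `emailRequired` and a custom proxy, again as a Python dict. The second keyword, the proxy URL, and its credentials are placeholders; `useApifyProxy` is the standard Apify proxy-input flag and is assumed here rather than confirmed by this README, as is the `{"key", "value"}` shape of `searchQueries`.

```python
import json

# Hypothetical multi-query input; the proxy URL below is a placeholder,
# not a real provider endpoint.
multi_query_input = {
    "searchQueries": [
        {"key": "plumbers", "value": "Canberra ACT"},
        {"key": "electricians", "value": "Canberra ACT"},  # illustrative second query
    ],
    "emailRequired": True,  # keep only rows with at least one website email
    "proxyConfiguration": {
        "useApifyProxy": False,  # assumed standard Apify proxy-input field
        "proxyUrls": ["http://USERNAME:PASSWORD@proxy.example.com:8000"],
    },
}

print(json.dumps(multi_query_input, indent=2))
```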

The `searchQueries` field uses Apify's Key/Value form editor: the Key column is the business keyword (e.g. `plumbers`), the Value column is the location (e.g. `Canberra ACT`). Add one row per search.

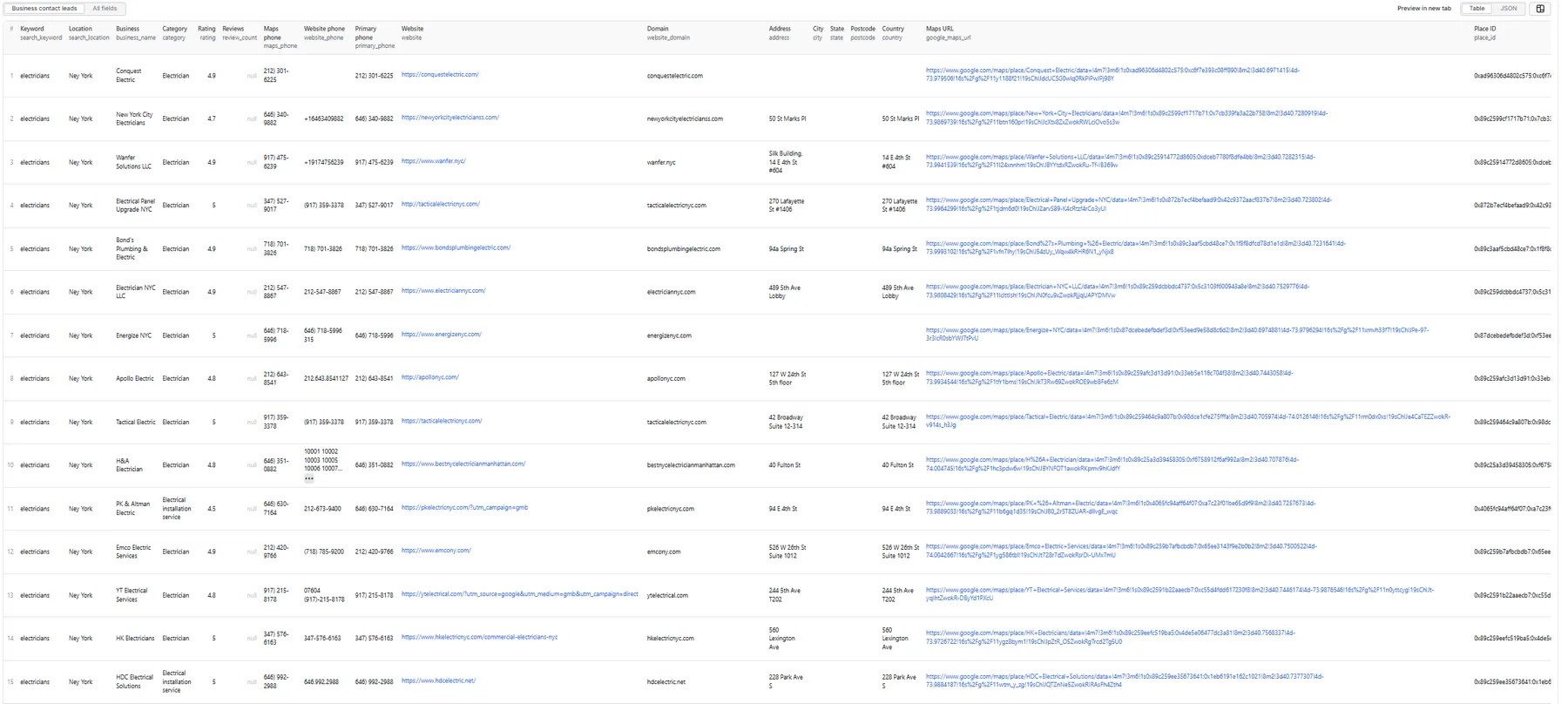

📦 Output

The dataset has one view: Business contact leads — a 41-column flat table.

Output fields (41)

search_keyword, search_location, business_name, category, rating, review_count, maps_phone, website_phone, primary_phone, website, website_domain, address, city, state, postcode, country, google_maps_url, place_id, latitude, longitude, opening_hours, has_website, has_maps_phone, has_website_phone, emails, primary_email, email_count, email_types, contact_page_url, facebook_url, instagram_url, linkedin_url, x_url, youtube_url, social_links_count, website_pages_scanned, website_status, contact_quality_score, contact_quality_label, contact_tags, scraped_at.

Sample record — Business contact leads

🎯 Contact-quality score

Transparent rule-based score (0–100) computed from extracted fields — no AI, no external enrichment.

| Signal | Points |
|---|---|
| Has website | +15 |
| Has Maps phone | +15 |
| Has website phone | +10 |
| Has at least one email | +30 |
| Has primary email | +10 |
| Has contact page URL | +10 |
| Has at least one social | +10 |
| Has address | +5 |
| Has category | +5 |

Score is capped at 100.

Labels: Excellent Contact Lead (80–100) · Good Contact Lead (60–79) · Basic Contact Lead (40–59) · Low Contact Data (0–39).

contact_tags includes contact_ready whenever primary_email or primary_phone is present — sort by this tag for a clean call/email list.
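The scoring rules above can be sketched in a few lines of Python, which is handy for re-scoring or sanity-checking exported rows. This is an illustration of the published table, not the actor's own code; how each signal maps onto an output field (for example `email_count > 0` for "has at least one email") is an assumption.

```python
def contact_quality_score(row: dict) -> int:
    """Sum the points from the table above and cap the total at 100."""
    checks = [
        (bool(row.get("has_website")), 15),
        (bool(row.get("has_maps_phone")), 15),
        (bool(row.get("has_website_phone")), 10),
        ((row.get("email_count") or 0) > 0, 30),
        (bool(row.get("primary_email")), 10),
        (bool(row.get("contact_page_url")), 10),
        ((row.get("social_links_count") or 0) > 0, 10),
        (bool(row.get("address")), 5),
        (bool(row.get("category")), 5),
    ]
    raw = sum(points for present, points in checks if present)
    return min(raw, 100)  # all nine signals add up to 110, hence the cap


def contact_quality_label(score: int) -> str:
    if score >= 80:
        return "Excellent Contact Lead"
    if score >= 60:
        return "Good Contact Lead"
    if score >= 40:
        return "Basic Contact Lead"
    return "Low Contact Data"


def is_contact_ready(row: dict) -> bool:
    """contact_ready applies when a primary email or primary phone exists."""
    return bool(row.get("primary_email") or row.get("primary_phone"))


# A Maps-only row: website, Maps phone, address and category, no emails.
maps_only = {"has_website": True, "has_maps_phone": True,
             "address": "1 Example St", "category": "Plumber",
             "primary_phone": "+61 2 0000 0000"}
score = contact_quality_score(maps_only)
print(score, contact_quality_label(score), is_contact_ready(maps_only))
# prints: 40 Basic Contact Lead True
```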

💰 Pricing

Pay-Per-Event. One flat event per saved row (final per-event price is configured on the Apify console):

| Event | Charged when |
|---|---|
| `business-result` | Once per unique business row that passed all filters and was successfully written to the dataset, whether or not the website extraction found additional contact data. |

So your bill is simply results_saved × price_per_event. Rows where website enrichment found emails / contact pages / socials cost the same as Maps-only rows; the price is averaged across both kinds.

The actor honors the user-configured per-run spending cap (Apify eventChargeLimitReached) and stops cleanly when reached.
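As a quick worked example of `results_saved × price_per_event`, the sketch below estimates a run's cost and the number of rows a budget covers. It assumes the listed "from $3.60 / 1,000 business-results" price; the actual per-event price is whatever is configured on the Apify console, so treat the figures as illustrative.

```python
from decimal import Decimal

LISTED_PRICE_PER_1000 = Decimal("3.60")  # "from" price shown on the listing; may differ for you


def estimate_cost(results_saved: int, price_per_1000: Decimal = LISTED_PRICE_PER_1000) -> Decimal:
    """Bill = results_saved x per-event price, rounded to cents."""
    return (Decimal(results_saved) * price_per_1000 / Decimal(1000)).quantize(Decimal("0.01"))


def max_rows_for_budget(budget: Decimal, price_per_1000: Decimal = LISTED_PRICE_PER_1000) -> int:
    """Largest number of saved rows that fits within a spending cap."""
    return int(budget * 1000 // price_per_1000)


print(estimate_cost(2500))                  # prints: 9.00
print(max_rows_for_budget(Decimal("5")))    # prints: 1388
```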

🚦 Proxy policy

Use Apify Datacenter proxy or no proxy for normal runs — both work reliably for Google Maps search and shallow website fetches at this actor's conservative concurrency.

Apify Residential proxy is not supported. The actor will fail at startup if `proxyConfiguration.apifyProxyGroups` includes `RESIDENTIAL`. Reason: in pay-per-event actors, residential bandwidth (billed per GB) is charged to the developer, not the run user, so a single bandwidth-heavy run could exceed the per-result event revenue.

If you genuinely need residential routing, supply your own residential provider via the proxy editor's Custom proxy URLs field. That traffic goes through your provider, not Apify, so it is unaffected by this restriction.
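To catch a rejected configuration before launching a run, a pre-flight check along these lines may help. It is only a sketch of the rule described above (fail when `apifyProxyGroups` includes `RESIDENTIAL`), not the actor's startup code; the function name and the placeholder proxy URL are hypothetical.

```python
from typing import Optional


def uses_apify_residential(proxy_configuration: Optional[dict]) -> bool:
    """True when the config selects the Apify RESIDENTIAL group."""
    groups = (proxy_configuration or {}).get("apifyProxyGroups") or []
    return "RESIDENTIAL" in groups


# Selecting the Apify Residential group: the actor would fail at startup.
rejected = {"useApifyProxy": True, "apifyProxyGroups": ["RESIDENTIAL"]}

# Own provider via custom proxy URLs (placeholder URL): not affected.
accepted = {"useApifyProxy": False,
            "proxyUrls": ["http://USERNAME:PASSWORD@proxy.example.com:8000"]}

print(uses_apify_residential(rejected))  # prints: True
print(uses_apify_residential(accepted))  # prints: False
```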

Not charged under the pay-per-event pricing above:

- Duplicates (de-duplicated by `place_id`, listing URL, website domain, or name+address/phone).
- Rows filtered out by `websiteFilter`, `phoneRequired`, or `emailRequired`.
- Rows missing a `business_name`.
- Failed dataset pushes.
- Failed website fetches when no row was saved.
- Anything after the per-run spending cap is reached.
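The de-duplication rule in the first bullet can be illustrated as follows. This is a guess at one reasonable reading (a row is a duplicate if any of its identifiers was already seen); the actor's actual matching order and normalization are not documented here, and the helper names are hypothetical.

```python
def dedupe_keys(row: dict) -> list:
    """Collect every identifier this row could be matched on."""
    keys = []
    if row.get("place_id"):
        keys.append(("place_id", row["place_id"]))
    if row.get("google_maps_url"):
        keys.append(("maps_url", row["google_maps_url"]))
    if row.get("website_domain"):
        keys.append(("domain", row["website_domain"].lower()))
    name = (row.get("business_name") or "").strip().lower()
    locator = (row.get("address") or row.get("primary_phone") or "").strip().lower()
    if name and locator:
        keys.append(("name+locator", name, locator))
    return keys


def deduplicate(rows: list) -> list:
    """Keep the first row for each identifier; later matches are dropped."""
    seen = set()
    unique = []
    for row in rows:
        keys = dedupe_keys(row)
        if any(key in seen for key in keys):
            continue  # duplicate: would not be saved or charged
        seen.update(keys)
        unique.append(row)
    return unique


rows = [
    {"business_name": "Acme Plumbing", "place_id": "abc", "website_domain": "acme.example"},
    {"business_name": "ACME Plumbing Pty", "place_id": "abc"},       # same place_id
    {"business_name": "Other Co", "website_domain": "other.example"},
]
print(len(deduplicate(rows)))  # prints: 2
```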

📊 Run summary

After each run, a `RUN_SUMMARY` entry is written to the key-value store. In that summary, `business_events_charged` always equals `results_saved`.

⚙️ Filters

| Filter | Stage | Effect |
|---|---|---|
| `websiteFilter` | Pre-extraction | `any` / `hasWebsite` / `missingWebsite`. Default `hasWebsite`. |
| `phoneRequired` | Post-extraction | If true, keep only rows where `maps_phone` or `website_phone` is present. |
| `emailRequired` | Post-extraction | If true, keep only rows where at least one website email was found. |
| `deduplicateResults` | Both stages | Drop duplicates across queries (recommended ON). |

Filters are applied before dataset push or event charges.
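The two filter stages in the table can be mirrored with a pair of predicates, for example to re-filter an exported dataset locally. This is a sketch of the documented behaviour only; the function names are hypothetical, and treating a non-empty `website` or `emails` field as "present" is an assumption.

```python
def passes_pre_extraction(row: dict, website_filter: str = "hasWebsite") -> bool:
    """websiteFilter runs before any website is fetched."""
    has_website = bool(row.get("website"))
    if website_filter == "hasWebsite":
        return has_website
    if website_filter == "missingWebsite":
        return not has_website
    return True  # "any"


def passes_post_extraction(row: dict, phone_required: bool = False,
                           email_required: bool = False) -> bool:
    """phoneRequired / emailRequired run after website extraction."""
    if phone_required and not (row.get("maps_phone") or row.get("website_phone")):
        return False
    if email_required and not row.get("emails"):
        return False
    return True


row = {"website": "https://acme.example", "maps_phone": "+61 2 0000 0000", "emails": ""}
print(passes_pre_extraction(row))                        # prints: True
print(passes_post_extraction(row, phone_required=True))  # prints: True
print(passes_post_extraction(row, email_required=True))  # prints: False
```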

🚧 Limitations (V1)

- Cards-only Maps extraction: V1 reads each business directly from the search results panel and does not click into individual place detail panels. Phone, website, full opening hours, and `place_id` only appear when Google surfaces them on the card itself; otherwise these fields are empty/null.
- Shallow website extraction: homepage + up to `maxPagesPerWebsite - 1` extra pages (default 3 total). No deep crawling, no JS-heavy rendering by default.
- No email verification, deliverability checks, or AI scoring.
- No full review text, sentiment, photos, menus, prices, or popular times.
- No login/cookie/session-based scraping.
- Address parsing into city/state/postcode is best-effort; the full `address` field is the source of truth.
- Per-query hard cap is 500 results; per-run hard cap is 5,000 results; per-website page cap is 5.

❓ FAQ

Why is email_count zero on some businesses?

Many local businesses don't publish an email publicly — they use a contact form instead. The actor extracts only emails visible in the page HTML (visible text + mailto: links). Set emailRequired: true if you want to keep only rows with at least one extracted email.
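To make "visible text + `mailto:` links" concrete, here is a small standard-library Python sketch of that kind of extraction. The actor itself uses Cheerio on Node.js, so this is an illustration of the idea rather than its implementation; the regex is a deliberately simple assumption and will not match every valid address.

```python
import re
from html.parser import HTMLParser
from urllib.parse import unquote

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


class EmailCollector(HTMLParser):
    """Collects emails from text nodes and mailto: hrefs, skipping script/style."""

    def __init__(self):
        super().__init__()
        self.emails = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        if tag == "a":
            href = dict(attrs).get("href") or ""
            if href.lower().startswith("mailto:"):
                # Drop the scheme and any ?subject=... query part.
                address = unquote(href[len("mailto:"):].split("?", 1)[0])
                self._add(EMAIL_RE.findall(address))

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self._add(EMAIL_RE.findall(data))

    def _add(self, found):
        for email in found:
            email = email.lower()
            if email not in self.emails:
                self.emails.append(email)


def extract_emails(html: str) -> list:
    collector = EmailCollector()
    collector.feed(html)
    collector.close()
    return collector.emails


sample = ('<p>Email us: info@example.com</p>'
          '<a href="mailto:Sales@Example.com?subject=Hi">Contact</a>'
          '<script>var x = "hidden@example.com";</script>')
print(extract_emails(sample))  # prints: ['info@example.com', 'sales@example.com']
```

A page that only offers a contact form yields an empty list here, which corresponds to `email_count` being zero.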

Why are city, state, postcode empty on some rows?

The Google Maps card sometimes surfaces only a single-line street address. The full address field is the source of truth; city/state/postcode populate only when Maps shows a comma-separated multi-segment address. For full address normalization, post-process with a geocoder of your choice.

Can I use Apify Residential proxy?

No — the actor rejects Apify Residential at startup. Apify Datacenter, no proxy, and user-supplied custom proxy URLs all work fine. If you genuinely need residential routing for a specific region or site, supply your own provider (IPRoyal, BrightData, Oxylabs, etc.) via the proxy editor's Custom proxy URLs field — that traffic bypasses Apify billing entirely. See the 🚦 Proxy policy section above.

Can I export to CSV?

Yes — every field is flat (no nested objects). Use Apify's CSV / Excel export from the dataset page, or call the dataset API with format=csv.
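For the API route, a minimal sketch using only the Python standard library is shown below. It assumes the public Apify dataset items endpoint (`/v2/datasets/{datasetId}/items`) with `format=csv`; the dataset ID and token are placeholders you would replace with your own, and the helper names are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import urlopen


def dataset_csv_url(dataset_id: str, token: str = "") -> str:
    """Build the dataset items URL with format=csv (token is optional)."""
    params = {"format": "csv"}
    if token:
        params["token"] = token
    return f"https://api.apify.com/v2/datasets/{dataset_id}/items?{urlencode(params)}"


def download_csv(dataset_id: str, path: str, token: str = "") -> None:
    """Fetch the CSV export and write it to a local file."""
    with urlopen(dataset_csv_url(dataset_id, token)) as response, open(path, "wb") as out:
        out.write(response.read())


print(dataset_csv_url("YOUR_DATASET_ID"))
# prints: https://api.apify.com/v2/datasets/YOUR_DATASET_ID/items?format=csv
```

Calling `download_csv("YOUR_DATASET_ID", "leads.csv", token="YOUR_TOKEN")` would then save the rows locally for import into Sheets, Excel, or a CRM.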

How am I billed for rows that don't have any extracted emails or socials?

The same as rows that do — one flat business-result event per saved row. The per-event price is averaged across both outcomes, so a run with high enrichment hit-rate and a run with low hit-rate cost the same per row. If you only need Maps directory data (no website enrichment at all), set includeWebsiteContactExtraction: false to skip the website fetches — billing is unchanged but the run is faster.

How do I get more results per query?

The per-query hard cap is 500 (per-run cap 5,000). For more, split your search into narrower geographies or sub-niches and run them as separate queries — the actor dedupes across queries within a run.
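One way to plan such a split is to generate one query per keyword and sub-area and check the total against the documented caps. The sketch below does that; the location names are illustrative, and the `{"key", "value"}` shape of `searchQueries` is an assumption about how the key/value editor serializes.

```python
PER_QUERY_CAP = 500   # documented per-query hard cap
PER_RUN_CAP = 5_000   # documented per-run hard cap


def build_search_queries(keywords: list, locations: list) -> list:
    """One query per (keyword, location) pair."""
    return [{"key": keyword, "value": location}
            for keyword in keywords for location in locations]


def max_possible_results(queries: list) -> int:
    """Upper bound on saved rows for one run, before de-duplication."""
    return min(len(queries) * PER_QUERY_CAP, PER_RUN_CAP)


queries = build_search_queries(
    ["plumbers"],
    ["Belconnen ACT", "Woden ACT", "Tuggeranong ACT"],  # illustrative sub-areas
)
print(len(queries), max_possible_results(queries))  # prints: 3 1500
```

With more than ten queries the per-run cap of 5,000 becomes the limit, so larger plans are better spread over several runs.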

Will I get blocked?

The actor uses conservative concurrency (Maps min=1, max=3; website pool=5), HTTP-only website fetching, realistic headers, and retry/backoff. The default Apify Proxy is sufficient for typical lead-gen volumes. If a specific site or region blocks you, do not select Apify Residential, which the actor rejects at startup; instead supply your own residential provider through the proxy editor's Custom proxy URLs field for that run.

What does website_status mean?

- `not_attempted`: website extraction was disabled or there was no website to fetch.
- `no_website`: Maps had no website link for that business.
- `success`: homepage fetched successfully (regardless of whether emails were found).
- `failed`: the homepage returned an HTTP error or the network call failed.
- `timeout`: the request was cut off by the per-page (15s) or per-business (45s) timeout.

🛠️ Technical notes

- Stack: Node.js 18+ · Apify SDK 3 · Crawlee · Puppeteer (Maps) · Cheerio + native fetch (websites).
- Concurrency: Maps `min=1`, `max=3` (conservative); website pool `concurrency=5`.
- Memory: 1 GB min · 2 GB default · 4 GB max.
- Proxy: Apify Proxy enabled by default; custom configs accepted.
- Diagnostics: On the first failed Maps render (no feed, or feed but zero cards), the actor saves the page HTML and URL to the key-value store as `debug-no-feed-html` / `debug-zero-cards-html`.