Y Combinator News Scraper

Pricing

from $8.00 / 1,000 results

Get the latest news from the Y Combinator Hacker News page. The output fields are: title, score, author, timing, discussion link, and body. Only saves rows when the article text comes through. Pick 20–200 stories (default 100). Export CSV or JSON.

Rating

0.0

(0)

Developer

Marco Rodrigues

Maintained by Community

Actor stats

- Bookmarked: 0

- Total users: 2

- Monthly active users: 1

- Last modified: 9 days ago

🚀 Y Combinator News Scraper

Want the latest submissions from Y Combinator Hacker News together with the actual article text from each linked source? This actor does both in one run.

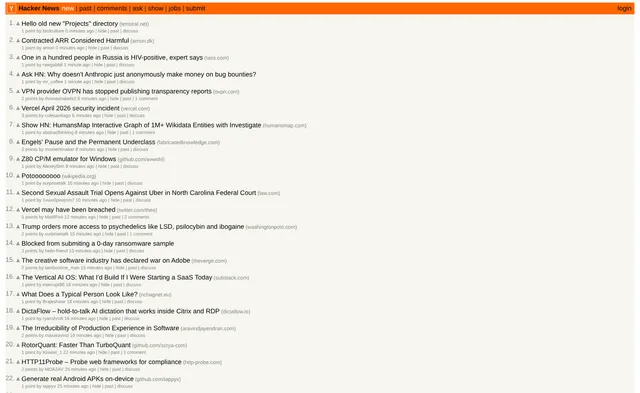

It always starts from Y Combinator’s HN “newest” feed at news.ycombinator.com/newest. There it reads titles, scores, authors, and each story’s outbound URL (GitHub, blogs, newspapers, another HN item page—whatever the submitter linked). Then it opens those destination sites in a browser and extracts main body text there. So the corpus is not “only HN HTML”: metadata comes from the YC-run listing; content depends on each submission’s source.

💡 Perfect for...

- Researchers & analysts: Track what’s being submitted and pull readable text from the original publisher or repo page.

- Newsletters & dashboards: Combine HN metadata (`points`, `author`, `hn_discuss_url`) with article excerpts for digests.

- 📚 RAG systems: Index `title`, `news_link`, and `content` so answers can cite both the HN context and what the linked page actually says.

✨ Why you'll love this scraper

- 🧹 Clean saves: Rows are pushed to the dataset only when non-empty body text was extracted—blocked or empty pages are skipped, not stored as hollow rows.

- 👤 Structured HN fields: Every saved item includes ids, title, outbound link, site label, score, author, timestamps, discussion URL, plus `content`.
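The "clean saves" rule above can be sketched as a small filter. This is an illustration only, not the actor's source: the helper names `has_body_text` and `rows_to_save` are hypothetical, and treating whitespace-only text as empty is an assumption.

```python
from typing import Iterable, Iterator


def has_body_text(row: dict) -> bool:
    """Return True when the row carries non-empty extracted text."""
    content = row.get("content")
    # Assumption: whitespace-only text counts as empty.
    return isinstance(content, str) and bool(content.strip())


def rows_to_save(candidates: Iterable[dict]) -> Iterator[dict]:
    """Yield only the rows that have body text; skip the rest."""
    for row in candidates:
        if has_body_text(row):
            yield row


# Placeholder rows for illustration, not real scraper output.
candidates = [
    {"title": "Readable post", "content": "Full article text."},
    {"title": "Blocked page", "content": ""},
    {"title": "Failed extraction", "content": None},
]
saved = list(rows_to_save(candidates))
print([row["title"] for row in saved])  # ['Readable post']
```

Filtering before the push keeps downstream consumers from having to check for hollow rows themselves.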

📦 What's inside the data?

For every story that yields extractable text, you will get:

- HN listing: `id`, `title`, `news_link`, `site_domain`, `points`, `author`, `posted_at_iso`, `posted_at_human`, `hn_discuss_url`

- From the linked source: `content` (plain article text from the destination page when extraction succeeds)
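For typed downstream code, the fields above could be modeled roughly as follows. The field names come from the list; the class name `StoryRow`, the types, and the sample values are assumptions for illustration, since the listing does not state them.

```python
from dataclasses import dataclass, fields
from typing import Any, Optional


@dataclass
class StoryRow:
    # Field names follow the documented output; the types are assumptions.
    id: Optional[str]
    title: Optional[str]
    news_link: Optional[str]
    site_domain: Optional[str]
    points: Optional[int]
    author: Optional[str]
    posted_at_iso: Optional[str]
    posted_at_human: Optional[str]
    hn_discuss_url: Optional[str]
    content: Optional[str]

    @classmethod
    def from_dict(cls, raw: dict[str, Any]) -> "StoryRow":
        """Build a row from a dataset item; unknown keys are ignored,
        missing keys become None."""
        return cls(**{f.name: raw.get(f.name) for f in fields(cls)})


# Placeholder values only, not real scraper output.
example = {
    "id": "1",
    "title": "Example title",
    "news_link": "https://example.com/post",
    "site_domain": "example.com",
    "points": 1,
    "author": "someuser",
    "posted_at_iso": "2024-01-01T00:00:00",
    "posted_at_human": "1 minute ago",
    "hn_discuss_url": "https://news.ycombinator.com/item?id=1",
    "content": "Plain article text.",
    "extra": "ignored",
}
row = StoryRow.from_dict(example)
print(row.site_domain, row.points)
```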

🚀 Quick start

- Decide how many stories you want (`max_news`). The actor collects that many unique items from /newest (using More if needed).

- Start the actor on Apify. There is no listing URL to paste; the feed URL is fixed.

- Export the default dataset as CSV, Excel, or JSON when the run finishes.
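Once a run has been exported as JSON, the rows can be consumed with the standard library alone. A minimal sketch, assuming the export is a single JSON array of row objects and that `points` may be missing or non-numeric for some rows; the helper names `load_rows` and `top_by_points` are hypothetical.

```python
import json
from pathlib import Path


def load_rows(path: str) -> list[dict]:
    """Load a JSON export, assumed to be a single array of row objects."""
    data = json.loads(Path(path).read_text(encoding="utf-8"))
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of rows")
    return data


def top_by_points(rows: list[dict], n: int = 5) -> list[dict]:
    """Return the n highest-scoring rows; unusable points count as 0."""

    def score(row: dict) -> int:
        try:
            return int(row.get("points") or 0)
        except (TypeError, ValueError):
            return 0

    return sorted(rows, key=score, reverse=True)[:n]
```

For example, `top_by_points(load_rows("dataset.json"), 3)` would return the three highest-scoring stories, where `dataset.json` is a hypothetical name for the exported file.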

Tech details for developers 🧑‍💻

Input Example:

Output Example:

Parameters:

| Parameter | Type | Required | Description |
|---|---|---|---|
| `max_news` | integer | No | Target number of unique stories from /newest (via More), then opened for content. Default 100, min 20, max 200 (see `.actor/input_schema.json`). |
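The documented bounds can be checked client-side before starting a run. This is a sketch under stated assumptions: the function `resolve_max_news` is hypothetical, and rejecting out-of-range values (rather than clamping them) is a choice made here, not something the listing specifies.

```python
from typing import Optional

# Values taken from the parameter table above.
DEFAULT_MAX_NEWS = 100
MIN_MAX_NEWS = 20
MAX_MAX_NEWS = 200


def resolve_max_news(actor_input: Optional[dict]) -> int:
    """Return max_news from the input, or the default when it is absent."""
    value = (actor_input or {}).get("max_news", DEFAULT_MAX_NEWS)
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(value, bool) or not isinstance(value, int):
        raise ValueError("max_news must be an integer")
    if not MIN_MAX_NEWS <= value <= MAX_MAX_NEWS:
        raise ValueError(
            f"max_news must be between {MIN_MAX_NEWS} and {MAX_MAX_NEWS}"
        )
    return value


print(resolve_max_news({}))                # 100
print(resolve_max_news({"max_news": 50}))  # 50
```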

Stack: Python, Apify SDK, Crawlee PlaywrightCrawler, Playwright, trafilatura. For article requests, HTTP 401, 403, and 429 responses are not treated as session-killers, so the handler can still attempt extraction; empty text still means no dataset row.

Local run: From this actor directory, install dependencies and run `playwright install`. Then use `apify run` so that input.json is applied, or supply the input in whatever way your environment expects when running `python -m src`.