All-in-One X/Twitter Scraper

Pricing

from $0.20 / 1,000 tweet results

All-in-One X/Twitter Scraper

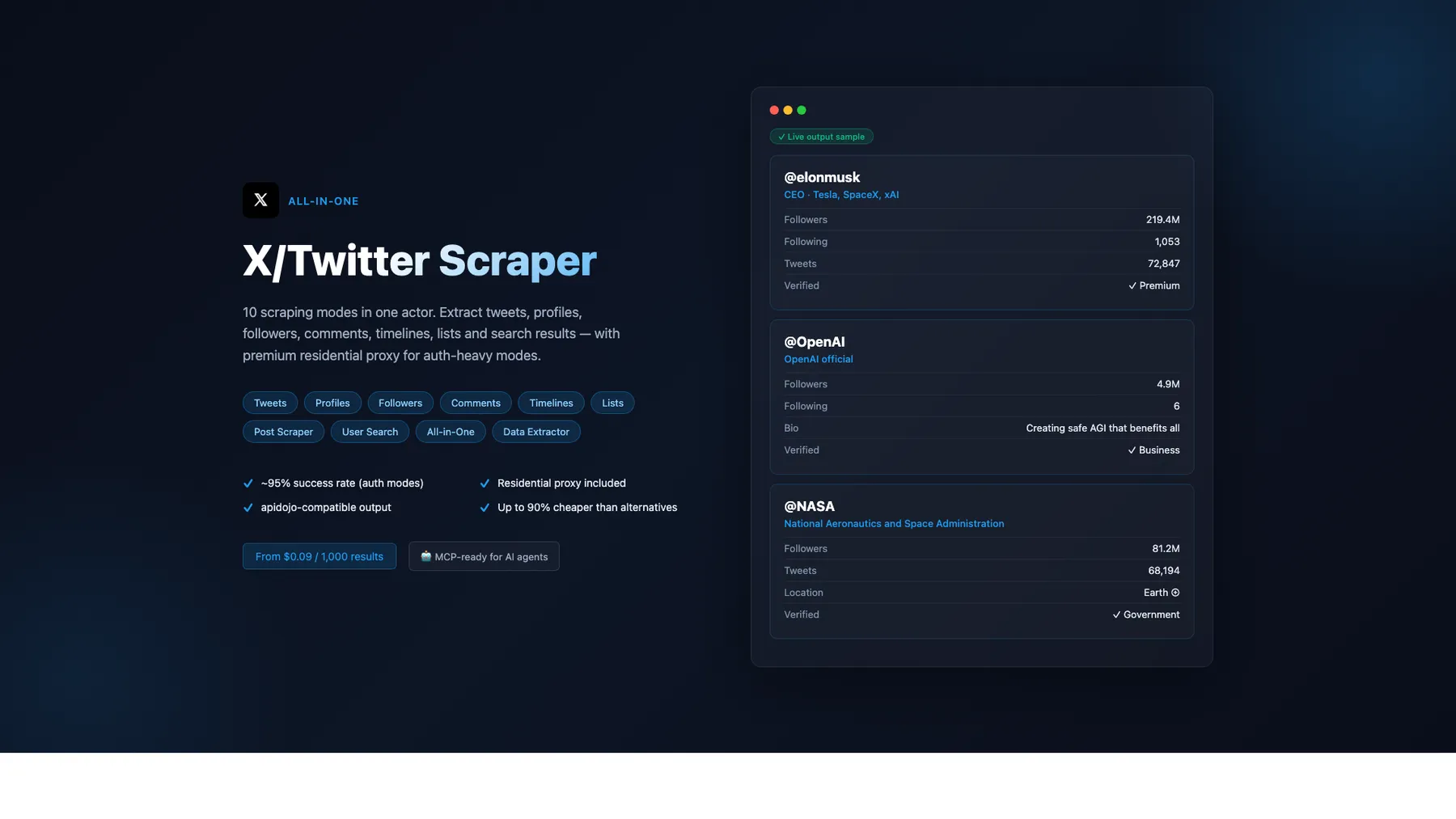

X/Twitter scraper — 10 modes: tweets, profiles, followers, comments, timelines, lists, search & more. From $0.09/1K — up to 90% cheaper than alternatives. Premium residential proxy (~95% success rate). apidojo-compatible output. MCP-ready for AI agents.

Pricing

from $0.20 / 1,000 tweet results

Rating

0.0

(0)

Developer

Japi Cricket

Maintained by CommunityActor stats

2

Bookmarked

90

Total users

21

Monthly active users

13 days ago

Last modified

Categories

Share

What does All-in-One X/Twitter Scraper do?

Scrape tweets, profiles, followers, timelines, comments, lists & more — residential proxy included, 128 MB memory. 12 modes, pay-per-result from $0.20/1K. Works with AI agents (Claude, GPT, Cursor) via MCP.

Three modes work without any login cookies (posts, profiles, data extractor).

Why this scraper (vs other Apify X / Twitter scrapers)

- 12 modes vs ~4 in competitor multi-mode actors — tweets, profiles, search, hashtag, timelines, followers, following, comments, lists, user-search, omnibus, data-extractor. 4 of these modes (hashtag / follower / following / user-search) have no standalone Apify Store competitor — unique market coverage.

- 40-50% cheaper — pricing from $0.20/1K results vs $0.40/1K on the leading competitor (apidojo Tweet Scraper V2). No subscription, no minimums beyond per-mode billing event.

- 19+ exclusive output fields that competitors don't expose — including

viewCount,bookmarkCount,isLongForm(Q1 long-form detection),editHistoryTweetIds/isEdited/editableUntilMsecs(Q3 edit-window),communityId(Q6),translation(Q7),article(Q8),affiliateBadge(Q9),verifiedSince(Q10),geoflattened object,isPinned,isConversationControlled,fastFollowersCount,pinnedTweetIds[],canHighlightTweets,professional,canDm,normalizedCreatedAtISO 8601. - Residential proxy included — Evomi-routed traffic on authenticated modes at no extra per-result cost (competitors typically charge separately or bundle into higher tiers).

- 128 MB memory — runs efficiently on minimal resources.

- Drop-in replacement for popular alternatives — every output item carries

id/fullText/possiblySensitive/extendedEntities/protected/inReplyToId/quoteId/inputSourcealiases so existing pipelines keep working with zero code changes. OptionalflattenAuthor: trueflag (x-scraper omnibus mode) adds top-levelauthorUserName/authorFollowers/ etc. for automation-lab/twitter-scraper compat. - ≥95% field accuracy — every mode validated against fresh Chrome ground truth (most recent: 2026-04-25 across 60 our-runs + 22 competitor-runs); ongoing daily-test pipeline against operator-curated GT corpus.

- AI-ready — works with Claude, GPT, and Cursor via MCP protocol.

- Run-level telemetry rows (build 0.1.110+) — every run emits

bandwidth_report+learning-loop-digest+_run_summaryrows so downstream orchestrators / analytics pipelines can detect crashes, drift, and cost anomalies. No competitor exposes this.

What's New (2026-05-18 — Day-22 competitive-parity closer)

Per-mode field-by-field audit against the top 5 X / Twitter scrapers on the Apify Store confirmed competitive lead on field coverage. Two narrow gaps closed in this build:

-

isConversationControlled— boolean on all 6 tweet-emitting modes (post / search / hashtag / timeline / comment / list / data-extractor when routed via GraphQL). True when the author has restricted reply visibility (X'slegacy.conversation_controlpolicy). Closes the last per-tweet competitive-parity gap vs apidojo Tweet Scraper V2. Null on syndication path (X's syndication API does not surface this field; we emit null rather than guess). -

flattenAuthor: trueopt-in input flag (x-scraper omnibus mode only). When enabled, copies key author fields to top-level:authorUserName,authorName,authorFollowers,authorFollowing,authorIsBlueVerified,authorIsVerified. Drop-in compatibility with automation-lab/twitter-scraper's flat schema. The nestedauthorobject is always retained — pure additive. Other modes have canonical emit shapes that are NOT changed by this flag.

After these two ships, no documented competitive field gap remains across the 12 modes. The remaining improvement axes are operational (reliability + GT corpus) + marketing (Apify Store positioning) — both off the field-coverage axis.

What's New (2026-05-14 — build 0.1.110 run-level telemetry)

Every successful run now emits a final _run_summary row as its last pushData. The row carries {schemaVersion, runId, build, mode, items, errors, incidents, healthy, finishedAt} and serves two downstream uses:

- Crash detection — if a run shows

status: SUCCEEDEDat the Apify level but the dataset has NO_run_summaryrow, the scraper crashed before reaching its final cleanup block. Orchestrators and pipelines can treat row absence as a definitive "scraper logic did not complete" signal, separate from the per-itemtype: 'error'rows that already exist. - Run-health at-a-glance —

healthy: truefires only when botherrors === 0andincidents === 0. False on any run that produced an error item OR fired any operational-telemetry incident (RATE_LIMITED / AUTH_EXPIRED / etc.).

The row's schema is additive (new _run_summary type with its own field set) — no existing tweet / user / comment / error row was changed. Schema is versioned via schemaVersion: 1 to make future shape changes safe.

Margin-below-target log line — runs that estimate <50% margin now emit a ⚠️ Estimated margin X% is below 50% target WARN line in the run log alongside the existing Cost + margin estimate info line. Operator-visible signal for the 50%-margin SLA target without parsing the full payload.

What's New (2026-05-02 — §62 X-only audit + drift detection)

Daily-test infrastructure caught X's overnight bundle bump within 36 hours of yesterday's drift-detection ship. 8 GraphQL queryIds rotated simultaneously (TweetDetail / TweetResultByRestId / SearchTimeline / UserTweets / UserTweetsAndReplies / Followers / Following / ListLatestTweetsTimeline). Pre-flight detection fired BEFORE fan-out — operator email-flagged immediately, scraper auto-rotates at runtime, push-and-baseline closes the loop within hours of detection.

12-mode gap matrix verified: read 1-5 dataset items per X mode from past 100 successful runs, diffed against EXPECTED_FIELDS_BY_PLATFORM_MODE.x. Result: 11 of 12 modes have zero EXPECTED-field gaps. The 1 exception (x-post-scraper) returned an error item from the rotation event itself — proving the drift-detection ship works end-to-end.

Ground-truth comparator extended back to 11 fields: joinedDate re-added to compareX with a ±2-month tolerance window after a 9-handle cross-check (8/9 match). The window absorbs UTC-vs-local-rendered TZ-flip artifacts while still catching genuine semantic divergence.

4 ambiguous-handle test cases surfaced for the X-team upstream-investigation case (handles where X's UserByScreenName returns a less-famous registered owner): aaronlevie / tim / obama / satya. X enforces handle uniqueness — these are not scraper bugs, just X-side data.

Full audit: docs/audit/2026-05-02-x-only-improvements.md. Plan section: §62 in ~/.claude/plans/new-daily-test-plan-moonlit-journal.md.

What's New (build 0.1.75 — 2026-04-26)

Sprint A (today, 2026-04-26): appended 4 final apidojo-compat naming aliases — inReplyToId (= inReplyToTweetId), inReplyToUsername (= inReplyToUserName), quoteId (= quotedTweet?.tweetId), and inputSource (= input on every item). With these, the actor is now a complete drop-in replacement for apidojo schema-wise on tweet and per-item fields.

Earlier (build 0.1.73 — 2026-04-25)

After a head-to-head competitive analysis vs. the top X-scraper actors on the Apify Store (60 baseline runs + 22 competitor benchmark runs), we shipped 9 additive output fields without changing any existing behaviour. Pure additive: every existing integration keeps working unchanged.

Tier 1 — apidojo-compat aliases + every-item metadata

| Field | Where | What it does |

|---|---|---|

id | every tweet + user item | Mirrors tweetId / twitterId. Drop-in compatibility for pipelines that reference item.id. |

fullText | tweet items | Mirrors text (carries the full long-form body when isLongForm: true). |

possiblySensitive | tweet items | Mirrors isSensitive. Always boolean. |

extendedEntities | tweet items | {media: [...]} wrapper for media. Always present (empty {media: []} when none). |

protected | user items | Lowercase alias for isProtected. |

scrapedAt | every item | UTC ISO 8601 stamp of when this snapshot was actually parsed from X's response. Useful for dataset cataloging, dedup-by-window, and audit trails. Cached items keep their original parse time. |

Tier 2 — real new signal fields

| Field | Where | What it does |

|---|---|---|

fastFollowersCount | user items | legacy.fast_followers_count from X — bot-prone signal for influencer / lead-quality analysis (high ratio of fast-followers to total = suggested-follow-driven, not organic). null when X omits the field. |

pinnedTweetIds | user items | Array form of pinned tweet IDs (matches the wider X scraper schema family). Empty [] when none. The legacy singular pinnedTweetId is preserved. |

isPinned | tweet items | true when this tweet's tweetId matches the author's pinned tweet ID. Useful for de-emphasising pinned tweets in chronological analysis. |

All 9 fields ship alongside the existing 35-43 output fields per item — nothing was removed or renamed.

Getting Started

- Click "Try for free" at the top of this page

- Choose a scraping mode (Post Scraper, Profile Scraper, Tweet Search, Hashtag Scraper, Timeline, Follower, Following, Comment, List, or Data Extractor)

- Paste tweet URLs, usernames, or enter a search query

- Click Start — results appear in the Dataset tab within seconds

- Download as JSON, CSV, or Excel — or connect via API, n8n, Make, or Zapier

Residential proxy is included — no setup required. 3 modes work without login cookies (post, profile, data-extractor).

Easiest Way to Start: Paste a URL

Just paste any X/Twitter URL into the "Start URLs" field and hit Start. The scraper auto-detects the type:

| URL Pattern | Auto-Detected Mode |

|---|---|

x.com/user/status/1234567890 | Post Scraper (tweet by URL) |

x.com/username | Profile Scraper |

x.com/i/lists/1234567890 | List Scraper |

For Tweet Search and User Search, enter keywords in the "Search Queries" field.

10 Scraping Modes

| Mode | Description | Auth Required | Best For |

|---|---|---|---|

| x-post-scraper | Scrape specific tweets by URL | None | Tweet archiving, engagement tracking |

| x-profile-scraper | Full user profiles with stats | None | Lead enrichment, influencer research |

| x-data-extractor | Lightweight tweet URL scraper | None | Quick tweet data extraction |

| x-search-scraper | Search tweets by keywords/phrases | Login cookies | Social listening, trend monitoring |

| x-hashtag-scraper | Search tweets by hashtag | Login cookies | Hashtag tracking, trend analysis |

| x-timeline-scraper | Scrape a user's tweet timeline | Login cookies | Content analysis, user monitoring |

| x-follower-scraper | Get followers of an account | Login cookies | Audience analysis, lead discovery |

| x-following-scraper | Get accounts a user follows | Login cookies | Network mapping, competitive research |

| x-comment-scraper | Get replies/conversation threads | Login cookies | Sentiment analysis, community insights |

| x-list-scraper | Scrape tweets from X/Twitter lists | Login cookies | Curated feed monitoring |

Standard vs Authenticated Mode

3 modes work without any login cookies. 7 modes require your X/Twitter cookies for full functionality.

What Works Without Cookies (Standard Mode)

No login, no risk. Just paste and scrape:

- Post Scraper: Full tweet data with engagement metrics, media, author info, quoted tweets

- Profile Scraper: Full user profile with followers, bio, verification, account stats

- Data Extractor: Same as Post Scraper (lightweight mode)

What Requires Cookies (Authenticated Mode)

Provide your auth_token and ct0 cookies to unlock search and social graph features:

| Mode | What It Unlocks |

|---|---|

| Tweet Search (x-search-scraper) | Search tweets by keywords, phrases, and advanced operators |

| Hashtag Scraper (x-hashtag-scraper) | Search tweets by hashtag (e.g., #AI, #crypto) |

| Timeline (x-timeline-scraper) | Scrape a user's full tweet history |

| Followers (x-follower-scraper) | Get an account's follower list |

| Following (x-following-scraper) | Get the accounts a user follows |

| Comments (x-comment-scraper) | Get replies and conversation threads |

| List Scraper (x-list-scraper) | Scrape tweets from curated lists |

When to Use Which Mode

| Your Goal | Recommended Mode | Cookies Needed? |

|---|---|---|

| Save a specific tweet with engagement data | x-post-scraper | No |

| Get a user's profile info and follower count | x-profile-scraper | No |

| Monitor brand mentions or trending topics | x-search-scraper | Yes |

| Track tweets under a specific hashtag | x-hashtag-scraper | Yes |

| Analyze what someone has been tweeting | x-timeline-scraper | Yes |

| Build a list of an account's followers | x-follower-scraper | Yes |

| See who an account follows | x-following-scraper | Yes |

| Analyze public reactions to a tweet | x-comment-scraper | Yes |

| Monitor a curated Twitter list | x-list-scraper | Yes |

Pricing — Pay Per Result, No Monthly Fee

| Mode | Price / 1K results (Starter) | Price / 1K results (Scale) | Price / 1K results (Business) |

|---|---|---|---|

| x-post-scraper | $0.24 | $0.22 | $0.20 |

| x-profile-scraper | $0.24 | $0.22 | $0.20 |

| x-search-scraper | $0.24 | $0.22 | $0.20 |

| x-hashtag-scraper | $0.24 | $0.22 | $0.20 |

| x-timeline-scraper | $0.24 | $0.22 | $0.20 |

| x-comment-scraper | $0.24 | $0.22 | $0.20 |

| x-list-scraper | $0.24 | $0.22 | $0.20 |

| x-follower-scraper | $0.24 | $0.22 | $0.20 |

| x-following-scraper | $0.24 | $0.22 | $0.20 |

| x-data-extractor | $0.30 | $0.27 | $0.25 |

Apify Subscription Discounts

Higher Apify subscription plans get automatic discounts on all modes:

| Apify Plan | Discount |

|---|---|

| Free / Starter | — |

| Scale | 5% off |

| Business | 10% off |

| Enterprise | 15% off |

Cost examples:

- 1,000 tweets from search: $0.24

- 500 user profiles: $0.12

- 10,000 followers: $2.40

- 100 tweet URLs: $0.024

You only pay for results delivered. Platform compute costs are included.

Why This X/Twitter Scraper?

- Among the cheapest on Apify — $0.24/1K results across all core modes — significantly below competitor medians

- 10 modes in one actor — tweets, profiles, search, timelines, followers, comments, lists, user search, all-in-one, data extractor — one integration to maintain

- Residential proxy included — Evomi traffic built in; unauthenticated modes auto-skip to datacenter for bandwidth savings

- HTTP-only — lightweight, no browser required

- 128 MB memory — runs efficiently on minimal resources

- ≥95% field accuracy verified against Chrome ground truth + backed by a 26-case parser regression suite that runs on every build (build 0.1.60, 2026-04-24)

- Active cookie rotation — multi-cookie

X_LOGIN_COOKIESpools cycle automatically when X burns an entry; emitsCOOKIE_EXHAUSTEDwhen all are burned - Cross-run cache (optional) — opt into a 24 h KV cache with

useCrossRunCache: trueso scheduled/polling runs skip re-scraping tweets and profiles that are already fresh - Sparse output (optional) — pass

fields: ["tweetId","text","likeCount"]to emit only the keys you need; shrinks payloads for Google Sheets / HubSpot / CSV integrations - Graceful rate-limit handling — honours X's

x-rate-limit-resetheader; backoff waits exactly as long as the server asked - Self-healing on doc-ID rotation — when X rotates a GraphQL

queryId, the scraper fetches the live bundle, resolves the new ID, and retries; still logs loudly for a follow-up PR - Circuit breaker — aborts a doomed batch after 10 same-category failures in a row (RATE_LIMITED / AUTH_EXPIRED / etc.) instead of burning CU on requests that are guaranteed to fail

- Resume capability — pick up where failed runs left off via

resumeFromDataset, or skip already-seen items viaonlyIfChangedSince: {dataset, since} - Optional deep user hydration —

hydrateFullUser: trueenriches follower / following / user-search results with the fields X trims on list endpoints (canHighlightTweets, verifiedSince, full professional) - Completion webhook — set

completionWebhookUrlto POST a summary to your own endpoint (Slack / n8n / Zapier) when the run finishes - Schema-versioned output — every item carries

schemaVersion: "1.0.0"so downstream consumers can pin behaviour - Clean text output —

text,description, and other user-visible string fields are HTML-entity decoded (&→&,'→') and stripped of zero-width / control characters, so output pastes straight into spreadsheets / CRMs without surprises - Auto-batch suggestions — on small runs (<5 results) the scraper logs a one-line tip pointing at the bulk-input fields;

summary.autoBatchTipcarries the same data for dashboards. No pricing change; just a nudge toward profitable batch sizes - MCP-compatible — works with AI agents (Claude, GPT, Cursor) out of the box

Cookies: bring your own (customer-supplied)

7 of 10 modes require X/Twitter login cookies. Here's how to get them:

- Open x.com in your browser and log in

- Open Developer Tools (F12 or Cmd+Opt+I)

- Go to Application > Cookies > x.com

- Copy the values of

auth_token(HttpOnly — visible in DevTools cookie panel) andct0 - Paste as:

auth_token=YOUR_TOKEN; ct0=YOUR_CT0

Modes that do NOT require cookies: x-post-scraper, x-profile-scraper, x-data-extractor.

Important: this actor does NOT include any X/Twitter cookies for customer runs

When a mode requires authentication, you must paste your own cookies into the loginCookies input field. There is no shared cookie pool, no built-in fallback, and no operator-side cookie injection — every authenticated run uses the cookies you provide, attributing the activity to your X account.

This is intentional. Account-flag risk lives with the supplied cookies, not with the actor author. Top-MAU X scrapers on Apify follow the same pattern.

Customer usage — supply cookies via input

Cookie rotation: for large runs, provide multiple cookies separated by |||. The scraper uses the first cookie by default and automatically cycles to the next entry whenever X burns the active one (AUTH_EXPIRED response). If every pooled cookie is burned during the same run, a COOKIE_EXHAUSTED error item is emitted (see Error Items):

Operator daily-testing only

The daily-test orchestrator passes the operator's cookies via input.loginCookies (same input field customers use). This keeps customer and operator paths uniform and prevents accidental operator-cookie leakage to customer runs. The X_LOGIN_COOKIES environment variable is no longer read by the scraper as of build 2026-05-08 — operator cookies live on the orchestrator and the cookie-health-check probe, never on this scraper actor.

Always use a dedicated burner X account for testing, never your primary personal account — X's anti-automation system can flag accounts that show sustained API access patterns.

Migration note (2026-05-08)

Earlier versions of this actor accepted an X_LOGIN_COOKIES env-var fallback when loginCookies was empty. That fallback has been removed for the customer-vs-operator cookie boundary. Customer runs without supplied cookies now fail cleanly for cookie-required modes instead of silently using whatever was in the env var.

Cookie Health Check

Every authenticated run validates your auth_token + ct0 against the X API at startup. The result is surfaced in the run logs:

If validation fails, you'll see:

No cookies or cookie fingerprints are stored anywhere — not in the run's key-value store, not in named KV stores. Cookies live only in the loginCookies input field per-run. This is an explicit policy: we persist no cookie-derived data, even hashed.

Typical cookie lifetime on X is 30–60 days. If your scheduled runs start failing with auth errors, refresh the cookies in your loginCookies input field from a logged-in browser session.

Residential Proxy (Included by Default)

The scraper defaults to proxyTier: "residential" — Evomi residential traffic is included in the per-result price, no extra setup. The unauthenticated modes (x-post-scraper, x-profile-scraper, x-data-extractor) automatically skip the residential proxy and use datacenter IPs because they hit syndication / guest-token endpoints that don't need residential routing — this saves bandwidth at zero accuracy risk.

Opt-out: Datacenter only

If you want every mode to run on datacenter IPs (e.g. you're scraping only tweet URLs and want absolute-minimum latency), set proxyTier to "none":

When Residential Matters

- Cookies expiring fast — residential IPs look more like real users, reducing session invalidation

- Rate limits on authenticated modes — tweet search, timeline, followers, comments

- Large-scale runs — scraping thousands of results per session with login cookies

These are exactly the modes that run on residential by default.

Alternative: Bring Your Own Proxy

If you already have a residential proxy subscription, set proxyTier to "custom" and paste your proxy URL into the Proxy Configuration field. Apify Proxy is not supported — this actor is Evomi-only by policy.

MCP Integration for AI Agents

This scraper works with AI agents via the Model Context Protocol (MCP). Connect it to Claude Desktop, Cursor, GPT, or any MCP-compatible client.

Setup:

- Go to mcp.apify.com

- Add "All-in-One X/Twitter Scraper" to your MCP server

- Ask your AI: "Find the latest tweets about AI from NASA"

Example prompts for your AI agent:

- "Scrape the profile of @elonmusk on X"

- "Search X for tweets about web scraping from the last week"

- "Get the followers of @apify on Twitter"

- "Find all replies to this tweet: https://x.com/..."

Works with Claude Desktop, Cursor, GPT via MCP, and any other MCP-compatible AI client.

Integrations

n8n

- Add the Apify node in your n8n workflow

- Select "All-in-One X/Twitter Scraper" as the actor

- Configure the mode and input parameters

- Connect the output to your CRM, Google Sheets, or database

Make.com (Integromat)

- Add the Apify module to your scenario

- Select "Run Actor" and choose this scraper

- Map the JSON output fields to your downstream modules

- Use for automated tweet monitoring, lead enrichment, or CRM syncing

Zapier

- Create a new Zap with Apify as the trigger or action

- Select "Run Actor" and configure with this scraper's actor ID

- Map output fields to Google Sheets, HubSpot, Salesforce, or Slack

- Trigger on schedule or from a webhook

REST API & SDKs

Use the Apify API, JavaScript SDK, or Python SDK for programmatic access. See the Python examples in each mode section below.

Mode 1: Post Scraper (x-post-scraper)

Scrape specific tweets by URL with full engagement data, media, author info, and quoted tweets. No login cookies required.

Pricing tip: batch ≥5 tweets per run to amortize the per-run actor-start fee. Single-item runs are ~4× less cost-efficient than 10-item batches because the fixed startup overhead dominates a 1-item run.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-post-scraper" |

tweetURLs | string[] | Yes | Tweet URLs or tweet IDs |

maxResults | integer | No | Max results (default: 100) |

Input Example

Output Fields

| Field | Type | Description | Example |

|---|---|---|---|

type | string | Always "tweet" | "tweet" |

tweetId | string | Unique tweet ID | "1728108619189874825" |

url | string | Full X.com URL | "https://x.com/elonmusk/status/..." |

twitterUrl | string | Legacy twitter.com URL | "https://twitter.com/elonmusk/status/..." |

text | string | Tweet content. HTML entities are decoded (& → &, ' → ', etc.) and zero-width / control characters are stripped. Newlines + emoji are preserved. | "More than 10 per human on average" |

retweetCount | integer | Retweet count | 8880 |

replyCount | integer | Reply count | 5931 |

likeCount | integer | Like count | 92939 |

quoteCount | integer | Quote tweet count | 2862 |

bookmarkCount | integer | Bookmark count | 605 |

viewCount | integer | View count | 37422895 |

createdAt | string | Tweet timestamp (X native format) | "Fri Nov 24 17:49:36 +0000 2023" |

normalizedCreatedAt | string | Same timestamp as createdAt in ISO 8601 (UTC). Additive — the native field is kept for backward compatibility. null only when X omits the timestamp. | "2023-11-24T17:49:36.000Z" |

lang | string | Language code (ISO 639-1) | "en" |

source | string | Posting app/device | "Twitter for iPhone" |

isReply | boolean | Is a reply | false |

isRetweet | boolean | Is a retweet | false |

isQuote | boolean | Is a quote tweet | true |

conversationId | string | Thread root ID | "1728108619189874825" |

inReplyToTweetId | string | Parent tweet ID (if reply) | null |

inReplyToUserId | string | Parent user ID (if reply) | null |

inReplyToUserName | string | Parent username (if reply) | null |

hashtags | array | Hashtag strings | ["AI", "space"] |

urls | array | URL objects | [{url, expandedUrl, displayUrl}] |

userMentions | array | Mention objects | [{userName, name, twitterId}] |

media | array | Media objects (photos, videos, GIFs). Each entry: {type, url, expandedUrl, videoUrl, durationMs, bitrate, videoVariants, width, height, aspectRatio, altText}. Video enrichment (duration / bitrate / aspectRatio) is populated when X provides them. | [{type:"video", url, videoUrl, durationMs:30580, bitrate:2176000, aspectRatio:1.7778}] |

geo | object | Flattened geolocation when the tweet is geotagged: {lat, lng, placeName, placeType, country}. null for untagged tweets. Complements the raw place object. | {lat: 37.7749, lng: -122.4194, placeName: "San Francisco, CA", country: "United States"} |

card | object | Link preview card | {type, title, description, url} |

place | object | Geolocation data | null |

author | object | Author profile | {userName, name, twitterId, followers, ...} |

quotedTweet | object | Quoted tweet (full object) | {tweetId, text, author, ...} |

isLongForm | boolean | X Premium long-form tweet (>280 chars via note_tweet). text carries the full body when true. | false |

isSensitive | boolean | X-marked possibly sensitive (NSFW/graphic). Always boolean (default false) to match X's UI — true only when X explicitly flags. | false |

isConversationControlled | boolean | (0.1.118 — Day-22) Author-driven reply restriction state. true when the author has limited reply visibility (X's legacy.conversation_control policy: Community / ByInvitation). false when unrestricted. null on syndication path (X does not surface this field on syndication). Emitted on all 6 tweet-emitting modes: post / search / hashtag / timeline / comment / list / data-extractor (when routed via GraphQL). | false |

editHistoryTweetIds | array | Tweet IDs forming the edit chain (current tweet is last entry). Contains at least [tweetId] whenever the tweet is still within its edit window; additional IDs appear after edits. null only when X does not expose any edit metadata for the tweet. | ["1728108619189874825"] |

isEdited | boolean | Derived: editHistoryTweetIds.length > 1. | false |

editableUntilMsecs | integer | Unix ms when the tweet stops being editable. null when not editable. | 1677538334000 |

communityId | string | ID of the X Community this tweet was posted in (if any). null for regular public tweets. | null |

translation | object | Auto-translation when X attached one. Shape: {text, sourceLang, targetLang, translator}. null when absent. | null |

article | object | X Article metadata when the tweet carries a longform article. Shape: {id, title, previewText, coverMediaKey, coverMediaUrl, wordCount}. null for non-article tweets. | null |

id | string | (0.1.73 alias) Mirrors tweetId for drop-in compatibility with pipelines that reference item.id. | "1728108619189874825" |

fullText | string | (0.1.73 alias) Mirrors text. Carries the full long-form body when isLongForm: true. | "More than 10 per human on average" |

possiblySensitive | boolean | (0.1.73 alias) Mirrors isSensitive. Always boolean. | false |

extendedEntities | object | (0.1.73) {media: [...]} wrapper. Always present (empty {media: []} when no media). | {"media": []} |

isPinned | boolean | (0.1.73) true when this tweet's ID matches the author's pinned tweet ID. Always false on syndication path (no author-pinned info available). | false |

scrapedAt | string | (0.1.73) UTC ISO 8601 stamp of when this snapshot was parsed. Cached items keep their original parse time. | "2026-04-25T10:40:33.131Z" |

Output Example

Use Cases

- Tweet archiving: Save important tweets with full engagement data before they're deleted

- Engagement tracking: Monitor how specific tweets perform over time

- Content research: Analyze viral tweets to understand what resonates

- Fact-checking: Capture tweet content with metadata for verification

How to Run

Python:

Mode 2: Profile Scraper (x-profile-scraper)

Scrape full X/Twitter user profiles with follower counts, bio, verification status, and account metadata. No login cookies required.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-profile-scraper" |

twitterHandles | string[] | Yes | Usernames, @handles, or profile URLs |

maxResults | integer | No | Max results (default: 100) |

Input Example

Output Fields

| Field | Type | Description | Example |

|---|---|---|---|

type | string | Always "user" | "user" |

twitterId | string | User REST ID | "11348282" |

userName | string | @handle | "NASA" |

name | string | Display name | "NASA" |

url | string | X.com URL | "https://x.com/NASA" |

twitterUrl | string | Legacy twitter.com URL | "https://twitter.com/NASA" |

description | string | Bio text. Same HTML-entity decoding + control-char stripping as text so the bio pastes cleanly into downstream tools. | "The Moon is the mission..." |

location | string | Self-reported location | "Moonbound" |

website | string | Profile website URL | "https://t.co/9NkQJKAVks" |

isVerified | boolean | Legacy verified (pre-2023) | false |

isBlueVerified | boolean | X Premium subscriber | true |

verifiedType | string | Verification type | "Government", "Business", or null |

profilePicture | string | Avatar URL (400x400) | "https://pbs.twimg.com/..." |

coverPicture | string | Banner image URL | "https://pbs.twimg.com/..." |

followers | integer | Follower count | 90633649 |

following | integer | Following count | 117 |

statusesCount | integer | Total tweets posted | 73634 |

favouritesCount | integer | Total likes given | 16673 |

listedCount | integer | Lists the user is on | 96785 |

mediaCount | integer | Total media posted | 27827 |

createdAt | string | Account creation date (X native format) | "Wed Dec 19 20:20:32 +0000 2007" |

normalizedCreatedAt | string | Same timestamp in ISO 8601 (UTC) | "2007-12-19T20:20:32.000Z" |

isProtected | boolean | Private account | false |

canDm | boolean | DMs open | false |

professional | object | Professional account info — {type, category:[{name, iconName, id}]} | {type: "Creator", category: []} |

pinnedTweetId | string | Pinned tweet ID | "2040213736883892403" |

affiliateBadge | object | Badge when account is affiliated with a verified org (e.g. @Tesla staff get a Tesla badge). Shape: {labelType, description, badgeUrl, sourceUrl, sourceUserScreenName}. null otherwise. | {"labelType":"BusinessLabel","description":"X","badgeUrl":"https://pbs.twimg.com/..."} |

verifiedSince | string | Verification timestamp (ISO 8601). null when X does not expose a positive timestamp (common for legacy verifications and X-affiliate accounts). | "2017-10-05T08:46:27.580Z" |

canHighlightTweets | boolean | Whether this user has the Highlights tab enabled. Populated on dedicated profile lookups (x-profile-scraper, x-scraper); null on list-mode endpoints (followers / following / user-search) where X serves a trimmed user object that omits the flag. | true |

id | string | (0.1.73 alias) Mirrors twitterId for drop-in compatibility with pipelines that reference item.id. | "44196397" |

protected | boolean | (0.1.73 alias) Lowercase alias for isProtected. | false |

fastFollowersCount | integer | (0.1.73) legacy.fast_followers_count from X — number of accounts following this user via the suggested-follow path. High ratio of fast-followers to total followers is a useful bot-prone signal for influencer / lead-quality analysis. null when X omits the field. | 0 |

pinnedTweetIds | array | (0.1.73) Array form of pinned tweet IDs for cross-scraper schema parity. Currently always 0 or 1 element (X exposes one pin); array shape is forward-compat. Empty [] when none pinned. The legacy singular pinnedTweetId is preserved. | ["2047881966268117064"] |

scrapedAt | string | (0.1.73) UTC ISO 8601 stamp of when this snapshot was parsed. Cached items keep their original parse time. | "2026-04-25T10:40:33.705Z" |

Output Example

Use Cases

- Lead enrichment: Enrich CRM contacts with X profile data (bio, followers, verification)

- Influencer research: Identify key accounts by follower count and verification status

- Competitor tracking: Monitor competitor accounts for follower growth

- Account verification: Check if accounts are verified, professional, or protected

How to Run

Python:

Mode 3: Tweet Search (x-search-scraper)

Search tweets by keywords, phrases, and advanced operators with the Query Wizard. Supports date ranges, engagement filters, media filters, and geo-targeting.

Login cookies required. Provide

auth_tokenandct0cookies from x.com.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-search-scraper" |

searchQueries | string[] | Yes | Search queries with advanced syntax |

maxResults | integer | No | Max results per query (default: 100) |

sort | string | No | "Latest", "Top", or "Latest + Top" |

loginCookies | string | Yes | "auth_token=xxx; ct0=yyy" |

languageFilter | string | No | ISO 639-1 language code (e.g., "en") |

start | string | No | Start date ("YYYY-MM-DD") |

end | string | No | End date ("YYYY-MM-DD") |

minimumFavorites | integer | No | Min likes filter |

author | string | No | From user (Query Wizard) |

Input Example

Output Fields

Same tweet fields as Mode 1 (Post Scraper). Plus optional searchTerm field when includeSearchTerms is enabled.

Output Example

Same format as Mode 1. Each tweet includes full engagement data, author profile, media, entities, and metadata.

Use Cases

- Social listening: Monitor mentions of your brand, product, or industry

- Trend monitoring: Track emerging topics and hashtags in real-time

- Competitive analysis: Monitor what competitors' audiences are saying

- Market research: Analyze public sentiment around events, launches, or announcements

How to Run

Python:

Mode 4: Timeline Scraper (x-timeline-scraper)

Scrape a user's tweet timeline including original tweets, retweets, and optionally replies.

Login cookies required for best results. Guest token works for basic access.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-timeline-scraper" |

twitterHandles | string[] | Yes | Usernames or profile URLs |

maxResults | integer | No | Max tweets per profile (default: 100) |

includeReplies | boolean | No | Include replies (default: false) |

loginCookies | string | Recommended | "auth_token=xxx; ct0=yyy" |

Input Example

Output Fields

Same tweet fields as Mode 1 (Post Scraper).

Use Cases

- Content analysis: Analyze a user's posting patterns, topics, and engagement

- User monitoring: Track what key accounts are tweeting about

- Research: Collect a user's tweet history for academic or business research

- Archiving: Save a user's tweet timeline for record-keeping

How to Run

Python:

Mode 5: Follower Scraper (x-follower-scraper)

Get the list of accounts that follow a specific X/Twitter account. For the following list (accounts a user follows), use Mode 9 (x-following-scraper).

Login cookies required.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-follower-scraper" |

twitterHandles | string[] | Yes | Usernames or profile URLs |

maxResults | integer | No | Max followers per profile (default: 100) |

loginCookies | string | Yes | "auth_token=xxx; ct0=yyy" |

hydrateFullUser | boolean | No | Default false. Set to true to run a follow-up UserByScreenName call per returned follower, filling canHighlightTweets, verifiedSince, and the fuller professional object that X trims from list-endpoint responses. Doubles GraphQL call count for this mode. |

Input Example

Output Fields

Same user profile fields as Mode 2 (Profile Scraper), plus:

| Field | Type | Description | Example |

|---|---|---|---|

followerOf | string | Source profile | "apify" |

Use Cases

- Audience analysis: Understand who follows a competitor or influencer

- Lead discovery: Find potential customers from a competitor's follower list

- Influencer marketing: Identify relevant followers for partnership outreach

- Network mapping: Map connections between accounts

How to Run

Python:

Mode 6: Comment Scraper (x-comment-scraper)

Get replies and conversation threads for specific tweets.

Login cookies required.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-comment-scraper" |

tweetURLs | string[] | Yes | Tweet URLs to get replies for |

maxResults | integer | No | Max replies per tweet (default: 100) |

loginCookies | string | Yes | "auth_token=xxx; ct0=yyy" |

Input Example

Output Fields

Same tweet fields as Mode 1, but with type: "comment" instead of type: "tweet".

Use Cases

- Sentiment analysis: Analyze public reactions to announcements or events

- Community insights: Understand what topics drive conversation

- Customer feedback: Monitor replies to brand tweets for support issues

- Trend analysis: Identify emerging opinions in reply threads

How to Run

Python:

Mode 7: Hashtag Scraper (x-hashtag-scraper)

Search X/Twitter for tweets containing a specific hashtag. Ideal for tracking trending topics, events, and campaigns by hashtag.

Login cookies required.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-hashtag-scraper" |

searchQueries | string[] | Yes | Hashtags or hashtag-based queries (e.g., "#AI", "#crypto -filter:retweets") |

maxResults | integer | No | Max tweets per query (default: 100) |

sort | string | No | "Latest", "Top", or "Latest + Top" |

loginCookies | string | Yes | "auth_token=xxx; ct0=yyy" |

Input Example

Output Fields

Same tweet fields as Mode 1 (Post Scraper).

Use Cases

- Trend tracking: Monitor what's being said under a specific hashtag in real-time

- Event coverage: Collect tweets from live events and conferences

- Campaign monitoring: Track your own or competitor hashtag campaigns

- Market research: Analyze public discussion around industry hashtags

How to Run

Python:

Mode 8: List Scraper (x-list-scraper)

Scrape tweets from X/Twitter lists (curated collections of accounts).

Login cookies required.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-list-scraper" |

listURLs | string[] | Yes | Full list URLs — must be https://x.com/i/lists/{id} format |

maxResults | integer | No | Max tweets per list (default: 100) |

loginCookies | string | Yes | "auth_token=xxx; ct0=yyy" |

Note on list URL format:

listURLsrequires the full URL (https://x.com/i/lists/1234567890). Bare list IDs are not supported.

Input Example

Output Fields

Same tweet fields as Mode 1 (Post Scraper).

Use Cases

- Curated feed monitoring: Track tweets from industry-specific lists

- News aggregation: Collect tweets from journalist or media lists

- Research: Monitor topic-specific lists for academic or market research

- Competitive intelligence: Track curated competitor lists

How to Run

Python:

Mode 9: Following Scraper (x-following-scraper)

Get the list of accounts a specific X/Twitter user follows. For the reverse (getting an account's followers), use Mode 5 (x-follower-scraper).

Login cookies required.

Input Parameters

| Parameter | Type | Required | Description |

|---|---|---|---|

scrapeMode | string | Yes | "x-following-scraper" |

twitterHandles | string[] | Yes | Usernames or profile URLs |

maxResults | integer | No | Max accounts per profile (default: 100) |

loginCookies | string | Yes | "auth_token=xxx; ct0=yyy" |

hydrateFullUser | boolean | No | Default false. Set true to enrich each returned user with canHighlightTweets, verifiedSince, and the full professional object via a follow-up UserByScreenName call. |

Input Example

Output Fields

Same user profile fields as Mode 2 (Profile Scraper), plus:

| Field | Type | Description | Example |

|---|---|---|---|

followingOf | string | Source profile | "apify" |

Use Cases

- Network mapping: Understand which accounts key players follow

- Influencer research: Discover who influencers are learning from

- Competitive intelligence: See which accounts your competitors follow

- Lead generation: Find prospects through shared-following analysis

How to Run

Python:

Mode 10: Data Extractor (x-data-extractor)

Lightweight tweet scraper for extracting data from tweet URLs. Same output as Mode 1. No login cookies required.

Pricing tip: batch ≥3 tweets per run for best MCP pricing. AI agents using MCP should prefer multi-tweet requests when possible — the per-run startup overhead is amortized across batched items.

Input Example

Output Fields

Same tweet fields as Mode 1 (Post Scraper).

Advanced Modes

Two additional scrapeMode values are exposed for power users and don't appear in the main mode table above:

x-scraper — All-in-One Auto-Router

Auto-detects URL type per item in startUrls and dispatches to the appropriate handler. Use this when you have mixed inputs (tweet URLs + profile URLs + list URLs in one batch). Each output item carries a type field (tweet or user) and its own input back-reference so you can correlate.

Optional opt-in flag (Day-22, 2026-05-18, build 0.1.118): "flattenAuthor": true copies the nested author.* fields on tweet items to top-level keys for drop-in compatibility with automation-lab/twitter-scraper's flat schema. Adds: authorUserName, authorName, authorFollowers, authorFollowing, authorIsBlueVerified, authorIsVerified — alongside the existing nested author object (pure additive, no breaking change). Only takes effect on x-scraper mode; other modes have canonical emit shapes that are NOT changed by this flag.

x-user-search-scraper — Search Users by Keyword

Uses X's SearchTimeline with product=People to return user profiles matching a search term. Cookies required. Accepts the optional hydrateFullUser flag to enrich each result with fields X trims on search endpoints (canHighlightTweets, verifiedSince, full professional).

Output is the same user object as Mode 2 (Profile Scraper). With hydrateFullUser: true the scraper makes an extra UserByScreenName call per returned user, so cost scales with the result count — use when you need the full profile shape, leave off (default) for lightweight enumeration.

Error Items

When an input can't be resolved to a real item, the scraper emits a structured {type:"error", ...} record instead of silently dropping it. Three error codes are emitted today:

NOT_FOUND — account or tweet deleted/suspended

For profile-consuming modes (profile, timeline, follower, following, all-in-one): the handle is shaped correctly but X says no such account exists (deleted, suspended, or never existed).

For tweet-consuming modes (post, data-extractor, all-in-one): the tweet URL/ID references a tweet that no longer exists on X.

INVALID_HANDLE — input failed validation before any network call

The input never resembled a scrapable X handle. X handles are at most 15 characters and only contain letters, digits, and underscores. The message field tells you why:

Message values you may see:

HANDLE_TOO_LONG (N chars; X handles max 15)INVALID_CHARACTERS (X handles allow A-Z, a-z, 0-9, underscore only)INVALID_URL_FORMAT (expected https://x.com/<handle> …)EMPTY_INPUT

INVALID_HANDLE error items only fire for profile-consuming modes (profile, timeline, follower, following, all-in-one). They let you distinguish typos from deleted accounts in downstream workflows.

Non-error item types

Beyond type: "tweet" | "user" | "comment" | "error", two control-plane item types are emitted in specific situations:

type | When it fires | Payload |

|---|---|---|

dry-run | You set dryRun: true on input | {type, scrapeMode, inputs: {profiles, tweetIds, listIds, searchQueries, activeCookies, proxyTier, cookiePoolSize}, inputProblems} — no scraping happened |

partial-summary | Graceful shutdown (SIGTERM / actor abort / migration) interrupted the run mid-batch | {type, reason, mode, durationSecs, stats, schemaVersion} — carries the counters collected before the interrupt |

Both are harmless for normal dataset consumers — filter them the same way you filter error items: type === "tweet" | "user" | "comment".

SHADOW_BANNED — tweet or account is restricted / limited-visibility

X distinguishes between deleted tweets (HTTP 404 → NOT_FOUND) and tweets that are still public but intentionally hidden — either because of a visibility limit or because the posting account has been restricted. When X returns a 403 with a message mentioning "restricted", "suspended", "limited", or "visibility", the scraper emits a SHADOW_BANNED item so you can treat these cases differently from genuine deletions.

RATE_LIMITED, DOC_ID_ROTATED, FORMAT_CHANGE, BLOCKED, AUTH_EXPIRED — operational telemetry

Persistent operational failures surface once per run as structured error items so downstream workflows (n8n, Zapier, a monitoring dashboard) can react. These mirror the errorsByCategory section of the run summary:

| Code | Meaning | Typical fix |

|---|---|---|

RATE_LIMITED | Hit X's rate-limit window. The scraper already respects the x-rate-limit-reset header internally; the item appears only when the run genuinely exhausted the window. | Rerun after the reset window, or split the batch across multiple schedules. |

DOC_ID_ROTATED | X rotated a GraphQL queryId. The scraper auto-resolves the new ID for the current run — this error item signals a PR is needed to update src/utils/constants.js. | Run tools/rotate-doc-ids.js or the drift-detection CI that opens an issue automatically. |

FORMAT_CHANGE | A parser couldn't read a key it expected. Rare; usually means X shipped a schema change. | Report via a GitHub issue with a sample input. |

BLOCKED | X returned a 403 unrelated to visibility (e.g. account flagged). | Rotate cookies or cool down the source. |

AUTH_EXPIRED | Current cookie was invalidated mid-run (single-cookie setups). | Refresh X_LOGIN_COOKIES. For multi-cookie pools, see COOKIE_EXHAUSTED. |

COOKIE_EXHAUSTED — every cookie in the pool burned mid-run

Fires at most once per run. When authenticated modes are in use and X invalidates your auth_token (cookie burn), the scraper rotates to the next cookie in X_LOGIN_COOKIES (separated by |||). If every pooled cookie is burned during the same run, a single COOKIE_EXHAUSTED item is pushed at the end so downstream workflows can alert on it.

This is distinct from the startup cookie-health check — COOKIE_EXHAUSTED means cookies became invalid mid-run, not that they were bad going in. A single-cookie setup bypasses rotation entirely and will simply fail authenticated calls with the usual AUTH_EXPIRED error category.

Reacting to errors downstream

Output Controls (optional)

Sparse output — fields

Pass an array of top-level keys to keep only those in every output item. Empty (default) returns the full object. Useful when exporting to Google Sheets, HubSpot, or CSV — smaller payloads, less downstream parsing.

Always preserved regardless of the allow-list: type, input, error, message, username, searchTerm, followerOf, followingOf. That way error items are never silently truncated.

Incremental scraping — onlyIfChangedSince

Skip items that are already in a prior dataset or are older than a given timestamp. Huge cost-saver for hourly monitoring.

Accepts:

{ "since": "2026-04-20T00:00:00Z" }— drop items older than the cutoff{ "dataset": "DATASET_ID" }— read tweetIds + latest createdAt from a prior dataset and filter against both{ "since": "...", "dataset": "..." }— combine; the newer cutoff wins

Completion webhook — completionWebhookUrl

POST the run summary to your own endpoint when the run finishes. Non-blocking; failures are logged but never fail the run.

Payload:

Structured JSON logs — jsonLogs: true

Emit newline-delimited JSON to stdout (one event per line) instead of the default free-text logs. Drops directly into Cloudwatch / Datadog / Loki without regex parsing.

Sample line:

Per-input ledger — emitPerInputLedger: true

When enabled, the run summary carries a perInput array with each input's outcome — great for batches where you scrape hundreds of handles and need to know exactly which ones succeeded.

Ledger caps at 500 entries; overflow is reported as summary.perInputOverflow. The ledger lives on the run's summary key-value record — read it via await Actor.openKeyValueStore().then(s => s.getValue('summary')) or from the Apify run UI.

Dry run — dryRun: true

Validate inputs, check cookies, resolve doc IDs, emit a single {type:"dry-run", ...} item, then exit WITHOUT any network scraping. Pre-production smoke test / CI validation.

Bulk CSV input — inputCsv

Provide an HTTPS URL of a CSV file. Each non-empty row is auto-routed to the matching input array:

| Row shape | Routes to |

|---|---|

https://x.com/<user>/status/<id> | tweetURLs |

https://x.com/<user> or @user or user | profiles |

| Anything else | searchQueries |

Header row is auto-detected (any of username, handle, tweet_url, profile_url, query, search, url). Lets you batch thousands of handles without hitting Apify's input-size limit.

Cross-run cache — useCrossRunCache

Opt-in 24 h KV cache for tweets + profiles. When enabled, scheduled/polling runs that re-scrape the same URLs within 24 h serve from cache instead of hitting X again.

- Store name:

x-scraper-xrun-cache(auto-created per actor). - TTL: 24 hours, enforced on read.

- Cache key:

tweet:<tweetId>/profile:<handle>. - Keys are case-insensitive for handles.

- Summary logged at end of run:

Cross-run cache: hits=2 misses=0 writes=0. - Default off — no behaviour change for existing callers.

Great fit for:

- Hourly brand-monitoring runs

- Lead-enrichment pipelines that revisit the same profiles

- Dashboard refresh jobs

Not useful for one-off backfills or queries that need fresh engagement numbers.

Engagement Fields: null vs 0

All engagement fields (likeCount, retweetCount, viewCount, replyCount, quoteCount, bookmarkCount) follow a strict convention:

null— the field is not applicable for this content type. For example, retweets don't carry independent engagement counts, and pre-2023 tweets have no view tracking. A null value means the data genuinely does not exist.0— the platform reported zero engagement. The tweet was seen/liked/retweeted exactly zero times.

This prevents false zeros from polluting your data. If a field is null, the engagement data was unavailable — not zero. Never treat null and 0 as equivalent.

Query Wizard

Build complex search queries without learning advanced syntax. These fields auto-append to your search queries:

| Field | What it does | Equivalent syntax |

|---|---|---|

| From User | Tweets from specific user | from:username |

| In Reply To | Replies to specific user | to:username |

| Mentioning User | Mentions of user | @username |

| Start Date | Tweets after date | since:YYYY-MM-DD |

| End Date | Tweets before date | until:YYYY-MM-DD |

| Minimum Likes | Min like threshold | min_faves:N |

| Minimum Retweets | Min RT threshold | min_retweets:N |

| Minimum Replies | Min reply threshold | min_replies:N |

| Language Filter | Filter by language | lang:xx |

| Only Verified | Verified users only | filter:verified |

| Only Images | Tweets with attached photos (strict — card-only matches are dropped) | filter:images |

| Only Videos | Tweets with attached videos or GIFs (strict — card-only matches are dropped) | filter:videos |

| Only Quotes | Quote tweets only | filter:quote |

| Geotagged Near | Location filter | near:"city" |

| Within Radius | Radius from location | within:10km |

Advanced Search Syntax

Combine operators: from:NASA #space since:2024-01-01 min_faves:100 lang:en -filter:retweets

| Operator | Example | Description |

|---|---|---|

from:user | from:NASA | Tweets from specific user |

to:user | to:support | Replies to specific user |

@user | @OpenAI | Mentions of specific user |

#hashtag | #AI | Tweets with hashtag |

$cashtag | $TSLA | Tweets with cashtag |

since:date | since:2024-01-01 | Tweets after date |

until:date | until:2024-12-31 | Tweets before date |

min_faves:N | min_faves:100 | Minimum likes |

min_retweets:N | min_retweets:50 | Minimum retweets |

lang:xx | lang:en | Filter by language |

filter:media | filter:media | Only tweets with media |

filter:images | filter:images | Only tweets with images |

filter:videos | filter:videos | Only tweets with videos |

filter:verified | filter:verified | Only verified users |

filter:blue_verified | filter:blue_verified | Only X Premium users |

-filter:retweets | -filter:retweets | Exclude retweets |

-filter:replies | -filter:replies | Exclude replies |

filter:quote | filter:quote | Only quote tweets |

conversation_id:ID | conversation_id:123 | Thread replies |

near:"city" | near:"San Francisco" | Geotagged tweets |

within:radius | within:10km | Within radius |

Technical Details

- Stack: Node.js 20, Apify SDK 3, Impit (Chrome TLS fingerprinting)

- Memory: 128 MB (HTTP-only, no browser) — peak RSS ~50–85 MB in typical runs

- Speed: ~5–25 seconds per run depending on mode, max 5000 results per run

- Residential proxy (Evomi) included on authenticated modes by default; unauthenticated modes (post, profile, extractor) auto-route via datacenter IPs to save egress

- Active cookie rotation —

X_LOGIN_COOKIEScan contain multiple|||-separated cookies; the scraper cycles to the next entry when X burns the current one mid-run, and emits a singleCOOKIE_EXHAUSTEDitem when every entry is burned - In-run + cross-run request caches — duplicates in the same batch reuse in-flight promises; with

useCrossRunCache: true, parsed tweets + profiles are cached in a named KV store for 24 h so scheduled polling runs skip re-scraping fresh data - Rate-limit aware — honours

x-rate-limit-reseton 429 and waits the server-requested duration (capped at 60 s per retry) - Self-healing doc IDs — on

DOC_ID_ROTATED, the scraper fetches x.com's main JS bundle, resolves the newqueryIdfor the failing operation, and retries once this run. Weekly GitHub Action (x-bundle-drift.yml) opens an issue when drift is detected - Optional deep hydration —

hydrateFullUser: trueon follower / following / user-search modes enriches each returned user with Q10 fields X trims on list endpoints - Sparse output — pass

fields: ["tweetId","text","likeCount"]to emit only the specified keys (type + input + error fields are always preserved) - Resume capability via

resumeFromDataset(skip already-scraped items from a prior dataset) - Custom output via

customMapFunction - Progress log — runs emitting >25 results print a per-batch status line so long runs don't look silent

- Parser regression suite —

npm testruns 61 tests (53 unit tests against hand-crafted X response fixtures + 8 integration tests replaying a recorded live GraphQL response); every field fix shipped through every audit is pinned down by at least one test, including the 9 Tier 1 + Tier 2 field additions in build 0.1.73 - Field accuracy: every mode ≥95% null-aware field-fill against Chrome ground truth (last verified 2026-04-25 against 60 our-runs + 22 competitor benchmark runs on build 0.1.71; build 0.1.73 then added 9 additive fields without changing parser correctness — see docs/audit/2026-04-25-x-competitive-analysis.md). Parser live-sample corpus in docs/audit/q1-q10-live-samples.json tracks 6/10 Q1–Q10 enrichment fields with organically-sourced URLs

- Mutation testing:

npm run test:mutateruns Stryker against the parsers + helpers to grade how well the test suite would catch real bugs. Surviving mutants are bug templates; target trajectory is ≥75% mutation score across the parsers - Predeploy gate:

apify pushrunsnpm run check:modes && npm run test:parsers && npm run check:drift— catches mode-consistency regressions, parser regressions, and X bundle drift before a build ships - Gzip-compressed POST bodies on authenticated GraphQL requests when the payload shrinks ≥10% — cuts upload bandwidth for search/follower/timeline runs

- Graceful shutdown: SIGTERM / abort / migrate events trip the circuit breaker, flush caches, and emit a

{type:"partial-summary", reason, mode, durationSecs, stats, schemaVersion}item before exit — you never lose accumulated state to a timeout - Text normalisation: every string field exposed to the user (

text,description, etc.) is run through an HTML-entity decoder (named + numeric entities) and a control-character stripper before emission. Newlines and emoji are preserved. Pasting output into Google Sheets / HubSpot / a CSV no longer leaves stray&or invisible zero-width characters - Cross-run guest-token cache: for unauthenticated modes, the IP-bound guest token is reused from a 4-hour KV cache across runs — saves the ~500 ms activation round-trip on every subsequent run that hits the same Evomi exit IP. Transparent: the underlying token still rotates per X's TTL

- Lazy module loading: the cross-run cache module is only imported when

useCrossRunCache: trueis set, trimming a few milliseconds off cold-start for the typical run that doesn't enable it

Troubleshooting

Getting no results?

- Check that your search query isn't too restrictive

- Try

sort: Topinstead ofLatest— X sometimes returns fewer results with Latest - Remove

until:date filters if getting low counts

Authentication errors?

- Your cookies may have expired. Get fresh

auth_tokenandct0from x.com - Both

auth_tokenandct0are required - Format:

auth_token=YOUR_TOKEN; ct0=YOUR_CT0

Missing tweets?

- Some tweets are shadow-banned or filtered by X. This is outside our control.

- Try different date ranges or search terms

Want to resume a failed run?

- Copy the dataset ID from the previous run

- Add it to

resumeFromDataset— already-scraped items will be skipped

Support

Found a bug or need help? Open an issue on the Issues tab.