Pay $3.50 for 1,000 posts

Twitter Scraper

Pay $3.50 for 1,000 posts

Scrape tweets from any Twitter user profile. Top Twitter API alternative to scrape Twitter hashtags, threads, replies, followers, images, videos, statistics, and Twitter history. Export scraped data, run the scraper via API, schedule and monitor runs or integrate with other tools.

ℹ️ This Twitter scraper only collects data that’s publicly available. This means data that’s accessible without logging in to Twitter and without accepting Twitter’s terms of use. Please note that if you accepted Twitter’s terms of use, your ability to scrape Twitter data may be limited. If that’s the case, please review the terms and make an informed decision yourself.

⚠️ This scraper cannot scrape the latest tweets, only the most liked ones. To scrape the latest tweets, try other Twitter scrapers in Apify Store, for example: Twitter Scraper 🔗 or 🏯 Tweet Scraper V2 🔗.

🐦 What data can Twitter Scraper extract?

Twitter Scraper crawls specified Twitter profiles and Twitter post URLs, and extracts:

🔍 User tweets: tweet text, timestamp and URL

🏞 Tweet media: images, videos, gifs, hashtags

🦸♂️ Tweet author data: screen name, follower/following count, description, verified status, user mentions

📊 Statistics for each tweet: favorites, replies, and retweets for each tweet

What are the limitations of Twitter Scraper?

Due to the recent changes on Twitter, there are new limitations when it comes to viewing and therefore scraping tweets on this platform:

- The Actor extracts only publicly available information

- For each profile, it is possible to scrape up to 100 tweets

- This scraper cannot scrape the latest tweets due to the changes on Twitter website

- Instead, the scraped tweets are ordered by the number of likes (see the output sample)

Keep in mind that this might affect your experience if you're trying to scrape an older tweet or follow a chronological order of their posts. If these limitations are a deal breaker for you, we recommend switching to other Twitter scrapers in Apify Store, for example: Twitter Scraper 🔗 or Twitter Profile Scraper 🔗.

📚 How do I use Twitter Scraper?

It’s quite easy: pick Twitter Scraper, choose a profile or a tweet to scrape, and click the Start button. If you need guidance on how to run the scraper, you can read our step-by-step tutorial or watch a short video tutorial ▷ on YouTube.

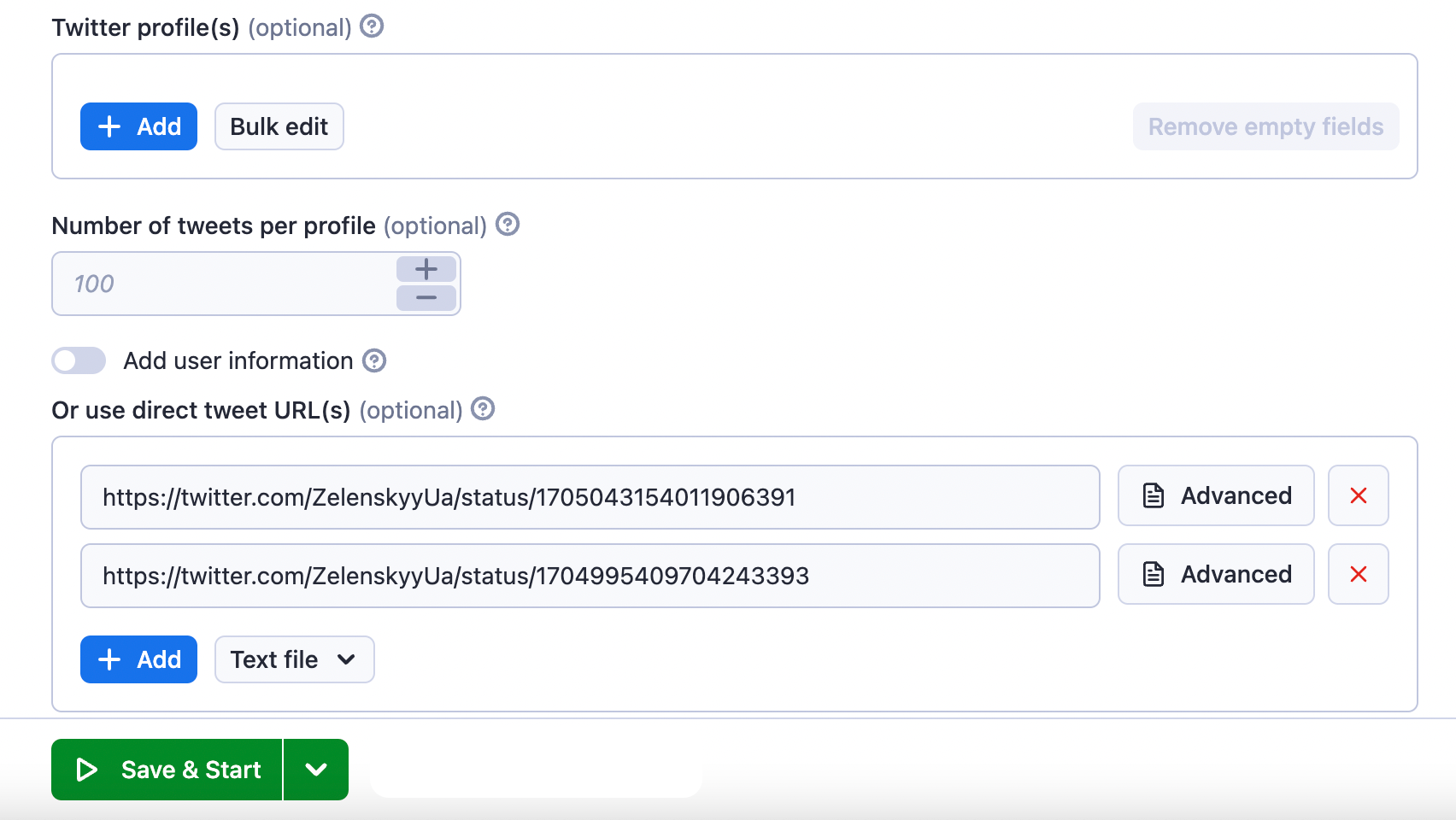

⬇️ Input example

You can scrape Twitter either by using Twitter usernames (handles) or by Tweet URLs. If you want to use the URL option, these are the supported Twitter URL types ⬇️

- Profiles: https://twitter.com/ZelenskyyUa

- Tweets: https://twitter.com/ZelenskyyUa/status/1694240409428299869

This is what the input options of the Twitter Scraper look like:

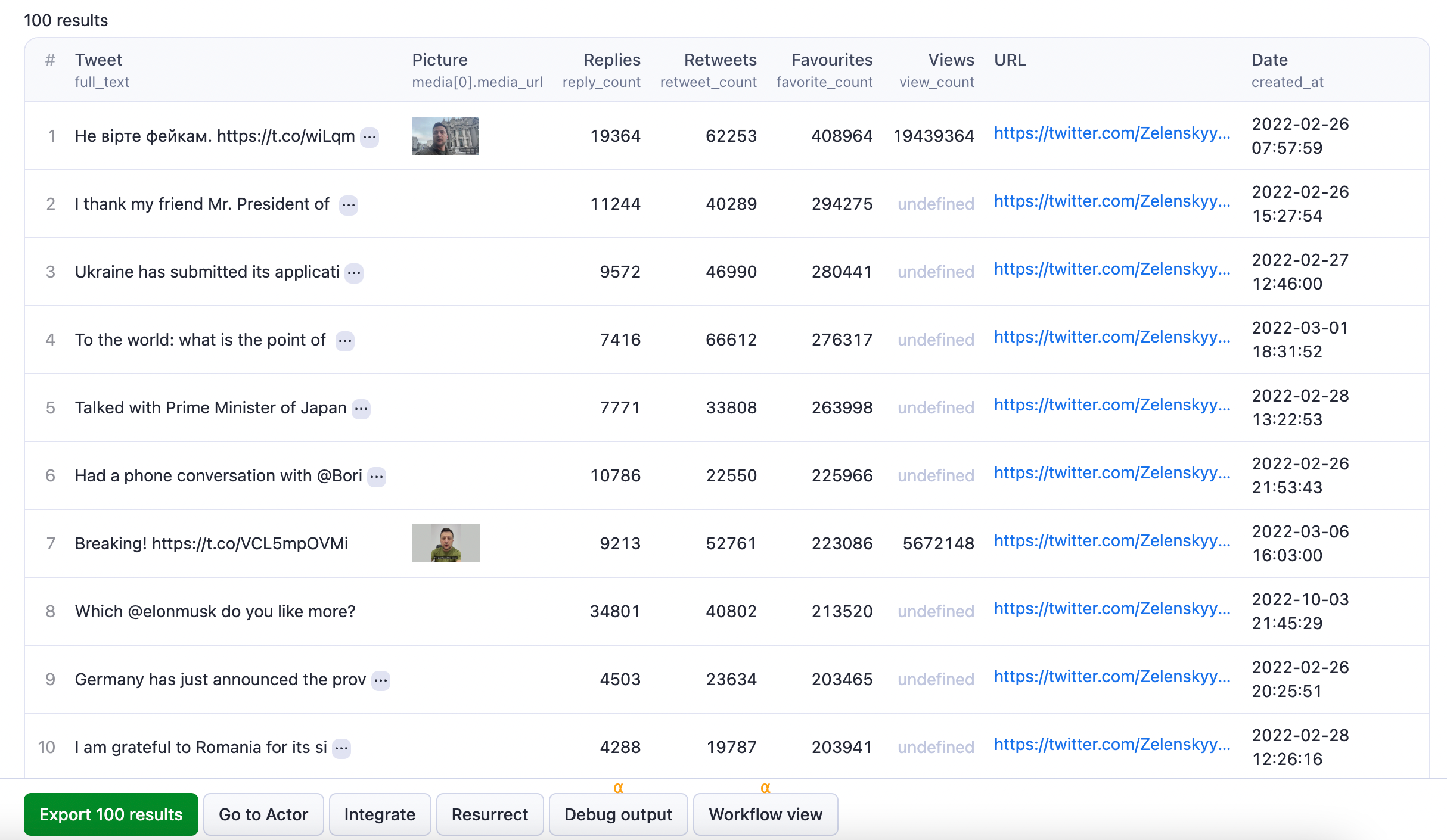

⬆️ Twitter data output

The results will be wrapped into a dataset which you can find in the Storage tab. Here's an excerpt from the dataset you'd get if you apply the input parameters above:

You can download the resulting dataset in various formats such as JSON, HTML, CSV or Excel. Each item in the dataset will contain a separate tweet following this format:

1[ 2 { 3 "user": { 4 "created_at": "2019-04-23T10:21:15.000Z", 5 "default_profile_image": false, 6 "description": "Президент України", 7 "fast_followers_count": 0, 8 "favourites_count": 46, 9 "followers_count": 7385672, 10 "friends_count": 1, 11 "has_custom_timelines": true, 12 "is_translator": false, 13 "listed_count": 17521, 14 "location": "Україна", 15 "media_count": 1541, 16 "name": "Володимир Зеленський", 17 "normal_followers_count": 7385672, 18 "possibly_sensitive": false, 19 "profile_banner_url": "<https://pbs.twimg.com/profile_banners/1120633726478823425/1692773060>", 20 "profile_image_url_https": "<https://pbs.twimg.com/profile_images/1585550046740848642/OpGKpqx9_normal.jpg>", 21 "screen_name": "ZelenskyyUa", 22 "statuses_count": 3968, 23 "translator_type": "none", 24 "url": "<https://t.co/ctVL0atMBQ>", 25 "verified": false, 26 "verified_type": "Government", 27 "withheld_in_countries": [], 28 "id_str": "1120633726478823425" 29 }, 30 "id": "1497450853380280320", 31 "conversation_id": "1497450853380280320", 32 "full_text": "Не вірте фейкам. <https://t.co/wiLqmCuz1p>", 33 "reply_count": 19364, 34 "retweet_count": 62253, 35 "favorite_count": 408964, 36 "hashtags": [], 37 "symbols": [], 38 "user_mentions": [], 39 "urls": [], 40 "media": [ 41 { 42 "media_url": "<https://pbs.twimg.com/ext_tw_video_thumb/1497450795532439554/pu/img/b7JxO8OAXonSfaHv.jpg>", 43 "type": "video", 44 "video_url": "<https://video.twimg.com/ext_tw_video/1497450795532439554/pu/vid/848x480/dxXxEuM6ZGNLRPjZ.mp4?tag=12>" 45 } 46 ], 47 "url": "<https://twitter.com/ZelenskyyUa/status/1497450853380280320>", 48 "created_at": "2022-02-26T05:57:59.000Z", 49 "view_count": 19439365, 50 "quote_count": 12199, 51 "is_quote_tweet": false, 52 "is_retweet": false, 53 "is_pinned": false, 54 "is_truncated": false, 55 "startUrl": "<https://twitter.com/ZelenskyyUa/with_replies>" 56 }, 57 { 58 "user": { 59 "created_at": "2019-04-23T10:21:15.000Z", 60 "default_profile_image": false, 61 "description": "Президент України", 62 "fast_followers_count": 0, 63 "favourites_count": 46, 64 "followers_count": 7385672, 65 "friends_count": 1, 66 "has_custom_timelines": true, 67 "is_translator": false, 68 "listed_count": 17521, 69 "location": "Україна", 70 "media_count": 1541, 71 "name": "Володимир Зеленський", 72 "normal_followers_count": 7385672, 73 "possibly_sensitive": false, 74 "profile_banner_url": "<https://pbs.twimg.com/profile_banners/1120633726478823425/1692773060>", 75 "profile_image_url_https": "<https://pbs.twimg.com/profile_images/1585550046740848642/OpGKpqx9_normal.jpg>", 76 "screen_name": "ZelenskyyUa", 77 "statuses_count": 3968, 78 "translator_type": "none", 79 "url": "<https://t.co/ctVL0atMBQ>", 80 "verified": false, 81 "verified_type": "Government", 82 "withheld_in_countries": [], 83 "id_str": "1120633726478823425" 84 }, 85 "id": "1497564078897774598", 86 "conversation_id": "1497564078897774598", 87 "full_text": "I thank my friend Mr. President of 🇹🇷 @RTErdogan and the people of 🇹🇷 for their strong support. The ban on the passage of 🇷🇺 warships to the Black Sea and significant military and humanitarian support for 🇺🇦 are extremely important today. The people of 🇺🇦 will never forget that!", 88 "reply_count": 11244, 89 "retweet_count": 40289, 90 "favorite_count": 294263, 91 "hashtags": [], 92 "symbols": [], 93 "user_mentions": [ 94 { 95 "id_str": "68034431", 96 "name": "Recep Tayyip Erdoğan", 97 "screen_name": "RTErdogan", 98 "profile": "<https://twitter.com/RTErdogan>" 99 } 100 ], 101 "urls": [], 102 "media": [], 103 "url": "<https://twitter.com/ZelenskyyUa/status/1497564078897774598>", 104 "created_at": "2022-02-26T13:27:54.000Z", 105 "quote_count": 8290, 106 "is_quote_tweet": false, 107 "is_retweet": false, 108 "is_pinned": false, 109 "is_truncated": false, 110 "startUrl": "<https://twitter.com/ZelenskyyUa/with_replies>" 111 }, 112 { 113 "user": { 114 "created_at": "2019-04-23T10:21:15.000Z", 115 "default_profile_image": false, 116 "description": "Президент України", 117 "fast_followers_count": 0, 118 "favourites_count": 46, 119 "followers_count": 7385672, 120 "friends_count": 1, 121 "has_custom_timelines": true, 122 "is_translator": false, 123 "listed_count": 17521, 124 "location": "Україна", 125 "media_count": 1541, 126 "name": "Володимир Зеленський", 127 "normal_followers_count": 7385672, 128 "possibly_sensitive": false, 129 "profile_banner_url": "<https://pbs.twimg.com/profile_banners/1120633726478823425/1692773060>", 130 "profile_image_url_https": "<https://pbs.twimg.com/profile_images/1585550046740848642/OpGKpqx9_normal.jpg>", 131 "screen_name": "ZelenskyyUa", 132 "statuses_count": 3968, 133 "translator_type": "none", 134 "url": "<https://t.co/ctVL0atMBQ>", 135 "verified": false, 136 "verified_type": "Government", 137 "withheld_in_countries": [], 138 "id_str": "1120633726478823425" 139 }, 140 "id": "1497885721931268103", 141 "conversation_id": "1497885721931268103", 142 "full_text": "Ukraine has submitted its application against Russia to the ICJ. Russia must be held accountable for manipulating the notion of genocide to justify aggression. We request an urgent decision ordering Russia to cease military activity now and expect trials to start next week.", 143 "reply_count": 9572, 144 "retweet_count": 46990, 145 "favorite_count": 280441, 146 "hashtags": [], 147 "symbols": [], 148 "user_mentions": [], 149 "urls": [], 150 "media": [], 151 "url": "<https://twitter.com/ZelenskyyUa/status/1497885721931268103>", 152 "created_at": "2022-02-27T10:46:00.000Z", 153 "quote_count": 2687, 154 "is_quote_tweet": false, 155 "is_retweet": false, 156 "is_pinned": false, 157 "is_truncated": false, 158 "startUrl": "<https://twitter.com/ZelenskyyUa/with_replies>" 159 }, 160...

🔍 Want more tools for scraping Twitter?

Use our fast dedicated scrapers if you want to scrape specific Twitter data. Each of them is built particularly for the relevant Twitter scraping case be it URLs, videos, spaces or user profiles. Feel free to browse them:

| 🅇 X Twitter | 🐦 Twitter Scraper | 📱 Tweet Scraper |

| 🔗 Twitter URL Scraper | 🎥 Twitter Video Downloader | ❌ Best Twitter Scraper |

| 🧞♂️ Twitter Profile Scraper | 🛰️ Twitter Spaces Scraper | 🧭 Twitter Explorer |

❓ FAQ

Is it legal to scrape Twitter?

It is legal to scrape Twitter to extract publicly available information, but you should be aware that the data extracted might contain personal data. Personal data is protected by GDPR in the European Union and by other regulations around the world. You should not scrape personal data unless you have a legitimate reason to do so. If you're unsure whether your reason is legitimate, consult your lawyers. You can also read our blog post on the legality of web scraping.

How many results can you scrape with Twitter scraper?

Twitter is currently limiting the tweets per profile to 100 tweets (as of August 2023).

Why use Twitter Scraper?

Scraping Twitter will give you access to more than 500 million tweets posted every day. You can use that data in lots of different ways:

🔍 Track discussions about your brand, products, country, or city.

👥 Monitor your competitors and see how popular they really are, and how you can get a competitive edge.

🔎 Keep an eye on new trends, attitudes, and fashions as they emerge.

🧠 Use the data to train AI models or for academic research.

😊 Track sentiment to make sure your investments are protected.

🚫 Fight fake news by understanding the pattern of how misinformation spreads.

✈️ Explore discussions about travel destinations, services, and amenities, and take advantage of local knowledge.

💰 Analyze consumer habits and develop new products or target underdeveloped niches.

Not your cup of tea? Build your own scraper

Twitter scraper doesn’t exactly do what you need? You can always build your own! We have various scraper templates in Python, JavaScript, and TypeScript to get you started. Alternatively, you can write it from scratch using our open-source library Crawlee. You can keep the scraper to yourself or make it public by adding it to Apify Store (and find users for it).

Or let us know if you need a custom scraping solution.

Can I integrate Twitter Scraper with other apps?

Last but not least, Twitter Scraper can be connected with almost any cloud service or web app thanks to integrations on the Apify platform. You can integrate with Make, Zapier, Slack, Airbyte, GitHub, Google Sheets, Google Drive, and more. Or you can use webhooks to carry out an action whenever an event occurs, e.g. get a notification whenever Twitter Scraper successfully finishes a run.

Can I use API with Twitter Scraper?

Yes. You can use the Apify API which gives you programmatic access to the Apify platform. The API is organized around RESTful HTTP endpoints that enable you to manage, schedule, and run Apify actors. The API also lets you access any datasets, monitor actor performance, fetch results, create and update versions, and more.

To access the API using Node.js, use the apify-client NPM package. To access the API using Python, use the apify-client PyPI package.

Check out the Apify API reference docs for full details or click on the API tab for code examples.

Your feedback

We’re always working on improving the performance of our Actors. So if you’ve got any technical feedback for Twitter Scraper or simply found a bug, please create an issue on the Actor’s Issues tab in Apify Console.

- 1.2k monthly users

- 38.1% runs succeeded

- 6.9 days response time

- Created in Jun 2019

- Modified 17 days ago

Quacker

Quacker