Commit Historian Agent

Pricing: Pay per event


Rating: 0.0 (0 ratings)

Developer: Josef Procházka

Maintained by Community

Actor stats: 0 bookmarked, 2 total users, 1 monthly active user

Last modified: a year ago


Commit Historian Agent

A simple tool that helps analyze the commits of a GitHub repository. It checks out the repository, gets all relevant commit messages, and uses OpenAI to answer the user's questions about them. This is done through the PydanticAI framework.
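The README does not say how the repository is checked out or how commit messages are read. Here is a minimal sketch, assuming a plain `git clone` followed by `git log` with a machine-readable format string. The helper names (`clone_repository`, `read_commits`, `parse_log`) and the `Commit` type are hypothetical, not taken from the Actor's code.

```python
import subprocess
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional

# ASCII unit separator (0x1F) between fields and record separator (0x1E)
# after each commit; both practically never occur in commit messages.
FIELD_SEP = "\x1f"
RECORD_SEP = "\x1e"
# git pretty-format placeholders: %H full hash, %cI committer date (strict
# ISO 8601), %s subject, %b body; %x1f / %x1e emit the separator bytes.
LOG_FORMAT = "%H%x1f%cI%x1f%s%x1f%b%x1e"


@dataclass
class Commit:
    sha: str
    committed_at: datetime
    subject: str
    body: str


def clone_repository(repository: str, target_dir: str,
                     branch: Optional[str] = None) -> None:
    """Clone github.com/<owner>/<name>; without `branch`, git uses the default branch."""
    cmd = ["git", "clone", "--single-branch"]
    if branch:
        cmd += ["--branch", branch]
    cmd += [f"https://github.com/{repository}.git", target_dir]
    subprocess.run(cmd, check=True, capture_output=True)


def parse_log(raw: str) -> List[Commit]:
    """Split `git log` output produced with LOG_FORMAT into Commit objects."""
    commits = []
    for record in raw.split(RECORD_SEP):
        record = record.strip("\n")  # git puts a newline between entries
        if not record:
            continue
        sha, committed, subject, body = record.split(FIELD_SEP, 3)
        commits.append(Commit(sha, datetime.fromisoformat(committed),
                              subject, body.strip()))
    return commits


def read_commits(repo_dir: str, since: str, until: str) -> List[Commit]:
    """Commits whose committer date falls between `since` and `until`."""
    result = subprocess.run(
        ["git", "-C", repo_dir, "log", f"--since={since}", f"--until={until}",
         f"--pretty=format:{LOG_FORMAT}"],
        check=True, capture_output=True, text=True,
    )
    return parse_log(result.stdout)
```

For example, `clone_repository("apify/crawlee-python", "/tmp/crawlee-python")` followed by `read_commits("/tmp/crawlee-python", "2024-10-01", "2024-10-31")` would list one month of commits. The clone is not shallow, because `git log --since` needs the history for that period.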

How to run it

You can pick this Actor from the Apify Store and run it on the Apify platform.

Enter the repository name and your question, then start the Actor. Optionally, you can choose a specific branch if your question does not concern the repository's default branch.

If you do not provide your own OpenAI API key, the Actor uses our API key, which adds to the cost of running the Actor. Passing your own OpenAI API key significantly reduces the Actor run costs.
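The inputs described above can be assembled and checked before starting a run. Here is a minimal sketch: only the `prompt` and `repository` field names come from the example in this README. The `branch` and `openai_api_key` names, like the `build_run_input` helper itself, are hypothetical placeholders for the optional inputs.

```python
import json
import re
from typing import Optional

# Repository in "owner/name" form, e.g. "apify/crawlee-python".
REPO_PATTERN = re.compile(r"^[A-Za-z0-9_.-]+/[A-Za-z0-9_.-]+$")


def build_run_input(prompt: str, repository: str,
                    branch: Optional[str] = None,
                    openai_api_key: Optional[str] = None) -> dict:
    """Assemble an Actor input dict (optional field names are hypothetical)."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if not REPO_PATTERN.match(repository):
        raise ValueError(f"expected 'owner/name', got {repository!r}")
    run_input = {"prompt": prompt, "repository": repository}
    if branch:  # omit to analyze the repository's default branch
        run_input["branch"] = branch
    if openai_api_key:  # omit to fall back to the Actor's key (higher run cost)
        run_input["openai_api_key"] = openai_api_key
    return run_input


if __name__ == "__main__":
    print(json.dumps(build_run_input(
        "Show several most complicated changes done last month.",
        "apify/crawlee-python"), indent=2))
```

Leaving the optional fields out entirely, rather than sending empty strings, mirrors the README's behavior: no branch means the default branch, and no key means the Actor's own key.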

Example

Inputs:

prompt: Show several most complicated changes done last month.

repository: apify/crawlee-python

Result:

Here are some of the most complicated changes from last month in the apify/crawlee-python repository:

- Status Code Handling Update: This refactor removed parameters and methods related to HTTP error status codes from the HTTP clients, moved that logic to a different class, and updated the tests to ensure proper handling of session-blocking status codes and of error codes that require retries. It was a significant change because it affected multiple components such as Session, SessionPool, PlaywrightCrawler, and HttpCrawler (details here).

- Session Cookie Management: Cookie handling in a session changed from a plain dictionary to a more sophisticated SessionCookies class built on CookieJar. It supports basic cookie parameters and multiple domains, which required extensive test updates and support for multi-domain scenarios (details here).

- Fingerprint Integration: The browserforge package was integrated to enable fingerprint and header generation in PlaywrightCrawler. This adds significant functionality, improving the crawling process with generated fingerprints (details here).

These complex changes involved substantial modifications to multiple parts of the codebase, including handling complex data structures, refactoring logic spread across different modules, and careful testing to ensure stability.

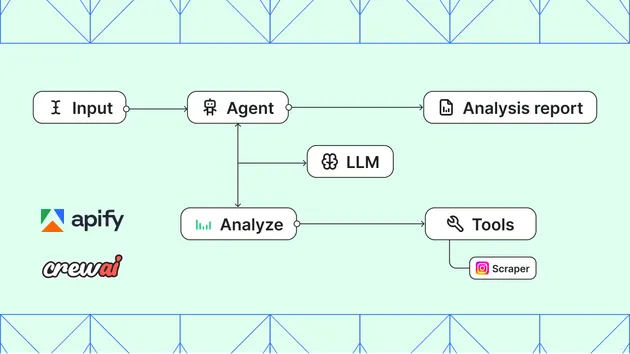

How does it work

This Actor defines one main AI agent that is responsible for processing the prompt and returning the desired output. It uses one tool that gets the commit summaries for the main agent.
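PydanticAI runs the agent/tool loop internally: the model decides when to call a registered tool, sees its return value, and then produces the answer. The library-free sketch below only illustrates that control flow. The model is a stand-in callable, and `run_main_agent`, `ToolCall`, `FinalAnswer` and the tool name `get_commit_summaries` are hypothetical, not PydanticAI's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple, Union


@dataclass
class ToolCall:
    name: str      # which registered tool the model wants to run
    argument: str  # what it passes to that tool


@dataclass
class FinalAnswer:
    text: str


Message = Tuple[str, str]  # (role, content)
# Stand-in for the LLM: looks at the conversation so far and either requests
# a tool call or returns the final answer.
Model = Callable[[List[Message]], Union[ToolCall, FinalAnswer]]
Tool = Callable[[str], str]


def run_main_agent(prompt: str, model: Model, tools: Dict[str, Tool],
                   max_steps: int = 5) -> str:
    messages: List[Message] = [("user", prompt)]
    for _ in range(max_steps):
        step = model(messages)
        if isinstance(step, FinalAnswer):
            return step.text
        # Run the requested tool and feed its output back to the model.
        messages.append(("tool", tools[step.name](step.argument)))
    raise RuntimeError("no final answer within max_steps")
```

In PydanticAI itself, the tool would be a function registered on the agent with the `@agent.tool` decorator, and this loop happens inside the agent's `run` / `run_sync` call.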

The tool for getting the commit summaries is responsible for suggesting the relevant time scope of the prompt, getting the raw commit messages within that time scope, and prefiltering the commits based on whether they seem relevant to the prompt. It uses two different AI agents through what the PydanticAI documentation describes as programmatic agent hand-off:

- An agent responsible for suggesting the time scope of the prompt.

- An agent responsible for deciding whether an individual commit is relevant to the prompt.
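In a programmatic hand-off, application code calls one agent and then passes its result on to the next step. Here is a library-free sketch of the sequence described above. It assumes the time scope is a date range and relevance is a yes/no decision per commit. Both agents are plain callables standing in for LLM calls, and all names, including the summary format, are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, List, Tuple


@dataclass
class Commit:
    sha: str
    day: date
    message: str


# Stand-ins for the two LLM-backed agents (hypothetical signatures).
ScopeAgent = Callable[[str, date], Tuple[date, date]]  # prompt, today -> (since, until)
RelevanceAgent = Callable[[str, str], bool]            # prompt, message -> keep?


def get_commit_summaries(prompt: str, today: date, commits: List[Commit],
                         suggest_scope: ScopeAgent,
                         is_relevant: RelevanceAgent) -> List[str]:
    # Hand-off 1: the time-scope agent decides which period the prompt is about.
    since, until = suggest_scope(prompt, today)
    # Ordinary code, no LLM: keep only commits inside that period.
    in_scope = [c for c in commits if since <= c.day <= until]
    # Hand-off 2: the relevance agent judges each remaining commit separately.
    relevant = [c for c in in_scope if is_relevant(prompt, c.message)]
    # Hypothetical summary format handed back to the main agent.
    return [f"{c.day.isoformat()} {c.sha[:7]} {c.message}" for c in relevant]


def previous_calendar_month(prompt: str, today: date) -> Tuple[date, date]:
    """Fake scope agent that always answers 'last month'."""
    last_day = today.replace(day=1) - timedelta(days=1)
    return last_day.replace(day=1), last_day
```

The sketch filters by date in plain code before the relevance step. That way the per-commit agent, which makes one LLM call per commit, only sees commits inside the suggested time scope.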