Google Search Scraper — SERP Results, 2x Cheaper

Pricing

Pay per usage

Scrape Google search results (SERPs). Get organic results, People Also Ask, related searches, featured snippets. Perfect for SEO analysis, keyword research. Works with residential proxies. $0.003/search.

Rating: 0.0 (0)

Developer: Ken Digital

Maintained by: Community

Actor stats: 0 bookmarked, 12 total users, 5 monthly active users

Last modified: 2 months ago

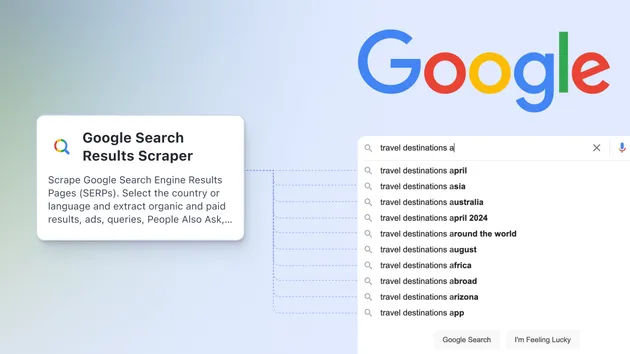

🔍 Google Search Scraper — SERP Results

Scrape Google Search Engine Results Pages (SERPs) for any query. Extract organic results, People Also Ask, related searches, featured snippets, and knowledge panels.

2x cheaper than competitors. Pay just $0.003 per search — most alternatives charge $0.006–$0.012+.

✨ What You Get

| Result Type | Extracted Fields |
|---|---|
| Organic Results | Title, URL, description, position |
| People Also Ask | Questions from the PAA box |
| Related Searches | Suggested searches at bottom of SERP |
| Featured Snippets | Answer box content + source URL |
| Knowledge Panel | Entity title + description |

🚀 Use Cases

- SEO Analysis — Track keyword rankings across countries and languages

- Keyword Research — Discover related searches and PAA questions to target

- Competitor Monitoring — See who ranks for your target keywords

- Content Strategy — Find featured snippet opportunities

- Market Research — Analyze search landscapes for any industry

- Lead Generation — Find businesses ranking for specific services

💰 Pricing Comparison

| Actor | Price per Search |
|---|---|
| This actor | $0.003 |
| Competitor A | $0.006 |
| Competitor B | $0.008 |
| Competitor C | $0.012 |

Save 50%+ on your SERP data costs.
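The arithmetic behind the comparison can be checked with a few lines of Python. This is only a sketch: the prices come from the table above, the function names are made up for illustration, and how the actor counts a billable "search" (per query or per SERP page) is not stated here, so the caller passes the number of billed searches directly.

```python
from decimal import Decimal

# Price of this actor per search, taken from the comparison table.
PRICE_PER_SEARCH = Decimal("0.003")


def estimate_cost(searches: int, price: Decimal = PRICE_PER_SEARCH) -> Decimal:
    """Return the total cost in USD for a number of billed searches."""
    if searches < 0:
        raise ValueError("searches must be non-negative")
    return price * searches


def savings_percent(competitor_price: Decimal, price: Decimal = PRICE_PER_SEARCH) -> Decimal:
    """Return how much cheaper `price` is than `competitor_price`, in percent."""
    return (competitor_price - price) / competitor_price * 100
```

For example, `estimate_cost(10_000)` evaluates to 30 USD, and `savings_percent(Decimal("0.006"))` to 50 percent.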

📥 Input

| Parameter | Type | Default | Description |
|---|---|---|---|
| `queries` | array | required | Search queries to scrape |
| `resultsPerPage` | number | 10 | Results per page (1–100) |
| `language` | string | "en" | Google interface language (en, fr, de, es…) |
| `country` | string | "us" | Country for localized results (us, uk, fr, de…) |
| `maxPages` | number | 1 | SERP pages per query (1–10) |
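As an illustration, the input can be assembled and sanity-checked in Python before a run is started. The key names, defaults, and ranges below come from the table above; the helper `build_run_input` itself is hypothetical, and whether the actor performs the same checks on its side is an assumption.

```python
from typing import Any


def build_run_input(
    queries: list[str],
    results_per_page: int = 10,
    language: str = "en",
    country: str = "us",
    max_pages: int = 1,
) -> dict[str, Any]:
    """Build an input object using the parameter names from the table above."""
    # Drop empty or whitespace-only queries before checking the required field.
    cleaned = [q.strip() for q in queries if q and q.strip()]
    if not cleaned:
        raise ValueError("queries is required and needs at least one non-empty string")
    if not 1 <= results_per_page <= 100:
        raise ValueError("resultsPerPage must be between 1 and 100")
    if not 1 <= max_pages <= 10:
        raise ValueError("maxPages must be between 1 and 10")
    return {
        "queries": cleaned,
        "resultsPerPage": results_per_page,
        "language": language,
        "country": country,
        "maxPages": max_pages,
    }
```

Calling `build_run_input(["best crm software"], country="uk")` returns a dictionary that can be passed as the actor input, with the remaining fields filled from the defaults.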

📤 Output Example
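A record carrying the fields listed under What You Get might be modelled as follows. Every class and field name here is an assumption made for illustration, not the actor's documented schema, and the values are placeholders rather than real search results.

```python
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class OrganicResult:
    position: int
    title: str
    url: str
    description: str


@dataclass
class FeaturedSnippet:
    text: str
    source_url: str


@dataclass
class KnowledgePanel:
    title: str
    description: str


@dataclass
class SerpRecord:
    """Hypothetical shape of one result record per query (names are assumed)."""
    query: str
    organic_results: list[OrganicResult] = field(default_factory=list)
    people_also_ask: list[str] = field(default_factory=list)
    related_searches: list[str] = field(default_factory=list)
    featured_snippet: Optional[FeaturedSnippet] = None
    knowledge_panel: Optional[KnowledgePanel] = None


# Placeholder values only; not real scraped data.
record = SerpRecord(
    query="example query",
    organic_results=[
        OrganicResult(1, "Example title", "https://example.com/", "Example description"),
    ],
    people_also_ask=["Example question?"],
)
record_as_dict = asdict(record)  # plain dict, ready for json.dumps
```

Check the dataset of a real run for the actual key names before relying on this shape.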

🌍 Supported Countries & Languages

Works with any Google-supported country code (gl parameter) and language code (hl parameter):

- Countries: us, uk, ca, au, de, fr, es, it, br, in, jp, kr, and 100+ more

- Languages: en, fr, de, es, pt, it, nl, ja, ko, zh, ar, and 50+ more
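To make the `gl` and `hl` mapping concrete, here is a minimal sketch of how a search URL could be assembled from the input parameters. The function name is hypothetical, and the use of `num` and `start` for page size and pagination is an assumption about how `resultsPerPage` and `maxPages` translate into requests; the actor's own URL construction is not shown in this README.

```python
from urllib.parse import urlencode


def build_search_url(
    query: str,
    language: str = "en",
    country: str = "us",
    results_per_page: int = 10,
    page: int = 0,
) -> str:
    """Build a Google search URL; `page` is zero-based."""
    params = {
        "q": query,
        "hl": language,  # interface language
        "gl": country,   # country for localized results
        "num": results_per_page,            # assumed page-size parameter
        "start": page * results_per_page,   # assumed offset of the first result
    }
    # urlencode keeps the dict order and encodes spaces as '+'.
    return "https://www.google.com/search?" + urlencode(params)
```

With this sketch, the second page of French results for "coffee shops" would be requested with `hl=fr`, `gl=fr`, and `start=10`.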

⚡ Features

- GDPR bypass — Automatically handles EU consent screens

- Anti-blocking — Rotating user agents, random delays, exponential backoff on rate limits

- Pagination — Scrape multiple SERP pages per query

- Lightweight — Minimal dependencies (httpx only, no browser needed)

- Fast — HTTP-based scraping, no headless browser overhead

- Pay per event — Only pay for successful searches, not compute time

🔧 Technical Details

- Uses direct HTTP requests (no Playwright/Puppeteer)

- Parses raw HTML with regex, so no HTML-parsing library is required

- Handles HTTP 429 with exponential backoff (up to 3 retries)

- Random delays between requests (1–4s) to avoid detection

- Supports Google's various HTML layouts and class names
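The retry and delay behaviour described above can be sketched as follows. This is not the actor's code: the function names and the 2-second base delay are assumptions, and the HTTP call is injected as a `fetch` callable returning `(status_code, body)` so the logic stays independent of any particular client.

```python
import random
import time
from typing import Callable, Tuple

MAX_RETRIES = 3           # "up to 3 retries" on HTTP 429
DELAY_RANGE = (1.0, 4.0)  # random pause between requests, in seconds


def fetch_with_backoff(
    fetch: Callable[[str], Tuple[int, str]],
    url: str,
    sleep: Callable[[float], None] = time.sleep,
    base_delay: float = 2.0,  # assumed starting delay
) -> str:
    """Call fetch(url) and retry on HTTP 429, doubling the wait each time."""
    for attempt in range(MAX_RETRIES + 1):
        status, body = fetch(url)
        if status != 429:
            if status >= 400:
                raise RuntimeError(f"HTTP {status} for {url}")
            return body
        if attempt < MAX_RETRIES:
            # Waits of roughly 2s, 4s, 8s, plus up to 1s of jitter.
            sleep(base_delay * 2 ** attempt + random.uniform(0, 1))
    raise RuntimeError(f"still rate limited after {MAX_RETRIES} retries: {url}")


def polite_pause(sleep: Callable[[float], None] = time.sleep) -> float:
    """Sleep for a random 1-4 seconds between requests and return the delay used."""
    delay = random.uniform(*DELAY_RANGE)
    sleep(delay)
    return delay
```

Passing `sleep` as a parameter makes the timing easy to test without waiting, and jitter on top of the doubling delay keeps parallel runs from retrying in lockstep.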

📋 Tips

- Start small — Test with 1 query before running bulk jobs

- Use pagination wisely — Most useful results are on page 1

- Localize — Set country + language to get region-specific results

- Batch queries — More efficient than running separate tasks per query

- Monitor results — Google's HTML structure changes occasionally; report issues

🐛 Limitations

- Google may serve CAPTCHAs or JS-only pages under heavy use or from datacenter IPs. The actor retries but cannot solve CAPTCHAs. On Apify's infrastructure with residential proxies, it works reliably.

- HTML class names change — we use multiple fallback patterns to cover various layouts, but Google's markup evolves.

- Knowledge panel extraction may be incomplete for complex entities.

- Featured snippets vary widely in format; we extract the visible text but structured data is not guaranteed.

- No guaranteed scraping — Use at your own risk; Google's TOS applies.

🧪 Local Testing

Important: Google often serves JavaScript-only pages to datacenter IPs, which will result in no parseable results when running locally on a VPS. The actor is designed to run on Apify's platform with residential proxies, where it works reliably.

To test the parser locally with a saved HTML file:

Or write a quick script:
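A minimal sketch of such a script, assuming a SERP page has been saved to disk. The regex below is an illustrative pattern (a link whose content includes an `<h3>` title), not the actor's own parser, and the function names are hypothetical; Google's real markup varies and changes, so expect to adjust it.

```python
import html
import re
from pathlib import Path

# Assumed pattern: an <a href="http..."> element containing an <h3> title.
RESULT_RE = re.compile(
    r'<a\b[^>]*?\bhref="(?P<url>https?://[^"]+)"[^>]*>'
    r'(?:(?!</a>).)*?<h3\b[^>]*>(?P<title>.*?)</h3>',
    re.DOTALL | re.IGNORECASE,
)
TAG_RE = re.compile(r"<[^>]+>")


def parse_organic(page: str) -> list[dict]:
    """Extract position, title, and URL for each link that wraps an <h3>."""
    results = []
    for position, match in enumerate(RESULT_RE.finditer(page), start=1):
        # Strip nested tags from the title, then decode HTML entities.
        title = html.unescape(TAG_RE.sub("", match.group("title"))).strip()
        url = html.unescape(match.group("url"))
        results.append({"position": position, "title": title, "url": url})
    return results


def parse_file(path: str) -> list[dict]:
    """Read a saved HTML file and parse it."""
    return parse_organic(Path(path).read_text(encoding="utf-8"))
```

Running `parse_file("saved_serp.html")` on a saved page (the file name is a placeholder) returns a list of dictionaries; an empty list usually means the page was a consent screen, a CAPTCHA, or a JS-only response.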

📦 What's Inside

🔒 Disclaimer

This actor is provided as-is. Use responsibly and respect Google's Terms of Service. The author is not responsible for any blocks, CAPTCHAs, or legal issues arising from misuse.

📄 License

MIT

🔗 Integration Examples

Python
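A minimal sketch using the `apify-client` package. The actor ID below is a placeholder to be replaced with the ID shown on the actor's page, the helper `run_search` is hypothetical, and the input keys follow the Input table above.

```python
from typing import Any, Iterator

# Placeholder: copy the real "<username>/<actor-name>" ID from the actor's page.
ACTOR_ID = "<username>/<actor-name>"


def run_search(client: Any, actor_id: str, queries: list[str], **options: Any) -> Iterator[dict]:
    """Start the actor, wait for it to finish, and yield its dataset items."""
    run_input = {"queries": queries, **options}
    run = client.actor(actor_id).call(run_input=run_input)
    if run is None:
        raise RuntimeError("actor run did not return a result")
    yield from client.dataset(run["defaultDatasetId"]).iterate_items()


# Usage (requires `pip install apify-client` and an Apify API token):
#
#   from apify_client import ApifyClient
#   client = ApifyClient("<YOUR_APIFY_TOKEN>")
#   for item in run_search(client, ACTOR_ID, ["best crm software"], country="us", maxPages=1):
#       print(item)
```

The client is passed in as an argument so the function can be exercised with a stub in tests without touching the network.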

Node.js

Make / Zapier / n8n

Use the Apify integration — search for this actor by name in the Apify app connector. No code needed.

🔗 More Scrapers by Ken Digital

| Scraper | What it does | Price |
|---|---|---|
| YouTube Channel Scraper | Videos, stats, metadata via official API | $0.001/video |
| France Job Scraper | WTTJ + France Travail + Hellowork | $0.005/job |
| France Real Estate Scraper | 5 sources + DVF price analysis | $0.008/listing |
| Website Content Crawler | HTML to Markdown for AI/RAG | $0.001/page |
| Google Trends Scraper | Keywords, regions, related queries | $0.002/keyword |
| GitHub Repo Scraper | Stars, forks, languages, topics | $0.002/repo |
| RSS News Aggregator | Multi-source feed parsing | $0.0005/article |
| Instagram Profile Scraper | Followers, bio, posts | $0.0015/profile |
| Google Maps Scraper | Businesses, reviews, contacts | $0.002/result |
| TikTok Scraper | Videos, likes, shares | $0.001/video |
| Google SERP Scraper | Search results, PAA, snippets | $0.003/search |
| Trustpilot Scraper | Reviews, ratings, sentiment | $0.001/review |

⚠️ Important: Residential Proxies Required

This actor targets platforms with strict anti-bot protection (Cloudflare, fingerprinting). To scrape data successfully, you must enable Apify Residential Proxies in the actor settings: go to the Apify Console → Actor → Settings → Proxy configuration and select the Residential proxy pool. Without residential proxies, the actor will return 0 results or errors.

✅ Pro tip: The actor is configured for pay-per-event pricing, so you only pay for successful results.