Reddit Wiki, Emojis & Widgets V1 (6 endpoints)

Pricing

from $1.99 / 1,000 results

Pull a subreddit's wiki (list pages, fetch any page, browse revision history and discussions), plus its custom emojis and the sidebar widgets payload. Bring your own Reddit bearer token (Token V2) and a matching proxy.

Developer

Red Crawler

Reddit Wiki, Emojis & Widgets

Pull a subreddit's full wiki, custom emojis, and sidebar widgets. Six self-contained endpoints. Bring your own Reddit Token V2 + matching proxy — Reddit gates these reads behind a logged-in account and binds the Token V2 to the IP that minted it.

Pick the endpoint, fill the matching section, paste your Token V2 + proxy, hit Start.

What you can fetch

Subreddit names accept python, r/python, or the full subreddit URL — paste whichever you have. Wiki page names accept slashes (e.g. config/sidebar, config/description) — pass them verbatim.
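If you prepare inputs in your own scripts, the three accepted subreddit forms can be reduced to one canonical name before you submit them. The helper below is a minimal sketch under stated assumptions: `normalize_subreddit` is a hypothetical name, and the actor's own parsing may differ in details such as which characters it tolerates.

```python
import re
from urllib.parse import urlparse


def normalize_subreddit(value: str) -> str:
    """Reduce 'python', 'r/python' or a subreddit URL to the bare name.

    Hypothetical helper: it mirrors the accepted input forms described
    above, not the actor's internal implementation.
    """
    text = value.strip()
    if "://" in text:
        # Full URL: keep only the path, e.g. '/r/python/wiki/index'.
        text = urlparse(text).path
    match = re.search(r"(?:^|/)r/([A-Za-z0-9_]+)", text)
    if match:
        return match.group(1)
    name = text.strip("/")
    if not re.fullmatch(r"[A-Za-z0-9_]+", name):
        raise ValueError(f"not a recognisable subreddit: {value!r}")
    return name


# All three spellings resolve to the same name.
for raw in ("python", "r/python", "https://www.reddit.com/r/python/"):
    print(normalize_subreddit(raw))
```

Normalizing up front makes it easier to deduplicate a list of targets that was collected from mixed sources.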

1. Wiki Pages — list every page

Lists every wiki page in a subreddit's wiki.

Input: subreddit name.

Returns per page: subreddit, page slug.

Use it when: mapping a community's full documentation, building a wiki index, finding pages worth scraping in detail.

Example

Input

Output (one record per page)

2. Wiki Page — content of one page

The full markdown content + metadata of a single wiki page.

Inputs:

- Subreddit — community name.

- Page — wiki page slug. Slashes allowed (e.g. config/sidebar). Default: index.

Returns: subreddit, page slug, full markdown content (content_md), revising user, revision date, may-revise flag (whether your Token V2's account can edit), and any other metadata Reddit returns for the page.

Use it when: capturing community rules, FAQ docs, megathread bodies, side-by-side wiki diffs, pulling full text into a knowledge base or chatbot.
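For the knowledge-base use case, the markdown in content_md usually needs to be split into smaller pieces first. The sketch below shows one possible approach, splitting on markdown headings. The function name is hypothetical, the sample text is invented for illustration, and the splitter deliberately ignores edge cases such as `#` lines inside fenced code blocks.

```python
import re


def split_markdown_sections(content_md: str) -> list[tuple[str, str]]:
    """Split wiki markdown into (heading, body) pairs.

    Text that appears before the first heading is returned with an
    empty heading. Hypothetical helper, not part of the actor.
    """
    sections: list[tuple[str, str]] = []
    title, lines = "", []

    def flush() -> None:
        # Skip sections that have neither a heading nor any text.
        if title or any(line.strip() for line in lines):
            sections.append((title, "\n".join(lines).strip()))

    for line in content_md.splitlines():
        heading = re.match(r"^#{1,6}\s+(.*\S)\s*$", line)
        if heading:
            flush()
            title, lines = heading.group(1), []
        else:
            lines.append(line)
    flush()
    return sections


# Invented sample standing in for a record's content_md field.
sample = "Welcome.\n\n# Rules\nBe kind.\n\n## FAQ\nAsk first.\n"
for heading, body in split_markdown_sections(sample):
    print(repr(heading), "->", repr(body))
```

Each pair can then be stored or embedded as its own document, which tends to work better for retrieval than one large page.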

Example

Input

Output (one record — content_md truncated for readability; the full record contains the entire wiki markdown)

3. Wiki Page Revisions — revision history

Revision history for a single wiki page.

Inputs:

- Subreddit.

- Page — wiki page slug (default index).

- Limit — 1 to 100 (default 25).

Returns per revision: subreddit, page, revision ID, timestamp, page name, reason (the editor's note), revision-hidden flag, author.

Use it when: auditing changes, tracking mod activity, monitoring rule rewrites, compliance / change logs.
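The input rules for this endpoint (a required subreddit, a page that defaults to index, and a limit between 1 and 100 that defaults to 25) can be checked before a run is started. The sketch below is illustrative only: the function name, the dictionary keys (`endpoint`, `subreddit`, `page`, `limit`) and the value `wiki_page_revisions` are assumptions, not the actor's confirmed input schema.

```python
def build_revisions_input(subreddit: str, page: str = "index", limit: int = 25) -> dict:
    """Validate inputs against the documented defaults and limits.

    All key names and the endpoint value are hypothetical placeholders.
    """
    name = subreddit.strip()
    if not name:
        raise ValueError("subreddit is required")
    if not 1 <= limit <= 100:
        raise ValueError("limit must be between 1 and 100")
    return {
        "endpoint": "wiki_page_revisions",  # assumed identifier
        "subreddit": name,
        "page": page,  # slashes such as config/sidebar pass through verbatim
        "limit": limit,
    }


print(build_revisions_input("python"))
print(build_revisions_input("python", page="config/sidebar", limit=100))
```

Validating locally avoids spending a run on an input that would be rejected immediately.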

Example

Input

Output (one record per revision — author shortened for readability)

4. Wiki Discussions — discussion threads about one page

Reddit threads referencing a specific wiki page (the public discussions tab on the wiki).

Inputs:

- Subreddit.

- Page — wiki page slug (default index).

- Limit — 1 to 100 (default 25).

Returns per thread: subreddit, page, thread ID, fullname, title, author, score, comment count, created timestamp, permalink, URL, body / selftext.

Use it when: tracking conversation around community docs, finding feedback on rule changes, finding pinned discussion megathreads.

Example

Input

Output (typical record when discussions exist — most wiki pages return zero discussion threads, in which case the run succeeds with no records pushed)

5. All Emojis — custom emojis

A subreddit's full set of custom emojis.

Input: subreddit name.

Returns per emoji: subreddit, emoji name, image URL, user-flair-allowed flag, post-flair-allowed flag, mod-flair-only flag, created-by user.

Use it when: branding capture, mirroring a community's identity, building flair pickers in your own UI, archiving custom emoji sets.

Example

Input

Output (one record per emoji)

6. Get Widgets — sidebar widgets

The sidebar widgets payload — one record per widget (rules, mod list, calendar, custom widget, image widget, button widget, etc.).

Input: subreddit name.

Returns per widget: subreddit, widget ID, kind (rules / moderators / calendar / custom / image / button / etc.), short name, styles object, plus the widget-specific payload (e.g. mod list, calendar entries, custom HTML).

Use it when: theme audits, sidebar mirroring, capturing a community's full visual identity, building branded clones.

Example

Input

Output (one record per widget — calendar data array shortened to one event for readability)

Reddit user auth — required for every endpoint

Every endpoint in this actor requires:

- Token V2 (token_v2 cookie) — your personal Reddit access token.

- Proxy — the proxy you used to mint the Token V2. Reddit binds the Token V2 to the IP that created it, so the proxy IP must match.

Why: Reddit gates wiki / emoji / widget reads behind a logged-in account for many subreddits. Anonymous calls return USER_REQUIRED or 403.

Credential lifetimes

| Credential | Lifetime | When to refresh |
|---|---|---|
| Token V2 (token_v2 cookie) | ~24 hours | Daily — or save a Reddit Session in the Reddit Vault and let it auto-refresh |
| Reddit Session (reddit_session cookie) | ~180 days | Roughly twice a year, or when a run reports unauthorized |

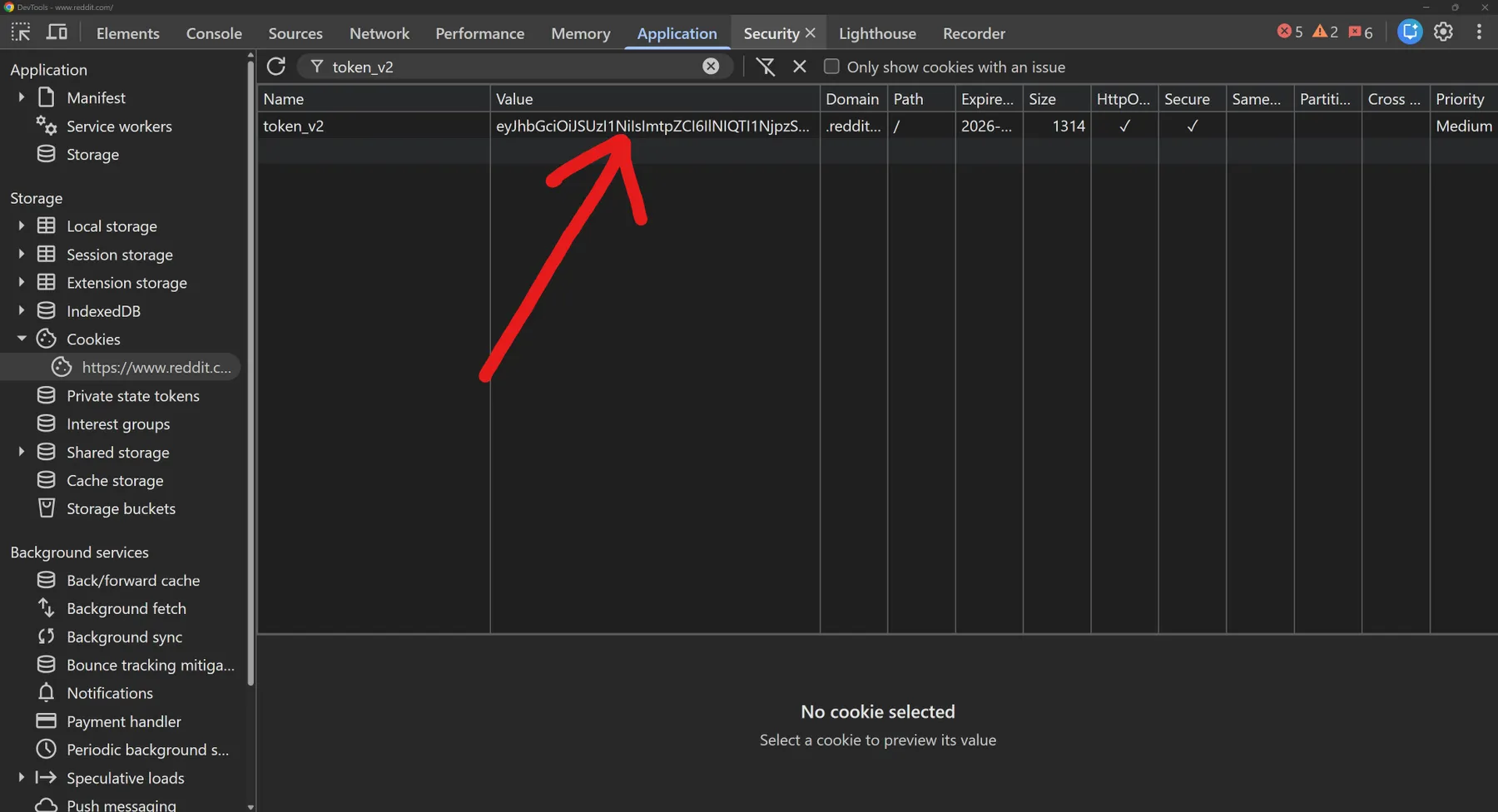

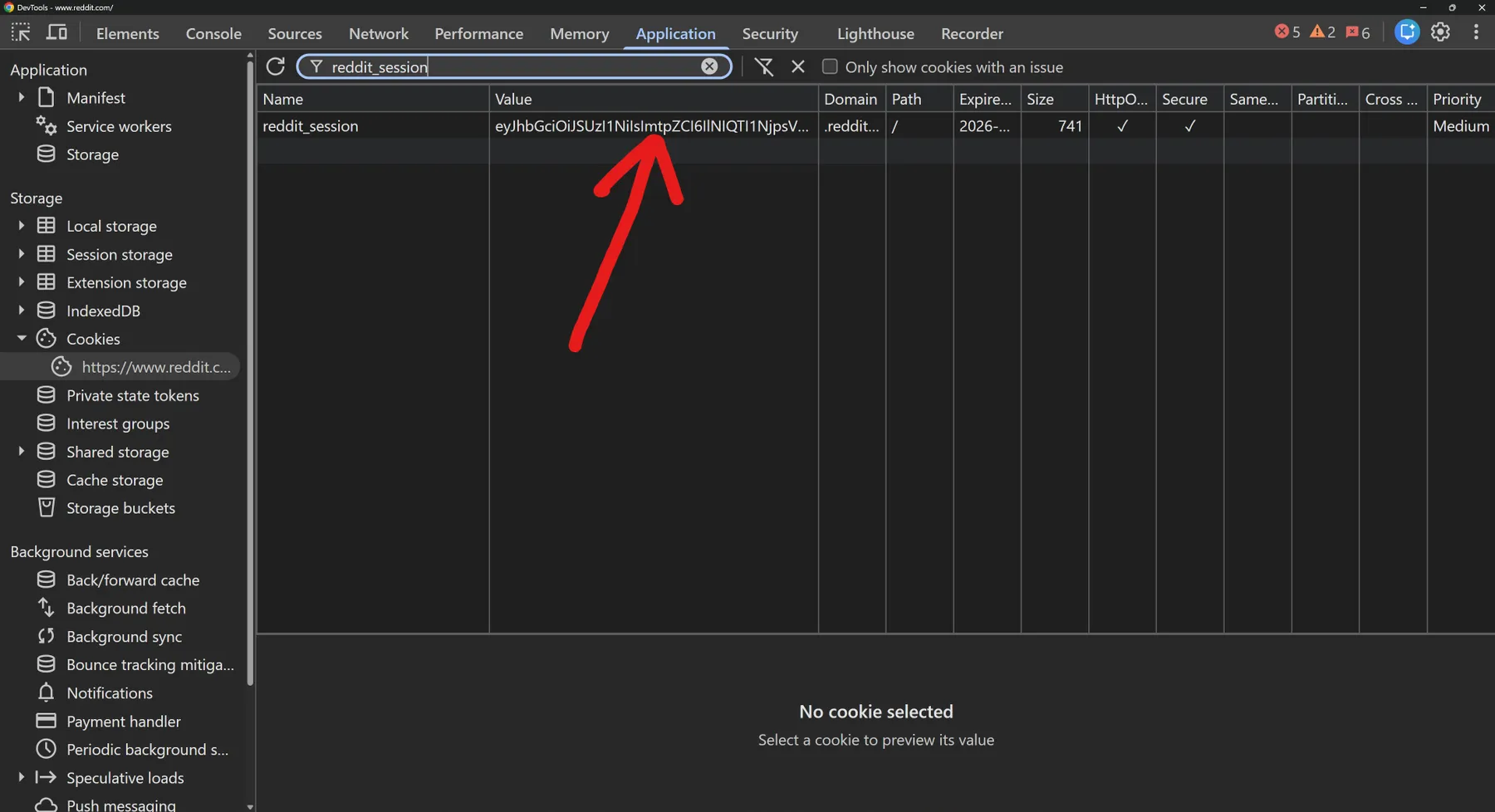

How to extract these from your browser: open Reddit in Chrome / Brave / Edge / Firefox, then DevTools → Application → Cookies → https://www.reddit.com (in Firefox the tab is named Storage rather than Application). Filter by token_v2 (the Token V2) or reddit_session (the Reddit Session) and copy the Value column.

Proxy formats accepted:

- ip:port:user:pass

- http://user:pass@ip:port

- socks5://ip:port
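If you reuse the same proxy in your own tooling (for example, to mint the Token V2 from the matching IP), it can help to convert the accepted proxy formats into a single URL form. The sketch below makes two assumptions that the text above does not confirm: that the bare ip:port:user:pass form denotes an HTTP proxy, and that strings already carrying a scheme can be passed through unchanged. `proxy_to_url` is a hypothetical name.

```python
from urllib.parse import quote


def proxy_to_url(proxy: str) -> str:
    """Convert 'ip:port:user:pass' into 'http://user:pass@ip:port'.

    Values that already contain a scheme (http://, socks5://) are
    returned unchanged. Assumes the bare form is an HTTP proxy.
    """
    text = proxy.strip()
    if "://" in text:
        return text
    # Split at most three times so a password may itself contain ':'.
    parts = text.split(":", 3)
    if len(parts) != 4 or not parts[1].isdigit():
        raise ValueError("expected ip:port:user:pass")
    host, port, user, password = parts
    # Percent-encode credentials so characters like '@' stay unambiguous.
    return f"http://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}"


print(proxy_to_url("1.2.3.4:8080:alice:s3cret"))
print(proxy_to_url("socks5://1.2.3.4:1080"))
```

The resulting URL form is what most HTTP clients expect in their proxy settings.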

If the proxy IP doesn't match the Token V2's mint IP, Reddit returns 401 / 403 and the run fails.

Both fields are stored as secrets by Apify and not echoed back in logs.

How to run

- Pick an endpoint in the "What to fetch" dropdown.

- Open the matching section and fill its fields. Each section is independent — fields outside your chosen section are ignored.

- Scroll to the Reddit user auth section and paste your Token V2 + proxy (or pick a saved account from the Reddit Vault).

- Click Start.

Default subreddit is python so the actor runs out of the box (once your Token V2 + proxy are filled in).

Output

Results are pushed to the actor's default dataset. View as a table or download as JSON / CSV / Excel / XML.

- Wiki Page / All Emojis / Get Widgets push one record per page / emoji / widget.

- Wiki Pages pushes one record per page slug.

- Wiki Page Revisions / Wiki Discussions push up to your limit records.

Every record is tagged with endpoint so you can tell rows apart at a glance. The most useful columns are placed first so the dataset Table view is readable without scrolling.
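Because every record carries an endpoint tag, a downloaded dataset that mixes several runs can be separated again with a few lines of code. In the sketch below only the `endpoint` field name comes from the description above. The tag values and the other fields in the sample are invented for illustration, and `group_by_endpoint` is a hypothetical helper.

```python
from collections import defaultdict


def group_by_endpoint(records: list[dict]) -> dict[str, list[dict]]:
    """Bucket dataset records by their 'endpoint' tag."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for record in records:
        # Records without a tag are kept under 'unknown' rather than dropped.
        groups[record.get("endpoint", "unknown")].append(record)
    return dict(groups)


# Invented sample rows; real tag values and fields may differ.
sample = [
    {"endpoint": "wiki_pages", "subreddit": "python", "page": "index"},
    {"endpoint": "wiki_pages", "subreddit": "python", "page": "faq"},
    {"endpoint": "all_emojis", "subreddit": "python", "name": "snake"},
]
for endpoint, rows in group_by_endpoint(sample).items():
    print(endpoint, len(rows))
```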

Common edge cases

- Token V2 + proxy mismatch — Reddit binds the Token V2 to the IP it was minted on. If the proxy IP doesn't match, Reddit returns 401 / 403.

- Mod-restricted wikis — return Reddit's WIKI_DISABLED / 403 rather than a record. Use a public-wiki community for best results, or use a Token V2 from an account that holds mod rights for the target community.

- Custom emojis on some subs require login — when Reddit returns USER_REQUIRED, the actor pushes a single note record explaining the response (rather than failing the whole run).

- Slashes in page names — config/sidebar, config/description, etc. are passed through verbatim. No special escaping needed.

- Suspended account / revoked Token V2 — Reddit returns 401 immediately. Refresh your Token V2 (or save a Reddit Session in the vault for auto-refresh) and rerun.

- Empty results — return zero records. The actor reports an empty result rather than failing.

Status & error reference

Run status (Apify-side, shown on the run page)

| Status | Apify message | Meaning | What to do |
|---|---|---|---|
| SUCCEEDED | "Actor succeeded with N results in the dataset" | Run finished. Some or zero records pushed. | Open the dataset to view results. |
| FAILED | "The Actor process failed…" | Validation error or upstream Reddit fault. | Check the run log. You are NOT charged for failed runs. |
| TIMED-OUT | "The Actor timed out. You can resurrect it with a longer timeout to continue where you left off." | Run exceeded its timeout. Rare for this actor at default 300 s. | Re-run; consider narrowing inputs or lowering limit. |
| ABORTED | "The Actor process was aborted. You can resurrect it to continue where you left off." | You stopped the run manually. | No charge for unpushed results. |

Common in-run conditions (visible in run log)

| Condition | Cause | Result |
|---|---|---|
| Empty result set | Wiki / emoji / widget set is empty for that subreddit. | Run SUCCEEDED, 0 records, no charge. |
| WIKI_DISABLED / 403 | Wiki is mod-restricted or disabled. | Run SUCCEEDED, note record explains the response. |
| USER_REQUIRED | Subreddit requires login to view custom emojis. | Run SUCCEEDED, note record explains the response. |
| 401 / Token V2 + proxy mismatch | Reddit binds the Token V2 to its mint IP; proxy IP differs. | Run FAILED. Refresh Token V2 from the same IP. |
| 401 / suspended or revoked Token V2 | Account suspended, password changed, or token revoked. | Run FAILED. Mint a new Token V2 (or use the vault session). |
| Validation error: missing subreddit | Required input not provided. | Run FAILED immediately, no charge. |

Why this actor is fast

- Speed — 1–3 seconds per call, end-to-end. Pure HTTP to Reddit's API. No browser to boot, no Playwright / Selenium / Puppeteer overhead. Competing browser-based scrapers typically take 15–60 seconds per call.

- Reliability — zero browser flakiness. No headless-Chromium crashes. No JS-render timeouts. No captcha pages. No surprise mid-run failures from a browser quirk.

- Footprint — under 100 MB RAM per run. Most browser-based scrapers need 1–4 GB. Built for reliability behind the scenes — just paste your inputs and run.

Pricing

Pay-per-result. You're only charged for records actually pushed to the dataset — failed runs, validation errors, and empty results cost nothing.

| Event | Trigger | Price (per 1,000) |
|---|---|---|
| result | Each wiki page / emoji / widget / revision / discussion record pushed | $1.99 |
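As a quick worked example of the pay-per-result model, the cost of a run is the number of records pushed multiplied by the per-1,000 price. The sketch below uses the $1.99 rate listed above. `estimate_cost` is a hypothetical helper and does not account for any platform fees or plan discounts that may apply to your account.

```python
from decimal import Decimal

# Listed rate: $1.99 per 1,000 pushed records.
PRICE_PER_1000_USD = Decimal("1.99")


def estimate_cost(records_pushed: int) -> Decimal:
    """Estimate the charge in USD for a given number of pushed records."""
    if records_pushed < 0:
        raise ValueError("records_pushed cannot be negative")
    return PRICE_PER_1000_USD * records_pushed / 1000


# 250 revision records -> 250 * 1.99 / 1000 = 0.4975 USD
print(estimate_cost(250))
# An empty result set pushes no records, so nothing is charged.
print(estimate_cost(0))
```

Decimal is used instead of float so that small per-record amounts add up without binary rounding drift.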

Need a different shape of data?

- Reddit Subreddits — about / rules / sidebar / popular-feed / autocomplete — anonymous, no Token V2 needed

- Reddit Scraper V2 — 11 single-record reads for posts, comments, profiles, communities

- Reddit Bulk Scrape V2 — paste up to 1500 IDs / names / URLs in a single run

- Reddit Search V2 — search posts / comments / subreddits / users with full filters

- Reddit Users V2 — single-user lookups (profile, trophies, posts, comments)

- Reddit Posts V1 — front-page feed, crosspost duplicates, pinned posts

- Reddit Vault — save Reddit accounts once, call them by name from this actor (free)

Support and feedback

Found a bug, want a feature, or hit a Reddit error code we don't translate clearly? Open an issue via the actor's Apify Console feedback link, or reach out at the RedCrawler support channel.

Reddit Wiki, Emojis & Widgets is part of the RedCrawler family of Reddit actors. RedCrawler is independent — not affiliated with, endorsed by, or sponsored by Reddit, Inc. Use it within Reddit's API terms.