Google Maps Extractor

Pricing

from $2.10 / 1,000 scraped places

Extract data from hundreds of places fast. Scrape Google Maps by keyword, category, location, URLs & other filters. Get addresses, contact info, opening hours, popular times, prices, menus & more. Export scraped data, run the scraper via API, schedule and monitor runs, or integrate with other tools.

What is Google Maps Extractor?

Google Maps Extractor is a web scraping tool that extracts Google Maps place details. It’s a stripped-down version of the original Google Maps Scraper. Just enter a keyword, category, or URL together with a location to scrape data such as price, geolocation, place name, contact info, and more, quickly and at a predictable price.

We also recommend trying out Google Maps Scraper, since it extracts even more data than Google Maps Extractor at the same speed and at a lower price.

What can this Google Maps Extractor do?

- Find and scrape places in Google Maps by search query

- Extract places in Google Maps by category, for example “parking lot” or “bar”

- Scrape Google Maps by location (country, city, county, or zip code)

- Narrow down search areas by using coordinates or by search URL

- Get past Google Maps' limitations, such as showing no more than 120 places per map

- Export Google Maps data in Excel, CSV, JSON, HTML, and other formats

- Use the API from Python and Node.js, API endpoints, webhooks, and integrations with other apps

What data can this Google Maps Extractor extract?

| 🥡 Place name and URL | 💲 Price | 🏷️ Category |
|---|---|---|
| 🌍 Country code and phone number | 🏠 Address, neighborhood, street, city, postal code, state | 🌐 Website |
| ✅ Claim this business | 🧭 Location coordinates | 🚫 Permanently or temporarily closed |
| ⭐ Total score | 🆔 Place ID | 🗓️ Scraped at |
| 📊 Reviews count | 🏷️ Review tags | 🖼️ Image categories |
| 📸 Photos count | 🔖 Place tags | 🍔 Google food URL |
| ⌚ Opening hours | 👀 People also search | ⛽️ Gas prices |
| 🚫 Promoted status | ♿ Accessibility info | |
| 🏢 Company contacts enrichment (emails, phone numbers, and social media links from business website) | 👥 Business leads enrichment (full name, work email, phone number, job title, and LinkedIn profile) | 📱 Social media profile enrichment (follower counts, descriptions, and verification status for Facebook, Instagram, YouTube, TikTok, X) |

Data Google Maps Extractor can’t extract

This web scraper does not extract the following data from Google Maps:

- Images

- Reviews

To scrape images or reviews, we recommend you try Google Maps Scraper, instead, which is a more comprehensive solution than Google Maps Extractor.

How much does it cost to extract Google Maps data?

Google Maps Extractor uses a pay-per-event pricing model, where you're charged based on specific actions taken during scraping. This provides transparent, predictable pricing based on actual usage.

Base pricing

The foundation of costs includes:

- Place scraped (`place-scraped`): Cost varies by plan tier (see table below). This event is triggered for every place whose basic details are scraped from Google Maps.

Optional add-ons

Additional chargeable events include:

- Filter applied (`filter-applied`): Cost varies by plan tier (see table below). This is charged when you use category filters to narrow down your search results. Note that multiple categories count as one filter application per place.

- Additional place details (`place-details-scraped` with `scrapePlaceDetailPage` enabled): $0.002 per place. This add-on extracts additional details beyond the basics, such as reviews distribution, image categories, popular times, opening hours, and more. Enabling the `scrapePlaceDetailPage` input option is required to scrape the reviews count.

Pricing by plan tier

| Plan Tier | Place Scraped (per 1,000) | Filter Applied (per 1,000) |
|---|---|---|
| Free | $5.00 | $1.00 |
| Starter | $4.00 | $1.00 |
| Scale | $3.00 | $0.75 |
| Business | $2.10 | $0.55 |

On the free plan you get $5 in credit, meaning you can scrape 1,000 places on Google Maps without it costing you a penny (or fewer if you use add-ons).

Subscribing to one of our paid plans adds more credit to your account and reduces per-event costs.
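To get a feel for how these events add up, here is a minimal Python sketch of a cost estimate. It uses only the prices listed above. The function name and parameters are hypothetical, and it assumes the filter event is charged once for every scraped place when category filters are on. Enrichment add-ons are left out because their prices are not listed here.

```python
from decimal import Decimal

# Per-1,000 prices in USD, copied from the plan tier table above.
PRICES_PER_1000 = {
    "Free": {"place_scraped": Decimal("5.00"), "filter_applied": Decimal("1.00")},
    "Starter": {"place_scraped": Decimal("4.00"), "filter_applied": Decimal("1.00")},
    "Scale": {"place_scraped": Decimal("3.00"), "filter_applied": Decimal("0.75")},
    "Business": {"place_scraped": Decimal("2.10"), "filter_applied": Decimal("0.55")},
}

# Flat add-on price per place for additional place details.
DETAILS_PER_PLACE = Decimal("0.002")


def estimate_cost(places, tier, use_category_filter=False, scrape_details=False):
    """Rough cost estimate in USD for a run (hypothetical helper).

    Assumes one filter-applied event per scraped place when filters are used.
    """
    prices = PRICES_PER_1000[tier]
    total = places * prices["place_scraped"] / 1000
    if use_category_filter:
        total += places * prices["filter_applied"] / 1000
    if scrape_details:
        total += places * DETAILS_PER_PLACE
    return total


# 1,000 places on the Free plan uses up the $5 credit.
print(estimate_cost(1000, "Free"))
# 2,500 places on Business with category filters and place details.
print(estimate_cost(2500, "Business", use_category_filter=True, scrape_details=True))
```

`Decimal` is used instead of floats so that small per-place prices do not pick up rounding noise.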

Why pay-per-event pricing?

The pay-per-event model offers several benefits:

- Transparent pricing: You only pay for what you use, with no hidden costs

- Predictable costs: Know exactly what each action costs before you start

- No idle charges: Unlike compute-time pricing, you're not charged for waiting or processing time

Google Maps Scraper offers more flexible pricing with additional add-on options, allowing you to customize exactly which data points you want to extract and pay for.

How do I use Google Maps Extractor to scrape map data?

This Google Maps Extractor was designed for an easy start, even if you've never extracted map data from the web before. A video tutorial is also available if you'd prefer a walkthrough.

⬇️ Input

The input for Google Maps Extractor should be either a Google Maps URL or a location in combination with a search term. You can provide keywords, URLs, and categories either one by one or in bulk. You can provide the location as a simple city name, a full postal address, or as a polygon consisting of multiple coordinates.

Here's a simple input example, scraping 1,000 parking lots in New York City:

Click on the input tab for a full explanation of input in JSON.
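As a rough illustration of the parking-lot example, the input could be assembled like this in Python. The field names `searchStringsArray`, `locationQuery`, and `maxCrawledPlacesPerSearch` are assumptions here, and `build_input` is a hypothetical helper; the Input tab remains the authoritative reference.

```python
import json


def build_input(search_terms, location, max_places_per_search):
    """Build a run input dict (hypothetical helper; field names are assumed)."""
    return {
        "searchStringsArray": list(search_terms),  # keywords, one search each
        "locationQuery": location,                 # city name or postal address
        "maxCrawledPlacesPerSearch": max_places_per_search,
    }


# Up to 1,000 parking lots in New York City.
run_input = build_input(["parking lot"], "New York City", 1000)
print(json.dumps(run_input, indent=2))
```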

Search terms

Using multiple similar search terms can increase the number of scraped places, but it also increases the time a run takes. We recommend using a combination of search terms that are distinct or overlap only slightly in meaning. Using a long list of duplicate search terms will just increase the time of a run without providing more results.

Example of a good list of search terms: [restaurant, bar, pub, cafe, buffet, ice cream, tea house]

Example of a bad list of search terms: [restaurant, restaurants, chinese restaurant, cafe, coffee, coffee shop, takeout]

Google search results often include categories adjacent to your search (e.g. restaurant might also capture some cafe or bar places), but you will get better results if you also use them as separate search terms.

Categories

Using categories can be dangerous!

Search terms can introduce false positives, extracting some irrelevant places. Categories can be used to narrow down the results to just the ones you select.

Categories can also be dangerous because they can cause false negatives, excluding places you might want in the results. Google has thousands of categories, and many are synonymous. You must list all the categories you want to match, including all synonyms; for example, Divorce lawyer, Divorce service, and Divorce attorney are three distinct categories. Some places might be classified as only one of them, meaning you should input all of them. For this reason, we recommend going through the categories list carefully. For some use cases, you might want to select as many as 100 categories to ensure you don't miss any relevant places.

To help with this, Google Maps Extractor tries to increase the chance of a match by doing the following:

- If any category of a place (each can have several categories) matches any category from your input, it will be included.

- If all words from your input are contained in a category name, it will be included. E.g. `restaurant` will match `Chinese restaurant` and `Pan Asian restaurant`.
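The two matching rules above can be sketched in a few lines of Python. This is only an illustration of the described behavior, not the Actor's actual implementation. Case-insensitive comparison and whole-word matching are assumptions, and `category_matches` is a hypothetical name.

```python
def category_matches(place_categories, input_categories):
    """Return True if a place passes the category filter (illustrative only)."""
    place_norm = [c.lower() for c in place_categories]
    for wanted in input_categories:
        wanted_norm = wanted.lower()
        wanted_words = wanted_norm.split()
        for category in place_norm:
            # Rule 1: any place category equals any input category.
            if category == wanted_norm:
                return True
            # Rule 2: every word of the input appears in the category name.
            category_words = set(category.split())
            if wanted_words and all(w in category_words for w in wanted_words):
                return True
    return False


print(category_matches(["Chinese restaurant"], ["restaurant"]))
print(category_matches(["Divorce lawyer"], ["Divorce attorney"]))
```

The second call shows the false-negative risk: under these rules, a place listed only as `Divorce lawyer` is not matched by `Divorce attorney`, which is why synonyms need to be listed explicitly.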

⚠️ If categories are used without search terms, they will be used both as search terms and as category filters. However, for the above reasons, using categories without search terms is not recommended. We generally recommend using fewer search terms and more categories.

Search without geolocation

Rather than using the standard search term and location inputs, you may also opt to use only the search term (e.g. "restaurants in berlin") or a direct Google Maps search URL (e.g. https://www.google.com/maps/search/restaurants/@52.5190603,13.388574,13z/) without the location input field. However, this approach will limit the number of results to a maximum of 120 because it only opens a single map screen on Google with a finite scroll. We only recommend skipping location input if you don't need more than 120 results, you need the lowest possible latency, or you want to get the results in the same order as Google would provide.
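A minimal sketch of the two location-free input shapes described above, again in Python. The field names `searchStringsArray` and `startUrls` are assumptions, and `build_unlocated_input` is a hypothetical helper. Either way, expect at most 120 results.

```python
def build_unlocated_input(search_term=None, search_url=None):
    """Build an input without the location field (field names are assumed)."""
    # Exactly one of the two arguments must be given.
    if (search_term is None) == (search_url is None):
        raise ValueError("Provide exactly one of search_term or search_url")
    if search_term is not None:
        # The location is part of the search term itself.
        return {"searchStringsArray": [search_term]}
    # A direct Google Maps search URL.
    return {"startUrls": [{"url": search_url}]}


by_term = build_unlocated_input(search_term="restaurants in berlin")
by_url = build_unlocated_input(
    search_url="https://www.google.com/maps/search/restaurants/@52.5190603,13.388574,13z/"
)
print(by_term)
print(by_url)
```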

⬆️ Output example

The results will be wrapped into a dataset found in the Output or Storage tab. Note that the output is organized in tables and tabs for your convenience. You can view results as a table, JSON, or as a map.

Once the run is finished, you can also download the dataset in various data formats (JSON, CSV, Excel, XML, HTML). Before exporting, you can pick or omit specific output fields; alternatively, you can also choose to download the whole view, which includes thematically connected data.
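If you prefer to download results programmatically, the Apify API exposes dataset items over HTTP. The sketch below only builds the download URL. The helper name is hypothetical, the output field names `title` and `address` are assumed examples, and a non-public dataset would additionally need your API token.

```python
from urllib.parse import urlencode


def dataset_export_url(dataset_id, fmt="csv", fields=None, omit=None):
    """Build a dataset items URL for the Apify API (hypothetical helper)."""
    params = {"format": fmt}  # e.g. json, csv, xlsx, xml, html
    if fields:
        params["fields"] = ",".join(fields)  # keep only these output fields
    if omit:
        params["omit"] = ",".join(omit)      # drop these output fields
    return f"https://api.apify.com/v2/datasets/{dataset_id}/items?{urlencode(params)}"


# "abc123" is a placeholder dataset ID.
print(dataset_export_url("abc123", "csv", fields=["title", "address"]))
```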

Table view

The table view can be manipulated in different ways. There is a general overview, but you can also sort the table by contact info, location, rating, reviews, or other fields.

JSON file

Here's the amount of data you'd get for a single scraped place:

🏢 Company contacts enrichment

When the company contacts enrichment add-on is enabled, each place result will include social media links and contact details found on the business website:

👥 Business leads enrichment

When business leads enrichment is enabled, each place result will include an array of employee leads with contact and company details:

⚠️ The number of leads you request is per place found. Setting this to a high number can significantly increase your costs. For example, requesting 10 leads for a search that finds 1,000 places will result in an attempt to find 10,000 leads. You will only be charged for leads that are successfully found.

📱 Social media profile enrichment

When social media profile enrichment is enabled, Google Maps Extractor enriches discovered social media URLs with detailed profile information (follower counts, descriptions, and verification status). The enriched profiles are included directly in the place output.

Important notes:

- Social media profile enrichment requires the company contacts enrichment feature to be enabled (this is automatically enabled when you enable social media profile enrichment)

- Each enriched social media profile is a separate billable event

- You can enable enrichment for specific platforms only (e.g., only Facebook and Instagram)

- All enrichment options are disabled by default

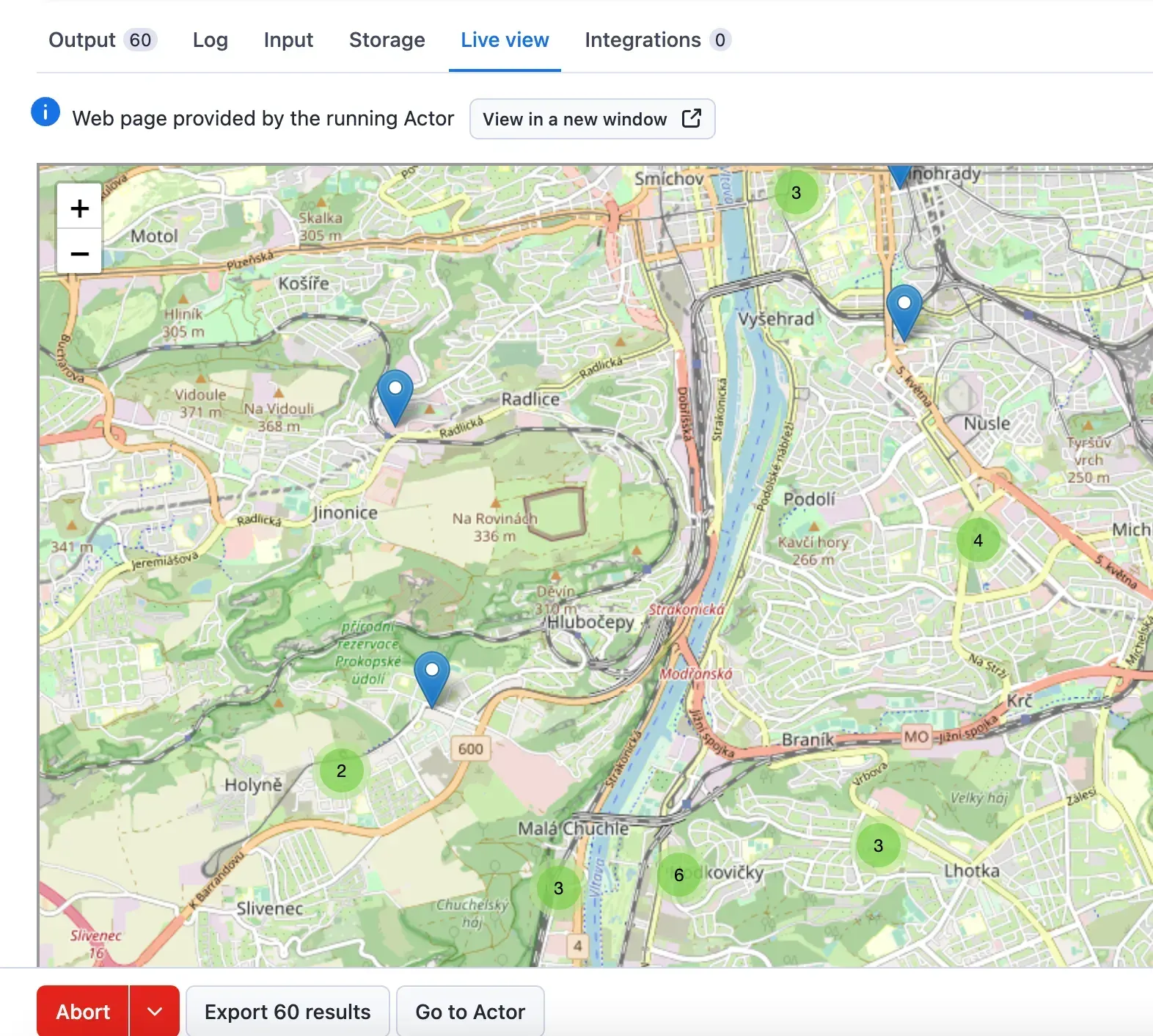

Map view

Google Maps Extractor provides a zoomable map that shows all the places scraped. The map is shown in the Live View tab on the Actor run page and is also stored in the Key-Value Store as the `results-map.html` record.

What are other tools for scraping Google Maps?

For more comprehensive Google Maps data, we recommend using Google Maps Scraper. It accepts the same input options, adds many more, and can extract many more types of data.

For more specific use cases, we recommend the following:

- Google Maps Reviews Scraper, which focuses on Google Maps reviews

- AI Text Analyzer for Google Reviews, which can help you figure out keywords from review batches

- Google Maps Scraper Orchestrator, which lets you run multiple Google Maps Scraper instances concurrently

- Competitive Intelligence AI Agent, which can figure out competitors’ strengths and weaknesses

- Market Expansion AI Agent, which can help you determine where best to expand to

Frequently asked questions

How can I extract Google Maps data by coordinates?

If you want to restrict your search to a specific area, use the 🛰 Custom search area section of this tool. You provide coordinate pairs for the area, and the scraper creates start URLs from them. Google Maps Extractor supports several types of search area geometry: Polygon, MultiPolygon, and Point (Circle). We’ve found polygons and circles to be the most useful when extracting data from Google Maps.

Feel free to consult with this guide or its equivalent in video form.
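As a sketch of what a custom search area might look like, the helpers below build a Polygon and a Point (Circle) in GeoJSON style, where coordinates are ordered longitude first and a polygon ring ends on its starting point. The `radiusKm` key and the idea of passing the result in a `customGeolocation` input field are assumptions, and both function names are hypothetical.

```python
def polygon_search_area(corners):
    """Build a GeoJSON-style Polygon from (latitude, longitude) tuples."""
    if len(corners) < 3:
        raise ValueError("A polygon needs at least three corners")
    # GeoJSON orders each position as [longitude, latitude].
    ring = [[lng, lat] for lat, lng in corners]
    # Close the ring by repeating the first position at the end.
    if ring[0] != ring[-1]:
        ring.append(list(ring[0]))
    return {"type": "Polygon", "coordinates": [ring]}


def circle_search_area(lat, lng, radius_km):
    """Build a Point with a radius (the radiusKm key is an assumption)."""
    return {"type": "Point", "coordinates": [lng, lat], "radiusKm": radius_km}


# A rectangle roughly around Berlin, and a 5 km circle in its center.
area = polygon_search_area([(52.6, 13.2), (52.6, 13.6), (52.4, 13.6), (52.4, 13.2)])
circle = circle_search_area(52.5190603, 13.388574, 5)
print(area)
print(circle)
```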

What are the disadvantages of the Google Maps API?

With the Google Maps API, you get $200 worth of credit usage every month free of charge. That means 28,500 map loads per month. However, the Google Maps API caps your search results at 60, regardless of the radius you specify. So, if you want to scrape data for bars in New York, for example, you'll get results for only 60 of the thousands of bars in the area. Google Maps Extractor imposes no rate limits or quotas and provides more cost-effective, comprehensive results.

Can I integrate Google Maps Extractor with other apps?

Yes. Google Maps Extractor can be connected with almost any cloud service or web app thanks to integrations on the Apify platform. You can integrate your Google Maps data with Zapier, Slack, Make, Airbyte, GitHub, Google Sheets, Asana, LangChain and more.

You can also use webhooks to carry out an action whenever an event occurs, for example, to get a notification whenever Google Maps Extractor successfully finishes a run.

Can I use Google Maps Extractor as its own API?

Yes, you can use the Apify API to access Google Maps Extractor programmatically. The API allows you to manage, schedule, and run Apify Actors, access datasets, monitor performance, get results, create and update Actor versions, and more.

To access the API using Node.js, you can use the apify-client NPM package. To access the API using Python, you can use the apify-client PyPI package.

For detailed information and code examples, see the API tab or refer to the Apify API documentation.

Can I use this Google Maps API in Python?

Yes, by using the Apify API. To access Google Maps Extractor from Python, use the `apify-client` PyPI package. You can find more details about the client in our Docs for Python Client.
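A minimal sketch of a run from Python might look like the following. The Actor ID `compass/google-maps-extractor`, the input field names, and the `APIFY_TOKEN` environment variable are assumptions, so check the API tab for the exact values. The client import sits inside the function so the rest of the script loads even without `apify-client` installed.

```python
import os

# Assumed Actor ID; confirm it on the Actor's API tab.
ACTOR_ID = "compass/google-maps-extractor"

# Assumed input field names; see the Input tab for the real schema.
RUN_INPUT = {
    "searchStringsArray": ["parking lot"],
    "locationQuery": "New York City",
    "maxCrawledPlacesPerSearch": 50,
}


def scrape_places(token, run_input):
    """Start the Actor, wait for it to finish, and return the dataset items."""
    from apify_client import ApifyClient  # pip install apify-client

    client = ApifyClient(token)
    # call() starts the run and blocks until it finishes.
    run = client.actor(ACTOR_ID).call(run_input=run_input)
    return list(client.dataset(run["defaultDatasetId"]).iterate_items())


if __name__ == "__main__":
    token = os.environ.get("APIFY_TOKEN")
    if token:
        places = scrape_places(token, RUN_INPUT)
        print(f"Scraped {len(places)} places")
    else:
        print("Set APIFY_TOKEN to run this example")
```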

Is it legal to scrape Google Maps data?

Web scraping is legal if you are extracting publicly available data, which covers most data on Google Maps. However, you should respect boundaries such as personal data and intellectual property regulations. You should only scrape personal data if you have a legitimate reason to do so, and you should also factor in Google's Terms of Use.

Your feedback

We’re always working on improving the performance of our Actors. So if you’ve got any technical feedback for Google Maps Extractor or simply found a bug, please create an issue on the Actor’s Issues tab.