Green Acres Scraper

Pricing

from $15.00 / 1,000 results

Green Acres (est. 2004, Paris) is an international property marketplace. This actor scrapes your search URL into structured data: pricing, location, specs, agency details, full description, image URL, contact phone, and many more fields. Scrape property listings anywhere in the world.

Rating: 0.0 (0)

Developer: Marco Rodrigues

Maintained by Community

Actor stats

- Bookmarked: 0
- Total users: 2
- Monthly active users: 1
- Last modified: a month ago

🏠 Green Acres Property Scraper

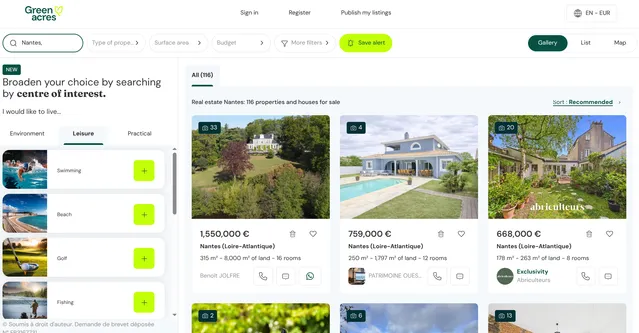

Green Acres is an international real estate marketplace founded in 2004 and headquartered in Paris, France. Listings span homes, apartments, land, and commercial property across many countries. You can start from a search or region URL in France, Portugal, the UK, or elsewhere and still get consistent, structured records.

This actor turns those listing and detail pages into structured, machine-ready data without manual copying. Paste a Green Acres search or category URL and set how many properties you want; the scraper walks the results grid, opens each advert, and extracts price, location, specs, agency details, and the full description. It also captures agency contact phone numbers when the site exposes them, which is often the first thing people want for follow-up, lead lists, or CRM imports.

💡 Perfect for…

- Buyers & investors: Compare asking prices, surface areas, and price-per-m² across regions in one dataset instead of dozens of open tabs, and call agencies directly using the extracted `contact_phone` numbers when available.

- Market analysts & researchers: Track supply by locality, bedroom count, or price band; join outputs with other datasets using `url`, `address_*`, and timestamps.

- Prop-tech & CRM tools: Feed clean property records into your product. `contact_phone` is ideal for diallers, outreach, and matching leads to listings; combine it with `agency_name`, `agency_address`, and `image_url` for full enrichment workflows.

- Data & ML pipelines: Run NLP or classification on `description`; use `surface_area`, `rooms`, `bedrooms`, and `additional_features` as structured features.

- RAG & assistants: Chunk `description` and index with `url` and `address_locality` so answers cite the exact listing page.
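As a rough illustration of the buyer and analyst use cases above, the sketch below groups records by `address_locality` and takes the median price per m². The sample records are invented for the example, and the fallback of deriving the value from `price / surface_area` when `price_per_m2` is missing is an assumption, not something the actor does itself.

```python
from collections import defaultdict
from statistics import median

# Hypothetical sample records shaped like the actor's output; all values are made up.
records = [
    {"url": "https://example.com/a", "address_locality": "Funchal",
     "price": 300000.0, "surface_area": 100.0, "price_per_m2": None},
    {"url": "https://example.com/b", "address_locality": "Funchal",
     "price": 450000.0, "surface_area": 150.0, "price_per_m2": 3000.0},
    {"url": "https://example.com/c", "address_locality": "Santa Cruz",
     "price": 200000.0, "surface_area": 80.0},
    {"url": "https://example.com/d", "address_locality": "Santa Cruz",
     "price": None, "surface_area": 60.0},
]


def price_per_m2(record):
    """Return the listed price per m², or derive it from price / surface_area."""
    listed = record.get("price_per_m2")
    if listed is not None:
        return float(listed)
    price, area = record.get("price"), record.get("surface_area")
    if price is None or not area:
        return None  # not enough data to derive a value
    return price / area


def median_price_per_m2_by_locality(rows):
    """Group usable records by locality and take the median price per m²."""
    grouped = defaultdict(list)
    for row in rows:
        value = price_per_m2(row)
        if value is not None:
            grouped[row.get("address_locality") or "unknown"].append(value)
    return {locality: median(values) for locality, values in grouped.items()}


summary = median_price_per_m2_by_locality(records)
print(summary)
```

Records without a usable price or surface are skipped rather than counted as zero, so sparse listings do not drag the median down.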

✨ Why you'll love this scraper

- 🎯 Start from any listing URL: Use the same filters you use on the site (location, property type, price range), copy the address bar URL into `input_url`, and the scraper follows pagination to collect more cards until it reaches your cap.

- ⚙️ Rich detail pages: Each record includes main characteristics (address, surface, land, rooms, bedrooms), parsed numeric fields where applicable, agency block (reference, mandate, fees, price/m²), popularity-derived published date, and the long-form description.

- 📞 Agency phone numbers: For each advert, the scraper pulls the call number exposed on the page (the same line agencies use for inbound enquiries) into `contact_phone`, so you can reach sellers or agencies without copying numbers manually from every listing.

- 🖼️ Media: Captures the hero image URL when the gallery is present.

- 🌍 Multi-country: Green Acres serves listings worldwide; `currency` is the symbol shown next to the price on the page (e.g. €, £, $) as returned by the site, not a guessed label.

📦 What’s inside the data?

For every property detail page scraped, you get:

| Field | Description |
|---|---|
| `url` | Canonical advert URL on Green Acres |
| `address_country` | Country from the listing (e.g. from schema.org address) |
| `address_locality` | City / area label (e.g. neighbourhood or town) |
| `surface_area` | Habitable surface as float (e.g. m²), parsed from the main characteristics |
| `land_area` | Land size as shown (string), when present |
| `rooms` | Number of rooms as integer, when present |
| `bedrooms` | Number of bedrooms as integer, when present |
| `additional_features` | Extra bullet lines from “main features” text (excluding duplicates of the icon row) |
| `agency_name` | Agency or seller name |
| `agency_kind` | Role line (e.g. “Agency”) when shown |
| `agency_address` | Agency address as a single string (multi-line where applicable) |
| `agency_reference` | Listing reference when shown |
| `agency_mandate_number` | Mandate number when shown |
| `agency_fees` | Fee text when the agency block includes a “Fees” row |
| `price_per_m2` | Price per m² as float, when present in the agency details |
| `view_count` | View count from the popularity block, when parsed |
| `published_date` | First-seen date as ISO 8601 UTC timestamp string, when parsed from popularity |
| `contact_phone` | Agency / listing phone for inbound calls (from the site’s call button, when shown); high-value for outreach and CRM |
| `price` | Asking price as float |
| `currency` | Currency symbol from the price block, exactly as the site renders it on the listing (e.g. €, £, $) |
| `image_url` | URL of the primary gallery image when found |
| `description` | Full visible description text from the advert body |
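If you consume the dataset from Python, a typed container can make the optional fields explicit. The sketch below models a subset of the fields in the table; the `Listing` class and its `from_record` helper are illustrative names, not part of the actor, and treating every field except `url` as optional is an assumption based on the "when present" notes above.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Listing:
    """Illustrative subset of the output fields; not the actor's own schema."""
    url: str
    price: Optional[float] = None
    currency: Optional[str] = None
    address_country: Optional[str] = None
    address_locality: Optional[str] = None
    surface_area: Optional[float] = None
    land_area: Optional[str] = None
    rooms: Optional[int] = None
    bedrooms: Optional[int] = None
    agency_name: Optional[str] = None
    contact_phone: Optional[str] = None
    published_date: Optional[str] = None  # ISO 8601 UTC string, kept as text
    description: Optional[str] = None

    @classmethod
    def from_record(cls, record: dict) -> "Listing":
        """Build a Listing from a dataset item, ignoring fields not modelled here."""
        known = {f.name for f in fields(cls)}
        return cls(**{key: value for key, value in record.items() if key in known})


# Hypothetical dataset item; the values are made up for the example.
item = {
    "url": "https://example.com/listing/1",
    "price": 250000.0,
    "currency": "€",
    "view_count": 12,  # not modelled above, so it is dropped
}
listing = Listing.from_record(item)
print(listing.price, listing.currency, listing.rooms)
```

Dataclasses do not enforce the annotated types at runtime, so add explicit checks if you need to guard against unexpected values.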

🚀 Quick start

- Open Green Acres (or a country site such as `.fr`, `.pt`, `.co.uk`) and run a search: choose region, property type, budget, or other options until you see the results you care about.

- Set `max_properties` to how many property detail pages you want in the output (the scraper loads listing cards, turns pages when it can, then opens that many adverts). Per run, this is capped between 10 and 80; the default is 50 if you leave the field on the platform default.

- Start the run from the Apify Console (or call the API) and export your dataset as JSON, CSV, or Excel.

Building your input URL (filters and sort)

The scraper does not expose Green Acres’ filters in a separate form. Instead, you define the search on the website: whatever appears in the address bar after you search is what the actor uses. That keeps the workflow identical to browsing.

- Search and narrow results in your browser—location, property type, price range, surface, bedrooms, new build, etc. Use whatever filter panels or chips the site offers for that country.

- Sort the list if you care about order (e.g. price ascending, newest first). Sorting usually updates the URL or the order of results the listing page will show; the scraper follows the same result list you see.

- When the grid of listings matches what you want to scrape, select the full URL in the address bar (`https://…`) and copy it.

- Paste that string into `input_url`:

  - Apify Console: Input tab → `input_url` field, then save and run.

  - Apify API / clients: Pass the same value in the JSON body as `"input_url"` when starting a run (e.g. `POST` to the runs endpoint with your actor ID and input payload).

If you change filters or sort later, copy the new URL again—each combination has its own link, so you always know exactly which slice of the market you are exporting.
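For the API route, the request could look roughly like the sketch below, which builds (but does not send) a `POST` to the Apify runs endpoint using only the standard library. The actor ID and token are placeholders you must replace, and `build_run_request` is a hypothetical helper name; the example URL is the default from the parameters table.

```python
import json
import urllib.request

# Apify API v2 endpoint for starting an actor run.
APIFY_RUNS_ENDPOINT = "https://api.apify.com/v2/acts/{actor_id}/runs"


def build_run_request(actor_id: str, token: str, input_url: str,
                      max_properties: int = 50) -> urllib.request.Request:
    """Prepare a POST request whose JSON body is the actor input."""
    payload = {"input_url": input_url, "max_properties": max_properties}
    return urllib.request.Request(
        APIFY_RUNS_ENDPOINT.format(actor_id=actor_id),
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


request = build_run_request(
    actor_id="YOUR_USERNAME~YOUR_ACTOR_NAME",  # placeholder: use the real actor ID
    token="YOUR_APIFY_TOKEN",                  # placeholder: your API token
    input_url="https://www.green-acres.pt/property-for-sale/funchal-municipality",
    max_properties=20,
)

# To actually start the run, send the request:
# with urllib.request.urlopen(request) as response:
#     run_info = json.load(response)
```

Building the request separately from sending it makes it easy to log or inspect the payload before spending credits on a run.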

Tech details for developers 🧑‍💻

Input Example

Output Example

Parameters

| Parameter | Type | Required | Default | Min | Max | Description |
|---|---|---|---|---|---|---|
| `input_url` | string | Yes | `https://www.green-acres.pt/property-for-sale/funchal-municipality` | — | — | Full Green Acres search results URL from your browser after you apply filters and sort (see Building your input URL). |
| `max_properties` | integer | Yes | 50 | 10 | 80 | How many property detail pages to scrape and push to the dataset in a single run. |
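Before starting a run from your own code, it can help to check the input against the limits in the table. The following is a minimal client-side sketch; `validate_input` is a hypothetical helper, and both falling back to 50 when `max_properties` is omitted and rejecting (rather than clamping) out-of-range values are assumptions about how you might want to handle it, not documented actor behaviour.

```python
from urllib.parse import urlparse

# Limits taken from the parameters table above.
MIN_PROPERTIES, MAX_PROPERTIES, DEFAULT_PROPERTIES = 10, 80, 50


def validate_input(raw: dict) -> dict:
    """Return a cleaned input dict or raise ValueError describing the problem."""
    input_url = raw.get("input_url")
    if not isinstance(input_url, str) or not input_url.strip():
        raise ValueError("input_url is required")
    input_url = input_url.strip()
    parsed = urlparse(input_url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError("input_url must be a full http(s) URL")

    # Assumption: use the table's default when the caller omits the field.
    max_properties = raw.get("max_properties", DEFAULT_PROPERTIES)
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(max_properties, bool) or not isinstance(max_properties, int):
        raise ValueError("max_properties must be an integer")
    if not MIN_PROPERTIES <= max_properties <= MAX_PROPERTIES:
        raise ValueError(
            f"max_properties must be between {MIN_PROPERTIES} and {MAX_PROPERTIES}"
        )
    return {"input_url": input_url, "max_properties": max_properties}


cleaned = validate_input(
    {"input_url": "https://www.green-acres.pt/property-for-sale/funchal-municipality"}
)
print(cleaned)
```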