1

2import { Actor, log } from 'apify';

3

4import { CheerioCrawler } from 'crawlee';

5

6

7

8

9

10

11await Actor.init();

12

13const proxyConfiguration = await Actor.createProxyConfiguration();

14

15const firstReq = {

16 url: `https://api.apify.com/v2/store?&limit=1000`,

17 label: 'PAGINATION',

18 userData: { offset: 0 },

19};

20

21interface ActorStore {

22 name: string;

23 url: string;

24}

25

26const crawler = new CheerioCrawler({

27 proxyConfiguration,

28 requestHandler: async ({ request, $, json }) => {

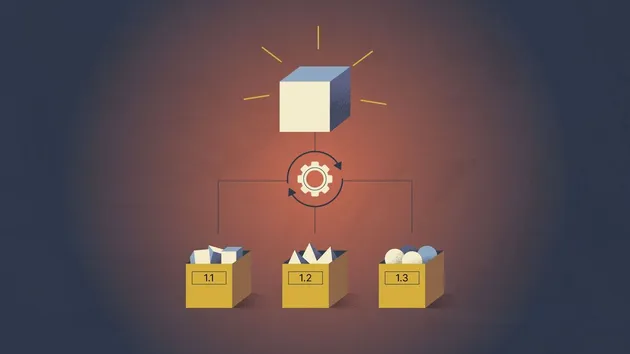

29 if (request.label === 'PAGINATION') {

30 const { items } = json.data;

31

32 log.info(`Loaded ${items.length} Actors from page ${request.url}`);

33

34 const toEnqueue = items.map((item: ActorStore) => {

35 return {

36 url: item.url,

37 label: 'DETAIL',

38 userData: { item },

39 };

40 });

41 await crawler.addRequests(toEnqueue);

42

43 if (items.length >= 1000) {

44 const offset = request.userData.offset + 1000;

45 await crawler.addRequests([{

46 url: `https://api.apify.com/v2/store?limit=1000&offset=${offset}`,

47 label: 'PAGINATION',

48 userData: { offset },

49 }]);

50 }

51 } else if (request.label === 'DETAIL') {

52 const { item } = request.userData;

53 const isOpenSource = $('[data-test-url="source-code"]').length > 0;

54

55 if (isOpenSource) {

56 await Actor.pushData({

57 ...item,

58 isOpenSource,

59 });

60 }

61

62 log.info(`${item.name} is open source: ${isOpenSource}`);

63 }

64 },

65});

66

67await crawler.run([firstReq]);

68

69

70await Actor.exit();
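
The pagination branch of the handler above can be pulled out into a small pure function, which makes the stopping condition easy to check without running the crawler. This is a minimal sketch and not part of the original Actor: `buildNextPageRequest`, `PaginationRequest`, and `PAGE_SIZE` are hypothetical names, and it assumes, as the handler does, that a page with fewer than `PAGE_SIZE` items is the last one.

```typescript
// Hypothetical helper, not part of the original Actor.
// Assumed page size, matching the `limit=1000` used in the handler.
const PAGE_SIZE = 1000;

interface PaginationRequest {
    url: string;
    label: 'PAGINATION';
    userData: { offset: number };
}

// Returns the request for the next Store page, or null when the current page
// was not full and therefore is assumed to be the last one.
function buildNextPageRequest(
    currentOffset: number,
    itemCount: number,
    pageSize: number = PAGE_SIZE,
): PaginationRequest | null {
    if (itemCount < pageSize) return null;

    const offset = currentOffset + pageSize;
    return {
        url: `https://api.apify.com/v2/store?limit=${pageSize}&offset=${offset}`,
        label: 'PAGINATION',
        userData: { offset },
    };
}
```

Inside the `PAGINATION` branch, such a helper could be called with `request.userData.offset` and `items.length`, and its result passed to `crawler.addRequests` only when it is not `null`.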