Facebook Pages Scraper

Pricing

from $6.60 / 1,000 pages

Facebook Pages Scraper

Extract basic data from multiple Facebook Pages or Profiles. Extract Facebook page details, website, email, address, messenger, likes, followers, rating, ad running status, and other public data. Export scraped data, schedule scraper via API, integrate with other tools or AI workflows.

Pricing

from $6.60 / 1,000 pages

Rating

4.9

(36)

Developer

Apify

Actor stats

421

Bookmarked

41K

Total users

2.6K

Monthly active users

17 hours

Issues response

9 hours ago

Last modified

Categories

Share

What is Facebook Pages Scraper?

It's a simple but powerful data extraction tool that allows you to scrape basic data from Facebook pages and profiles. To get that data, just insert page URLs and click "Save & Start" button. With this web scraping tool, you can go beyond the limitations of the Facebook API:

🗂 Scrape a bunch of Facebook Pages at once

👥 Scrape both Pages and Profiles (see the difference in data)

📇 Get contact details, likes, followers, ratings, and more

⚡ Get 500 pages for free in less than 2 minutes

🔓 No limitations on requests or number of calls

💾 Export Facebook data in JSON, CSV, Excel, or HTML

🔗 Export via SDKs (Python & Node.js), use API Endpoints, webhooks, or integrate with apps & AI workflows

🔍 Explore 20+ other Facebook scraping tools

What Facebook pages data can I extract?

With this Facebook API, you will be able to extract the following data from Facebook pages or profiles:

| 📝 Page title | 🔗 Page URL |

| 📮 Address | 📞 Contact details (phone number) |

| 🌐 Website | 💬 Messenger link (if available) |

| 📝 Intro (bio/description) | 📍 Number of check-ins and mentions |

| ⭐ Rating and rating count | 📅 Page creation date |

| 📚 Page Ad Library ID | 📢 Ad status |

| 👥 Number of followers | 👍 Number of likes |

| 🎨 Categories | 🌄 Profile and cover photo URL |

How do I use Facebook Pages Scraper?

Facebook Pages Scraper was designed to be easy to start with even if you've never extracted data from the web before. Here's how you can scrape Facebook data with this tool:

- Create a free Apify account using your email.

- Open Facebook Pages Scraper.

- Add one or more Facebook Page URLs to scrape its info.

- Click "Start" and wait for the data to be extracted.

- Download your data in JSON, XML, CSV, Excel, or HTML.

For a step-by-step guide on how to scrape Facebook Pages, follow our Facebook Pages Scraper tutorial 📝.

How much will scraping Facebook pages cost you?

Scraping data from Facebook pages costs $10 for every 1,000 pages, or $0.01 per page. If you're on Apify Free plan, you will be able to scrape up to 500 pages before needing to upgrade.

For more frequent or extensive data scraping, consider upgrading to the $29/month Starter plan, which can get you up to 2,900 Facebook pages per month. For scalable Facebook scraping, check out $199/month Scale or $999/month Business plan.

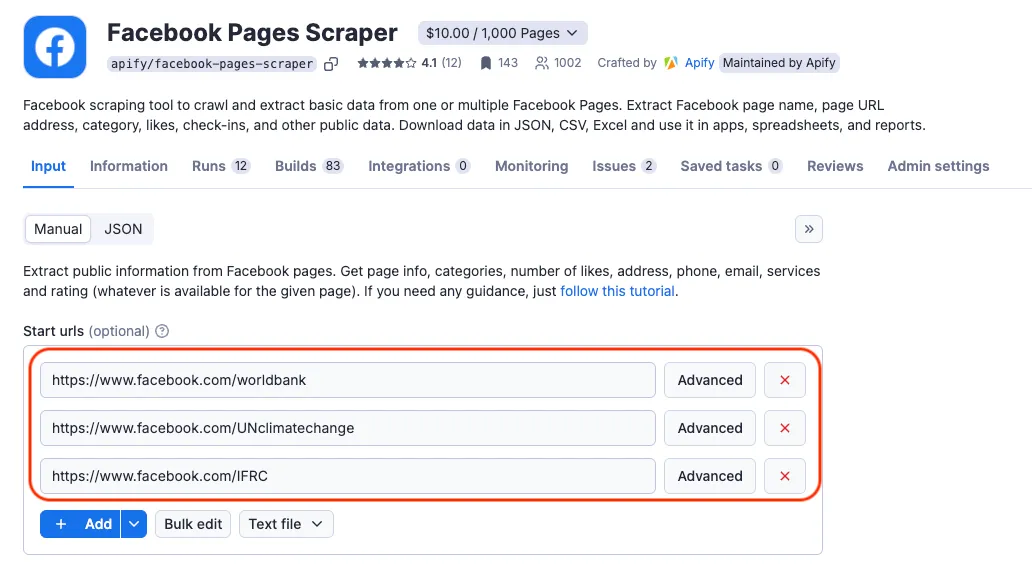

⬇️ Input

The input for Facebook Pages Scraper should be Facebook Page URLs such as https://www.facebook.com/humansofnewyork/. You can insert the page URLs one by one, paste a prepared list, or set the input via API.

Click on the input tab for a full explanation of an input example in JSON.

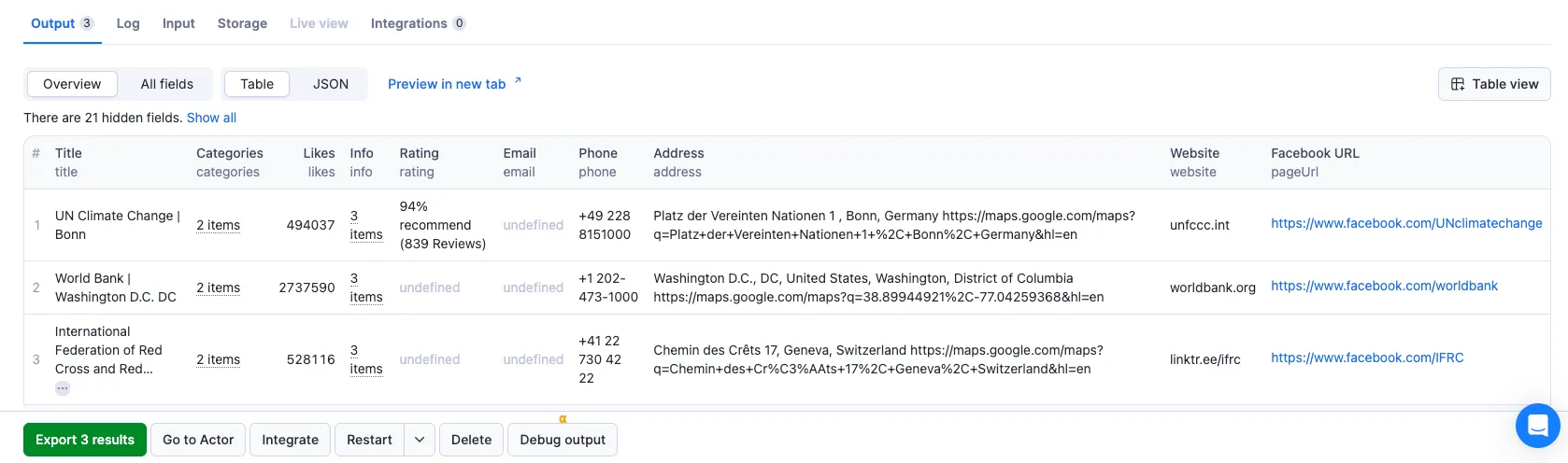

⬆️ Output

The results will be wrapped into a dataset which you can find in the Storage tab. Here's an excerpt from the dataset you'd get if you apply the input parameters above:

📘 Extracted Facebook pages sample

What is the best Facebook scraper?

You can use any of the dedicated scrapers below if you want to scrape specific Facebook data. Each of them is built particularly for the relevant Facebook scraping case be it groups, reviews, comments or ads. Feel free to browse them:

❓ FAQ

Is there a difference between scraping a Facebook page and Facebook profile?

Yes, there is. Facebook Pages are created for businesses, companies, non-profits, and other social causes. Those are the Facebook accounts that strive to provide "public-first" content and are often managed by a group of people. An easy way to distinguish between a Facebook profile and a Facebook page is that pages usually have a category of content and activity they would like to be associated with (news, software, hospitality, etc.) Facebook Pages that are particularly popular often include a blue verification badge. Facebook profiles are created for personal use.

Facebook Pages Scraper can extract information from both Facebook pages and Facebook profiles. However, the data provided by each differs. Generally, Facebook profiles contain less data. From Facebook profile, you can typically expect: facebookUrl, pageName, pageId, pageUrl, title, facebookId, coverPhotoUrl, and personalProfile (name, gender, profile photos: small, medium, large).

Why do I see less information for some Facebook pages?

Some Facebook pages have restrictions set either by Facebook or by the page owners, which can limit the information that's publicly available. Without logging into an account, access to certain details may be restricted. Logging into a Facebook account can help verify whether that's the case or whether there's an issue with the scraper itself.

Can I export Facebook Pages data using API?

Yes, you can access the extracted Facebook data through the Apify API. You’ll need an Apify account and your API token (available under Integrations settings in Console). Apify API is organized around RESTful HTTP endpoints that enable you to manage, schedule, and run Apify Actors. The API also lets you access any datasets, monitor actor performance, fetch results, create and update versions, and more. To access the API using Node.js, use the apify-client NPM package. To access the API using Python, use the apify-client PyPI package.

Click on the API tab for code examples or check out the Apify API reference docs for full detail.

Can I use Facebook Pages Scraper through an MCP Server?

With Apify API, you can use Facebook Pages Scraper within your AI workflows. You can connect to the MCP Server using clients like ClaudeDesktop and LibreChat or build your own. Here's you can se up 📘 Facebook Scraper via Model Context Protocol (MCP) server, you should:

- Start a Server-Sent Events (SSE) session to receive a

sessionId. - Send API messages using that

sessionIdto trigger the scraper. - The message starts the 📘 Facebook Pages Scraper with the provided input.

- The response should be:

Accepted.

Do I need proxies to scrape data from Facebook Pages?

You need proxies in general but you don't need to do anything extra to apply them if you run the scraper on the Apify platform. For successful Facebook scraping, we run residential proxies in the background which are included in Apify's monthly Starter plan ($29).

Can I integrate data from Facebook Pages Scraper with other apps?

Yes. Facebook Pages Scraper can be connected with almost any cloud service or web app thanks to integrations on the Apify platform. You can integrate with Make, Zapier, ChatGPT, Slack, Airbyte, GitHub, Google Sheets, Asana, Google Drive, Keboola, MCP Servers, and more.

You can also use webhooks to carry out an action whenever an event occurs, e.g., get a notification whenever Facebook Pages Scraper successfully finishes a run.

Is it legal to scrape Facebook Pages data?

Our Facebook scrapers are ethical and do not extract any private user data. They only extract what the user has chosen to share publicly. However, you should be aware that your results could contain personal data. You should not scrape personal data unless you have a legitimate reason to do so.

If you're unsure whether your reason is legitimate, consult your lawyers. You can also read our blog post on the legality of web scraping and ethical scraping.

Your feedback

We’re always working on improving the performance of our Actors. So if you’ve got any technical feedback for Facebook Pages Scraper or simply found a bug, please create an issue on the Actor’s Issues tab.