Agentic Document Extractor

Pricing: Pay per event


Extract RAG-ready chunks with provenance from PDFs, scans, images, DOCX, XLSX, PPTX, CSV, TXT, and Markdown using a local-first Apify Actor.


Rating: 0.0 (0 ratings)

Developer: Solutions Smart (maintained by Community)

Actor stats: 0 bookmarked, 2 total users, 1 monthly active user, last modified 2 months ago



Extract public documents into clean, RAG-ready chunks with provenance.

This Actor downloads documents from public URLs, converts them into normalized semantic blocks, and outputs structured chunks that are ready for vector databases, search pipelines, LLM retrieval, and downstream automation. It is designed for practical ingestion workflows where you want deterministic extraction, traceable source context, and clean, machine-readable output instead of raw OCR dumps.

Why use it

- Converts common business documents into structured chunks, not just plain text blobs

- Preserves provenance with page ranges and bounding boxes when available

- Handles mixed document sets in one run

- Exposes stable `SUMMARY` and `MANIFEST` records for orchestration and monitoring

- Works well as a preprocessing step for RAG, indexing, classification, and enrichment pipelines

🧾 Supported formats

- PDF (text-layer and scanned)

- Images: PNG, JPG, JPEG, TIFF, WEBP, GIF

- DOCX

- XLSX

- CSV

- PPTX

- TXT

- Markdown

How extraction works

- PDFs use the embedded text layer first for speed and accuracy

- Sparse or scanned PDFs can fall back to OCR depending on `ocrFallbackMode`

- Images are processed with OCR

- DOCX files are converted into headings, paragraphs, lists, and tables

- XLSX and CSV files are converted into sheet-aware table blocks

- PPTX files prefer LibreOffice-to-PDF conversion and fall back to XML text extraction when needed

- Chunking is deterministic and based on structure, page boundaries, tables, size limits, and overlap
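The chunking rules above can be illustrated with a minimal sketch. It is not the Actor's implementation: it assumes blocks arrive as already-extracted strings tagged with a page number, uses a hypothetical character budget (`max_chars`) in place of the Actor's real size limits, and a hypothetical `overlap_chars` for overlap. It never splits a block, isolates tables, and records page ranges; it does not reproduce every rule the Actor applies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    """A normalized semantic block (hypothetical shape for this sketch)."""
    text: str
    page: int
    is_table: bool = False


@dataclass(frozen=True)
class Chunk:
    text: str
    page_start: int
    page_end: int


def chunk_blocks(blocks, max_chars=1000, overlap_chars=100):
    """Greedy, deterministic chunking over semantic blocks.

    - A block is never split; a chunk is closed before a block that would overflow it.
    - Tables are emitted as chunks of their own.
    - When a text chunk is closed because of size, the last `overlap_chars`
      characters of its final block are repeated at the start of the next chunk.
    """
    chunks, parts, pages = [], [], []

    def flush():
        if parts:
            chunks.append(Chunk("\n\n".join(parts), min(pages), max(pages)))
            parts.clear()
            pages.clear()

    for block in blocks:
        if block.is_table:
            flush()
            chunks.append(Chunk(block.text, block.page, block.page))
            continue
        # Length of the current chunk text, including "\n\n" separators.
        current_len = sum(len(p) for p in parts) + 2 * max(len(parts) - 1, 0)
        if parts and current_len + 2 + len(block.text) > max_chars:
            tail = parts[-1][-overlap_chars:] if overlap_chars > 0 else ""
            tail_page = pages[-1]
            flush()
            if tail:
                parts.append(tail)
                pages.append(tail_page)
        parts.append(block.text)
        pages.append(block.page)
    flush()
    return chunks
```

The function has no randomness and depends only on its inputs, so running it twice over the same blocks yields identical chunks; that repeatability is what makes re-indexing a corpus safe.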

🎯 Typical use cases

- Preparing document corpora for RAG or vector search

- Normalizing invoices, reports, slide decks, and spreadsheets before AI processing

- Building ingestion pipelines that need both chunk text and source provenance

- Converting legacy documents into structured JSON for automation workflows

📥 Input example

Use the `documents` input to provide public file URLs, and tune the chunking and OCR settings as needed.

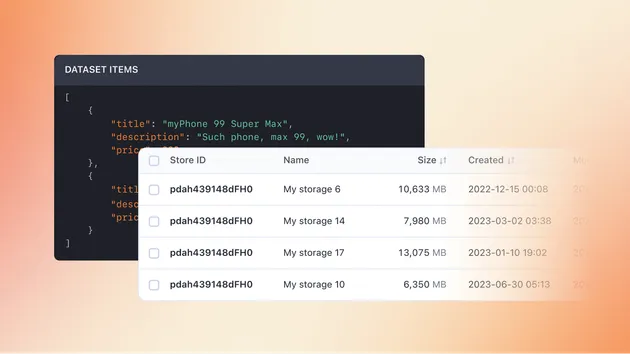
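As a text complement to the screenshot, here is a minimal Python sketch that builds an input payload. Only the `documents` and `ocrFallbackMode` field names come from this page; the `{"url": ...}` shape of each entry is a hypothetical placeholder, and no particular `ocrFallbackMode` value is assumed, so check the Actor's input schema before use. The helper also rejects non-HTTP(S) URLs and URLs with embedded credentials, mirroring the public-URLs-only limitation.

```python
import json
from urllib.parse import urlsplit


def build_input(urls, ocr_fallback_mode=None):
    """Build a run input for the Actor (hypothetical entry shape, see lead-in)."""
    documents = []
    for url in urls:
        parts = urlsplit(url)
        # v1 fetches public URLs only, so refuse other schemes and embedded credentials.
        if parts.scheme not in ("http", "https") or not parts.netloc:
            raise ValueError(f"not a public http(s) URL: {url!r}")
        if parts.username or parts.password:
            raise ValueError(f"credentials are not supported: {url!r}")
        documents.append({"url": url})  # hypothetical entry shape
    run_input = {"documents": documents}
    if ocr_fallback_mode is not None:
        # Valid values are defined by the Actor's input schema; none are assumed here.
        run_input["ocrFallbackMode"] = ocr_fallback_mode
    return run_input


if __name__ == "__main__":
    print(json.dumps(build_input(["https://example.com/report.pdf"]), indent=2))
```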

📤 Output

The Actor writes one dataset item per chunk and also stores two stable records in the default key-value store:

- `SUMMARY` for run-level metrics 📊

- `MANIFEST` for per-document status, warnings, and failure reporting 🗂️
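To pick up both records after a run, a script can read them from the run's default key-value store. This sketch assumes the official `apify-client` Python package, where `KeyValueStoreClient.get_record(key)` returns a dict with a `value` field, or `None` when the key is missing. The internal structure of `SUMMARY` and `MANIFEST` is not documented on this page, so the sketch returns them untouched.

```python
def load_run_records(kv_store):
    """Return (summary, manifest) from a run's default key-value store.

    `kv_store` is expected to behave like apify_client's KeyValueStoreClient,
    e.g. ApifyClient(token).key_value_store(run["defaultKeyValueStoreId"]).
    Missing records come back as None instead of raising.
    """
    records = {}
    for key in ("SUMMARY", "MANIFEST"):
        record = kv_store.get_record(key)
        records[key] = record["value"] if record is not None else None
    return records["SUMMARY"], records["MANIFEST"]
```

In practice you would pass `ApifyClient(token).key_value_store(run["defaultKeyValueStoreId"])`, where `run` is the dict returned by `client.actor("<actor-id>").call(run_input=...)`; the Actor ID is left as a placeholder here.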

Each chunk item includes:

- `documentId`, `sourceUrl`, `fileType`

- `chunkId`, `chunkIndex`, `chunkType`

- `text`, `markdown`

- `pageStart`, `pageEnd`

- `sectionPath`

- `bbox`

- `charCount`, `tokenEstimate`

- `language`

- `extractionMode`
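A consumer-side sketch of one chunk item as a Python dataclass. The field names come from the list above and are kept in camelCase to match the dataset; the types (for example `sectionPath` as a list and `bbox` as an untyped value) are assumptions, since this page does not specify them, and unknown extra fields are ignored. The helper groups items by `documentId` and orders them by `chunkIndex`, a common first step before embedding or re-indexing.

```python
from collections import defaultdict
from dataclasses import dataclass, fields
from typing import Any, Optional


@dataclass
class ChunkItem:
    # Field names follow the dataset item; the types are assumptions.
    documentId: str
    sourceUrl: str
    fileType: str
    chunkId: str
    chunkIndex: int
    chunkType: str
    text: str
    markdown: Optional[str] = None
    pageStart: Optional[int] = None
    pageEnd: Optional[int] = None
    sectionPath: Optional[list] = None
    bbox: Optional[Any] = None
    charCount: Optional[int] = None
    tokenEstimate: Optional[int] = None
    language: Optional[str] = None
    extractionMode: Optional[str] = None

    @classmethod
    def from_item(cls, item: dict) -> "ChunkItem":
        """Build from a raw dataset item, dropping fields this sketch does not know."""
        known = {f.name for f in fields(cls)}
        return cls(**{k: v for k, v in item.items() if k in known})


def group_by_document(items):
    """Map documentId -> list of ChunkItem sorted by chunkIndex."""
    grouped = defaultdict(list)
    for item in items:
        chunk = ChunkItem.from_item(item)
        grouped[chunk.documentId].append(chunk)
    return {doc: sorted(chunks, key=lambda c: c.chunkIndex) for doc, chunks in grouped.items()}
```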

🧩 Example dataset item

🛠️ Operational notes

- Public URLs only in v1

- Runs are deterministic and do not require an LLM provider

- OCR quality depends on the source file and available OCR tooling

- PPTX conversion uses LibreOffice when available and falls back gracefully when it is not
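The LibreOffice-first strategy for PPTX can be sketched as follows. This is an illustration, not the Actor's code, and the function names are hypothetical: it looks for the `soffice` (or `libreoffice`) binary on `PATH`, converts with `soffice --headless --convert-to pdf --outdir DIR FILE`, and otherwise reads slide text straight from the PPTX package, which is a ZIP archive whose `ppt/slides/slideN.xml` parts hold text runs as `a:t` elements in the DrawingML namespace.

```python
import re
import shutil
import subprocess
import xml.etree.ElementTree as ET
import zipfile
from pathlib import Path

# DrawingML namespace used for text runs (<a:t>) inside slide XML.
A_NS = "{http://schemas.openxmlformats.org/drawingml/2006/main}"


def pptx_to_pdf(pptx: Path, outdir: Path):
    """Convert with LibreOffice if it is installed; return the PDF path or None."""
    soffice = shutil.which("soffice") or shutil.which("libreoffice")
    if soffice is None:
        return None
    result = subprocess.run(
        [soffice, "--headless", "--convert-to", "pdf", "--outdir", str(outdir), str(pptx)],
        capture_output=True,
        timeout=120,
    )
    pdf = outdir / (pptx.stem + ".pdf")
    return pdf if result.returncode == 0 and pdf.exists() else None


def pptx_slide_texts(pptx: Path):
    """Fallback: collect text runs from each slide's XML, in numeric slide order."""
    with zipfile.ZipFile(pptx) as zf:
        names = [n for n in zf.namelist() if re.fullmatch(r"ppt/slides/slide\d+\.xml", n)]
        names.sort(key=lambda n: int(re.search(r"\d+", n).group()))
        texts = []
        for name in names:
            root = ET.fromstring(zf.read(name))
            runs = [el.text or "" for el in root.iter(A_NS + "t")]
            # Joining runs with spaces is a simplification of real paragraph layout.
            texts.append(" ".join(r for r in runs if r))
    return texts
```

Sorting slide parts numerically matters because a plain string sort would place `slide10.xml` before `slide2.xml`.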

🚧 Current limitations

- Public URLs only in v1. No cookies, auth headers, or private file fetch support.

- Advanced form semantics, checkbox state extraction, and layout-aware table reconstruction are intentionally limited.

- Scanned PDF OCR depends on rasterization tooling being available.

Price

The Actor charges only after a successful extraction, and it stops starting new documents once the charge limit for a configured event is reached.
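The billing rule can be illustrated with a small, hypothetical loop: a document is charged only when its extraction succeeds, and no new document is started once the limit is reached. `extract` and `charge` are placeholder callables, `max_charges` stands in for whatever limit is configured, and one charge per successful document is an assumed granularity. This is not the Actor's billing code and does not use the Apify SDK.

```python
def process_documents(urls, extract, charge, max_charges):
    """Process URLs in order, charging one event per successful extraction.

    Returns (results, charged, skipped). Failed documents are not charged,
    and once `charged` reaches `max_charges` the remaining URLs are skipped
    without being started.
    """
    results, charged = [], 0
    for i, url in enumerate(urls):
        if charged >= max_charges:
            return results, charged, list(urls[i:])
        try:
            chunks = extract(url)
        except Exception as exc:  # a failed document is reported, not charged
            results.append({"url": url, "status": "failed", "error": str(exc)})
            continue
        charge(url)
        charged += 1
        results.append({"url": url, "status": "ok", "chunks": chunks})
    return results, charged, []
```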