Restaurant Review Aggregator

Pricing

Pay per event

Add restaurant names and get reviews from Yelp, Google Maps, DoorDash, UberEats, Tripadvisor, and Facebook. Extract review text, place address, rating, date, and reviewer's name. Export reviews in JSON, CSV, or HTML, use the API, schedule and monitor runs, or integrate reviews data with other tools.

Rating: 4.3 (4)

Developer: Tri⟁angle

Actor stats

- 23 bookmarked

- 567 total users

- 14 monthly active users

- Last modified 4 months ago

🍽️ What is Restaurant Reviews Aggregator?

Restaurant Reviews Aggregator is designed to scrape restaurant reviews across six restaurant review sites: Tripadvisor, Yelp, Google Maps, Facebook, DoorDash, and UberEats. The scraper extracts reviews based on your search query and location, or on a place URL. It is an Actor Bundle created by combining scrapers for the six most popular restaurant review platforms (see the detailed list ⬇️).

What can you accomplish with this aggregator tool?

🍤 Extract restaurant reviews data by keywords, names or specific URLs

⭐️ Extract review text, place address, rating, date, reviewer's name in one go

🍱 Aggregate reviews from multiple platforms into one dataset

👀 Choose how many platforms to scrape reviews from — just a few or all six at once

🎯 Choose location and narrow down the keyword search to match the restaurant name

🗓 Prefilter scraped reviews by date

☄️ Get more than 1,600 results for free

🦾 Use scraped data as restaurant reviews API

⬇️ Download reviews data in Excel, CSV, JSON, XML, and other formats

💸 Is this reviews aggregator free?

Yes. Apify provides you with $5 free usage credits every month on the Apify Free plan, allowing you to scrape 1,600 restaurant reviews within those limits.

For regular data extraction needs, consider getting an Apify subscription. We recommend our $49/month Starter plan for extensive scraping.

🛎 How to use Restaurant Review Aggregator

It's easy to extract reviews across different review sites with Restaurant Reviews Aggregator. Follow these steps:

- Find Restaurant Review Aggregator on Apify Store and click the Try for free button.

- Add the search queries and location.

- Set the number of reviews to scrape for that keyword in that area.

- Alternatively, add Google Maps URLs of restaurants (as a starting point).

- Choose the target review websites you want to scrape reviews from.

- Click "Start" and wait for the data to be extracted.

- Export your reviews dataset as JSON, XML, CSV, Excel, or HTML, or fetch it through the API.

⬇️ Input

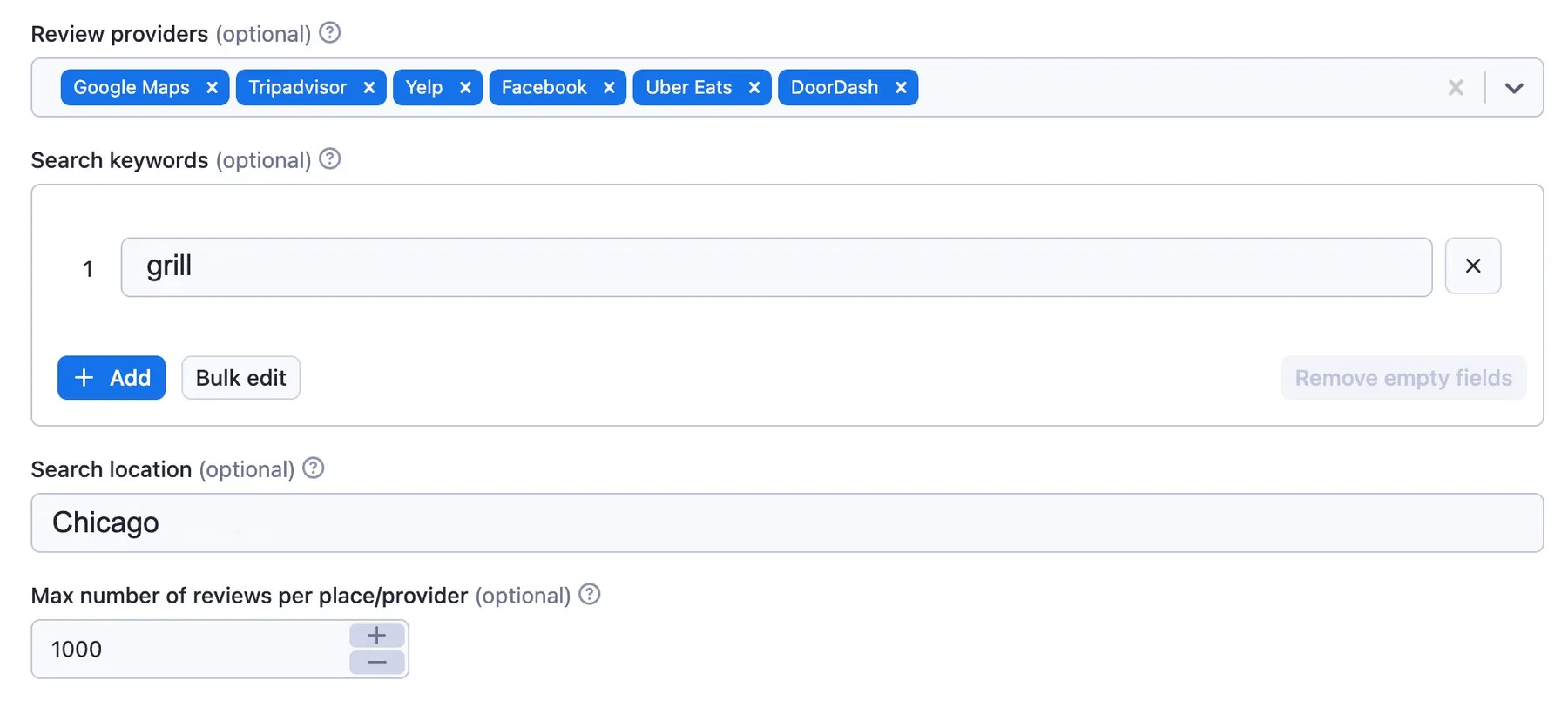

To search for restaurant reviews, give Restaurant Reviews Aggregator either search queries (or restaurant names) together with a location, or place URLs. You can add queries or URLs one by one or all at once. Here's an example of an input for the keyword "grill" in Chicago, for all six review sites, covering the past year.

You can input data by filling out fields, using JSON, or programmatically via an API. For more details on how to configure input in JSON, see the input tab.

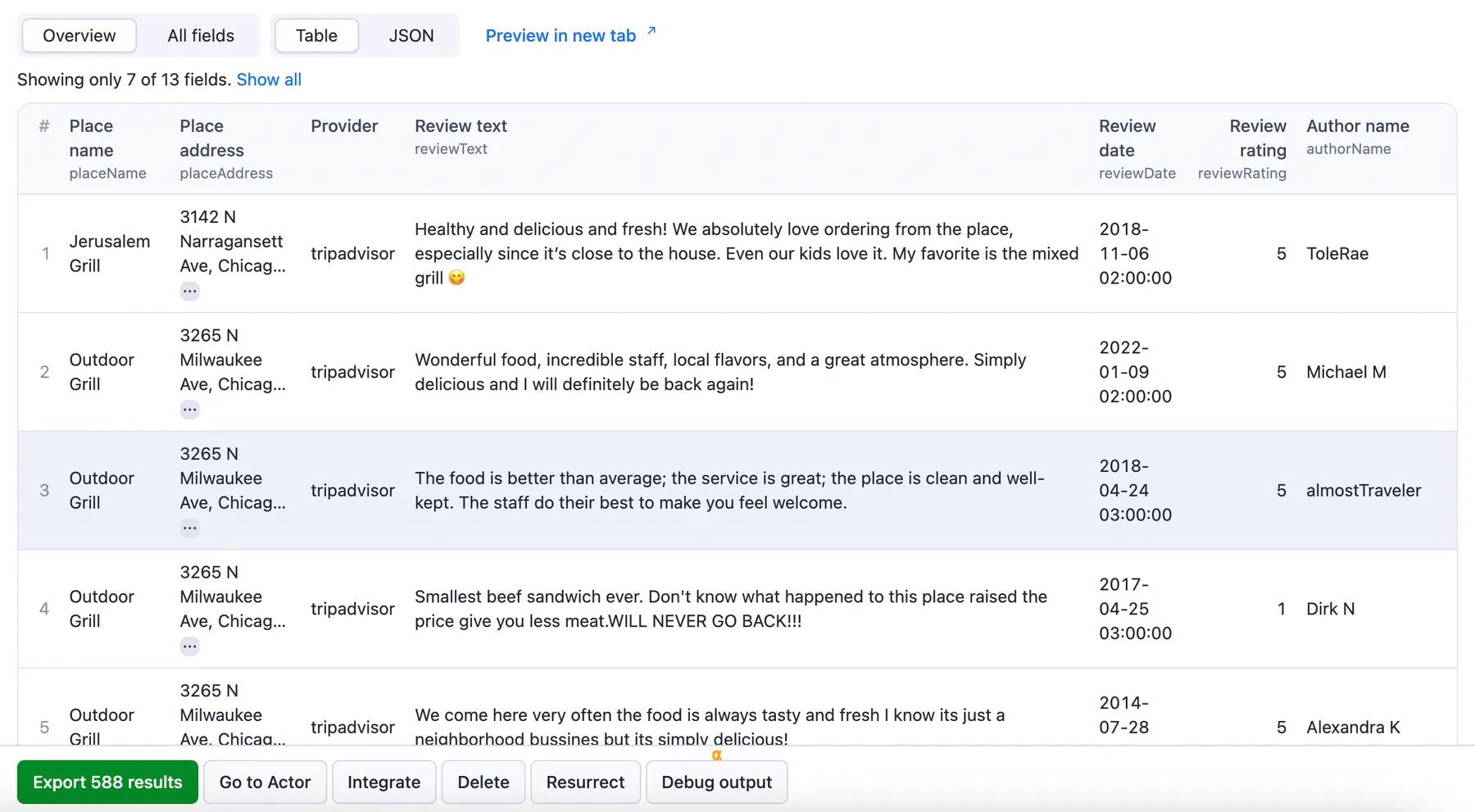

⬆️ Output sample

The results are stored in a dataset, which you can find in the Output tab. The full information about each review comes from the target review website. If a review is not available on the target review site, it is scraped from Google Maps instead. Each place is uniquely identified by its googleMapsPlaceId.
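
Because every place carries a googleMapsPlaceId, reviews from different platforms can be regrouped per restaurant after export. The snippet below is a minimal sketch of that idea, not the Actor's own code: only googleMapsPlaceId comes from the description above, while the provider and rating keys and all sample values are hypothetical placeholders.

```python
from collections import defaultdict


def group_reviews_by_place(reviews):
    """Group exported review records by their googleMapsPlaceId."""
    grouped = defaultdict(list)
    for review in reviews:
        grouped[review["googleMapsPlaceId"]].append(review)
    return dict(grouped)


# Hypothetical records: "provider" and "rating" are assumed field names,
# and the place IDs are made-up placeholders, not real output.
sample_reviews = [
    {"googleMapsPlaceId": "place-A", "provider": "yelp", "rating": 4},
    {"googleMapsPlaceId": "place-B", "provider": "tripadvisor", "rating": 5},
    {"googleMapsPlaceId": "place-A", "provider": "google-maps", "rating": 3},
]

reviews_by_place = group_reviews_by_place(sample_reviews)
for place_id, place_reviews in reviews_by_place.items():
    providers = sorted(r["provider"] for r in place_reviews)
    print(place_id, len(place_reviews), providers)
```

Check the Storage tab for the actual field names in your dataset before adapting this.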

You can preview all the fields in the Storage tab and choose the format in which to export the restaurant reviews you've extracted: JSON, CSV, Excel, or HTML table. Below is the same sample dataset in JSON:

Some additional information is saved in the key-value store, for instance:

- the external Actors' run IDs;

- the places scraped from each provider;

- the addresses' geocodes.

🍴 Want more tools for scraping restaurant reviews?

This scraper is an Actor Bundle, named so because it combines six different Actors into one. You can of course scrape each restaurant review site separately using a designated Actor. Restaurant Review Aggregator combines the results of scrapers from the following websites:

| Reviews site | Scraper |
|---|---|
| 🥂 Yelp | Yelp Scraper |
| 📍 Google Maps | Google Maps Reviews Scraper |
| 🍔 UberEats | UberEats Reviews Scraper |
| 🌴 Tripadvisor | Tripadvisor Reviews Scraper |
| Facebook | Facebook Reviews Scraper |
| 🍽️ DoorDash | DoorDash Reviews Scraper |

If you want to check out more Bundles, you might be interested in 🤔 Social Media Sentiment Analysis Tool and 📱 Social Media Finder.

❓FAQ

How does Restaurant Review Aggregator work?

First, it identifies places on Google Maps according to your input. The scraper treats the places found on Google Maps as the source of truth, so every extracted review refers to a place that exists on Google Maps. Then, the Aggregator takes those places as the new input and scrapes their reviews on the other review sites.
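
The two-stage flow described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the Aggregator's implementation: aggregate_reviews, find_places, and the stub scrapers are hypothetical names, and each scraper is modeled as a plain function that takes a place and returns a list of reviews.

```python
def aggregate_reviews(query, find_places, provider_scrapers):
    """Stage 1: resolve places. Stage 2: fan out to each review site."""
    results = []
    for place in find_places(query):  # Google Maps acts as the source of truth
        place_id = place["googleMapsPlaceId"]
        for provider, scrape in provider_scrapers.items():
            for review in scrape(place):
                # Tag each review with the place it belongs to and its origin.
                results.append(
                    {**review, "googleMapsPlaceId": place_id, "provider": provider}
                )
    return results


# Stub callables standing in for the real scrapers (hypothetical data).
def fake_find_places(query):
    return [{"googleMapsPlaceId": "place-A", "name": f"{query} house"}]


def fake_yelp(place):
    return [{"text": "Great ribs"}]


def fake_tripadvisor(place):
    return []  # no reviews found for this place on this site


reviews = aggregate_reviews(
    "grill",
    fake_find_places,
    {"yelp": fake_yelp, "tripadvisor": fake_tripadvisor},
)
print(reviews)
```

Passing the scrapers in as a dictionary mirrors the option of choosing only some of the six platforms for a run.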

How does the Aggregator extract restaurant reviews from Facebook?

Scraping restaurant reviews from Facebook takes a special approach:

- Once the Aggregator finds a place on Google Maps, it searches for that place's Facebook Page using Google Search.

- The Aggregator scrapes the Facebook URLs it found to get the necessary information from each page, such as the place address.

- The addresses from Google Maps and the Facebook Pages are then geocoded (using their coordinates on Google Maps) and compared.

- The places with the matching addresses are eventually scraped for reviews.
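
The address comparison in the steps above can be pictured as a distance check between two geocoded points. The sketch below is one plausible way to do it and is not confirmed to be the Aggregator's method: the haversine formula, the same_place helper, and the 100-metre threshold are all assumptions chosen for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def same_place(google_coords, facebook_coords, max_distance_m=100.0):
    """Treat two geocoded addresses as matching if they are close enough.

    The 100 m default is an assumed threshold, not a documented value.
    """
    return haversine_m(*google_coords, *facebook_coords) <= max_distance_m


# Two nearby points in Chicago versus a point a few kilometres away.
print(same_place((41.8781, -87.6298), (41.8782, -87.6299)))
print(same_place((41.8781, -87.6298), (41.9000, -87.6300)))
```

Comparing coordinates rather than address strings sidesteps formatting differences such as "St." versus "Street".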

Is it legal to scrape restaurant reviews?

Our scrapers are ethical and do not extract any private user data. They only extract what the user has chosen to share publicly. However, you should be aware that your results could contain personal data such as names. You should not scrape personal data unless you have a legitimate reason to do so.

If you're unsure whether your reason is legitimate, consult your lawyers. You can also read our blog post on the legality of web scraping and ethical scraping.

Can I use this Review Aggregator as a Restaurant Review API?

Yes, you can use the Apify API to access data scraped by Restaurant Review Aggregator programmatically. The API allows you to manage, schedule, and run Apify Actors, access datasets, monitor performance, get results, create and update Actor versions, and more.

To access the API using Node.js or Python, you can use the apify-client package, available on NPM and PyPI respectively. There are also API endpoints available for extracting data without a client. For detailed information and code examples, see the API tab or refer to the Apify API documentation.
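
For the client-free route, a request to the Apify API can be assembled with the Python standard library alone. The following is a hedged sketch that only builds the request and does not send it. The Actor ID, the token, and the exampleField input key are placeholders, so take the real Actor ID from the API tab and the real input keys from the input tab.

```python
import json
from urllib.parse import quote, urlencode
from urllib.request import Request

API_BASE = "https://api.apify.com/v2"


def build_run_request(actor_id, token, run_input):
    """Build (but do not send) a POST that runs an Actor and returns its dataset items."""
    query = urlencode({"format": "json"})
    url = f"{API_BASE}/acts/{quote(actor_id, safe='~')}/run-sync-get-dataset-items?{query}"
    return Request(
        url,
        data=json.dumps(run_input).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_run_request(
    actor_id="username~actor-name",       # placeholder, not the real Actor ID
    token="YOUR_APIFY_TOKEN",             # placeholder API token
    run_input={"exampleField": "grill"},  # hypothetical input key
)
print(request.get_method(), request.full_url)
```

To execute the run, pass the request to urllib.request.urlopen and parse the response body as JSON. Long runs may exceed the synchronous endpoint's time limit, in which case starting the run asynchronously and reading the dataset afterwards is the safer choice.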

Can I integrate Restaurant Reviews Aggregator with other apps?

Yes. Restaurant Reviews Aggregator can be connected with almost any cloud service or web app thanks to the integrations available on the Apify platform. You can integrate your reviews data with Zapier, Slack, Make, Airbyte, GitHub, Google Drive, LangChain, and more.

You can also use webhooks to carry out an action whenever an event occurs, e.g., get a notification whenever Restaurant Reviews Aggregator successfully finishes a run.
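
On the receiving side, a webhook handler mostly needs to check the event type and find the run's dataset. The sketch below assumes the webhook uses Apify's default payload template, in which eventType names the event and resource holds the run object. Verify these field names against the webhook documentation, since a custom payload template would change them. The dataset_id_from_webhook helper and the sample IDs are hypothetical.

```python
import json

RUN_SUCCEEDED = "ACTOR.RUN.SUCCEEDED"


def dataset_id_from_webhook(body):
    """Return the finished run's dataset ID, or None for any other event."""
    payload = json.loads(body)
    if payload.get("eventType") != RUN_SUCCEEDED:
        return None
    # Assumed layout: the run object sits under "resource".
    return payload.get("resource", {}).get("defaultDatasetId")


# Hand-written example body with made-up IDs, shaped like the assumed default payload.
example_body = json.dumps(
    {
        "eventType": "ACTOR.RUN.SUCCEEDED",
        "resource": {"id": "run-123", "defaultDatasetId": "dataset-456"},
    }
)
print(dataset_id_from_webhook(example_body))
```

The returned ID can then be used to fetch the reviews from the dataset or to trigger a notification.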

Your feedback

We’re always working on improving the performance of our Actors. So if you’ve got any technical feedback for this Review Aggregator or simply found a bug, please create an issue on the Aggregator's Issues tab.