Ai Search Visibility Tracker

Pricing

from $8.00 / 1,000 query results

Monitor how AI answer engines mention your brand across prompts and countries. Detect target/competitor mentions, extract cited domains/URLs, and export clean datasets for weekly tracking and reporting on Apify.

Rating: 0.0 (0 reviews)

Developer: Delowar Munna (Maintained by Community)

Actor stats: 0 bookmarked, 16 total users, 1 monthly active user, last modified 3 months ago

Track AI answer visibility for your brand across 500 prompts, weekly, by country — including which sources get cited and where you're missing.

- ✅ Target present/absent per query

- ✅ Competitors detected

- ✅ Cited domains + URLs extracted

What This Actor Does

- Monitors brand mentions in AI answers: Checks Perplexity and Google AI Overview (experimental) to see if your brand appears when people ask AI search engines the questions that matter to your business.

- Extracts cited sources — Captures every URL and domain the AI engine cites, so you know which sites are getting the visibility you want.

- Detects competitors — See which competitors show up in the same AI answers, and how often.

- Delivers structured, analysis-ready data — Every result is a clean JSON row with classification, match type, sources, and metadata — ready for spreadsheets, dashboards, or API workflows.

Why Not Just Ask ChatGPT?

Manually asking an AI engine one question at a time doesn't scale. This Actor solves three problems:

- Scale — Test hundreds of prompts across multiple engines and markets in a single run.

- Repeatability — Schedule weekly or daily runs and track how your visibility changes over time.

- Structured outputs — Get machine-readable rows with classification, competitor detection, and source extraction — not just a chat transcript.

Key Features

- Batch prompts — Run 1 to 500+ queries per run

- Query templates — Auto-generate prompts from seed keywords using preset packs (agency, SaaS, ecommerce, local)

- Country & language targeting — 30 countries and 19 languages supported via dropdown selects

- Competitor detection — Track which rivals appear alongside or instead of you

- Citation extraction — Full source URLs, domains, and positions

- Query groups — Label queries by group for agency-friendly reporting

- Scheduling-ready — Connect to Apify Schedules for automated recurring monitoring

Templates

Choose a template to auto-generate queries from your seed keywords, or use custom to provide your own.

| Template | Best For | Generated Patterns |
|---|---|---|
| custom | Full control | No generation; uses your queries as-is |
| agency | Broad funnel monitoring | "best {k}", "top {k} tools", "{k} vs {competitor}", "alternatives to {competitor}", "how to {k}", "best {k} for small business", "best {k} in {country}" |
| saas | Competitive SaaS visibility | "best {k} software", "{brand} alternatives", "{competitor} alternatives", "{brand} vs {competitor}", "best {k} for startups", "best {k} for agencies", "pricing comparison for {k} tools" |
| ecommerce | Product/category prompts | "best {k}", "top {k} brands", "best {k} for your needs", "{k} reviews", "where to buy {k}" |
| local | City-specific services | "best {k} in {city}", "top-rated {k} near me in {city}", "{k} cost in {city}", "emergency {k} {city}", "{k} reviews {city}" |

Placeholders

| Placeholder | Resolves To |
|---|---|
| {k} | Each seed keyword |
| {brand} | Your targetName |
| {competitor} | Each competitor (patterns skipped if none provided) |
| {country} | The country input value |
| {city} | Each location (local template only) |
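The placeholder rules above can be sketched as a small expansion function. This is an illustration, not the Actor's source: SAAS_PATTERNS is copied from the saas row of the Templates table, while expand_template, its argument names, and its deduplication of identical prompts are assumptions, so counts from this sketch may not match the Actor's own expansion (or the estimates in Pricing).

```python
from itertools import product

# Patterns copied from the saas row of the Templates table.
SAAS_PATTERNS = [
    "best {k} software",
    "{brand} alternatives",
    "{competitor} alternatives",
    "{brand} vs {competitor}",
    "best {k} for startups",
    "best {k} for agencies",
    "pricing comparison for {k} tools",
]


def expand_template(patterns, seed_keywords, brand, competitors=(), country="US", locations=()):
    """Expand placeholder patterns into prompts (hypothetical sketch, not the Actor's code)."""
    queries = []
    for pattern in patterns:
        # Patterns that need a competitor or a city are skipped when none are provided.
        if "{competitor}" in pattern and not competitors:
            continue
        if "{city}" in pattern and not locations:
            continue
        # Only iterate over the values a pattern actually uses.
        keywords = seed_keywords if "{k}" in pattern else [None]
        rivals = competitors if "{competitor}" in pattern else [None]
        cities = locations if "{city}" in pattern else [None]
        for k, rival, city in product(keywords, rivals, cities):
            # str.format ignores keyword arguments the pattern does not reference.
            query = pattern.format(k=k, brand=brand, competitor=rival, country=country, city=city)
            if query not in queries:  # drop exact duplicates, keep first-seen order
                queries.append(query)
    return queries


if __name__ == "__main__":
    for q in expand_template(SAAS_PATTERNS, ["crm"], brand="ExampleCRM", competitors=["RivalCRM"]):
        print(q)
```

ExampleCRM and RivalCRM are hypothetical names. With one keyword and one competitor the sketch yields seven prompts; with no competitors the two {competitor} patterns are dropped.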

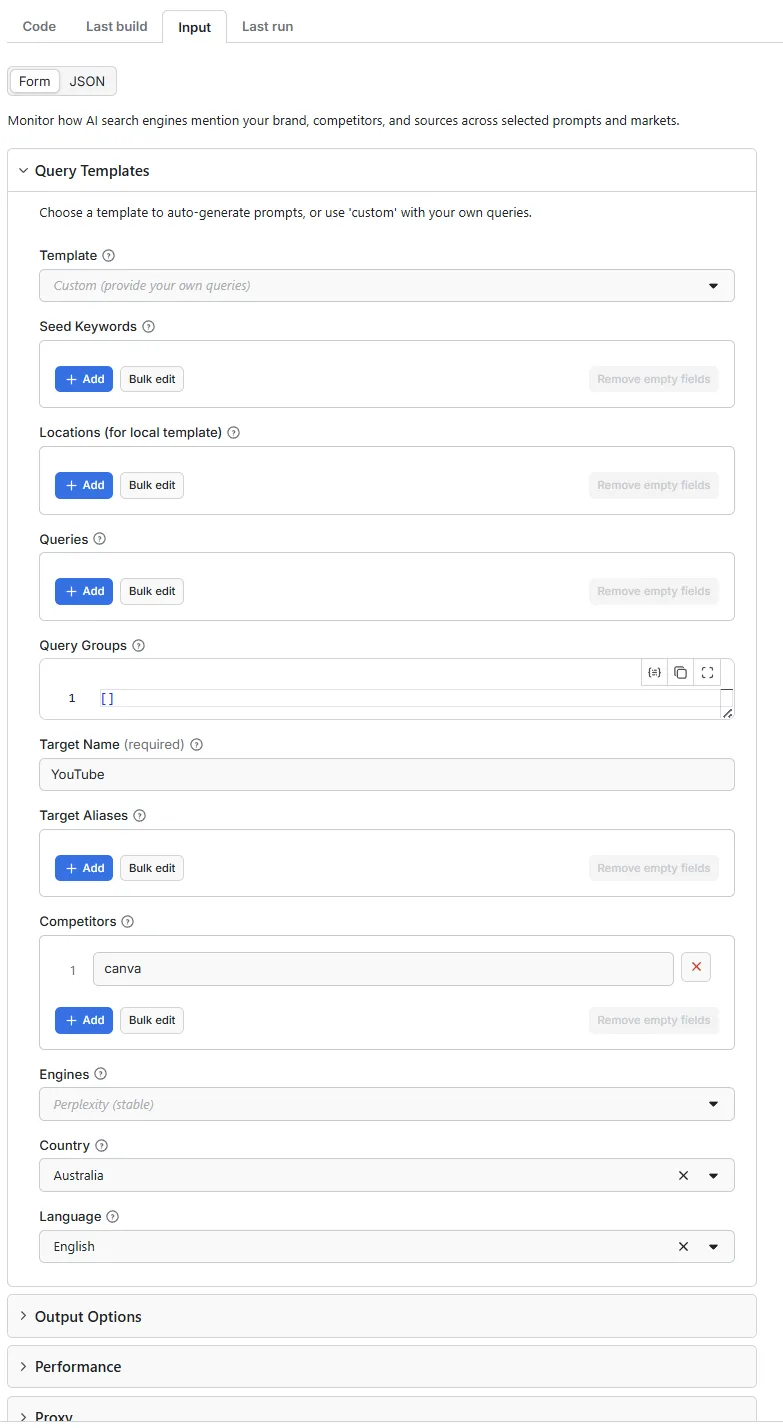

Input

Quick Start Examples

1. SaaS Template with Query Groups

Use a template with grouped queries for organized reporting. When queryGroups is provided, it takes priority over queries and template generation.
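A possible input for this case, written as a Python dict and serialized to the JSON the Actor receives. The field names come from the Input Parameters table; every value (ExampleCRM, RivalCRM, OtherCRM, the group name, the prompts) is a hypothetical placeholder.

```python
import json

# Hypothetical values; only the field names come from the Input Parameters table.
saas_grouped_input = {
    "template": "saas",
    "targetName": "ExampleCRM",
    "targetAliases": ["Example CRM"],
    "competitors": ["RivalCRM", "OtherCRM"],
    "includeCompetitors": True,  # competitor detection is off by default
    "engines": ["perplexity"],
    "country": "US",
    "language": "en",
    # queryGroups takes priority over queries and template generation.
    "queryGroups": [
        {
            "groupName": "Brand awareness",
            "queries": ["best crm software", "best crm for startups"],
        }
    ],
}

print(json.dumps(saas_grouped_input, indent=2))
```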

2. SaaS Template with Multiple Query Groups

Scale up with multiple query groups across different topics.
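A sketch of a multi-group input built from a topic-to-prompts mapping. The topics dict and all values are hypothetical; only the queryGroups shape ([{ groupName, queries[] }]) comes from the Input Parameters table.

```python
import json

# Hypothetical topic -> prompts mapping; each key becomes one query group.
topics = {
    "Brand awareness": ["best crm software", "top crm tools"],
    "Competitor comparison": ["ExampleCRM vs RivalCRM", "RivalCRM alternatives"],
    "Use cases": ["best crm for startups", "best crm for agencies"],
}

multi_group_input = {
    "template": "saas",
    "targetName": "ExampleCRM",
    "competitors": ["RivalCRM"],
    "includeCompetitors": True,
    "engines": ["perplexity"],
    "queryGroups": [
        {"groupName": name, "queries": prompts} for name, prompts in topics.items()
    ],
}

# Total prompts across groups; each is run once per engine.
total_prompts = sum(len(g["queries"]) for g in multi_group_input["queryGroups"])
print(f"{len(multi_group_input['queryGroups'])} groups, {total_prompts} prompts")
print(json.dumps(multi_group_input, indent=2))
```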

3. Custom — Manual Queries

Provide your own queries for full control.
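A minimal custom input, assuming you only need presence/absence results. The brand, alias, and prompts are hypothetical.

```python
import json

# Hypothetical prompts; with template "custom", queries are used as-is.
custom_input = {
    "template": "custom",
    "targetName": "ExampleCRM",
    "targetAliases": ["Example CRM"],
    "queries": [
        "What is the best CRM for a 10-person sales team?",
        "Which CRM tools integrate with Gmail?",
        "ExampleCRM vs RivalCRM: which is cheaper?",
    ],
    "engines": ["perplexity"],
    "country": "US",
    "language": "en",
    "includeAnswerText": False,  # presence/absence only, smaller rows
}

print(json.dumps(custom_input, indent=2))
```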

4. Local Template — City-Specific Services

Monitor AI visibility for local services across multiple cities.
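A sketch of a local-template input. The keywords, cities, and brand are hypothetical; the prompt bound assumes every local pattern (all five use {k} and {city}) is expanded once per keyword and location.

```python
import json

# Hypothetical local-services input; {city} patterns expand once per location.
local_input = {
    "template": "local",
    "seedKeywords": ["plumber", "electrician"],
    "locations": ["Austin", "Denver"],
    "targetName": "Example Plumbing Co",
    "engines": ["perplexity"],
    "country": "US",
    "language": "en",
}

# The local row of the Templates table lists 5 patterns, each using {k} and {city},
# so a full expansion is at most patterns x keywords x locations prompts.
LOCAL_PATTERN_COUNT = 5
max_prompts = LOCAL_PATTERN_COUNT * len(local_input["seedKeywords"]) * len(local_input["locations"])

print(json.dumps(local_input, indent=2))
print("up to", max_prompts, "prompts per engine")
```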

Input Parameters

| Field | Type | Required | Default | Description |
|---|---|---|---|---|
| template | string | No | "custom" | Preset prompt pack: custom, agency, saas, ecommerce, local |
| seedKeywords | array | When using template | [] | Keywords to expand into queries via template patterns |
| locations | array | For local template | [] | Cities/areas for local query generation |
| queries | array | When custom | - | Prompts/questions to test against AI engines |
| queryGroups | array | No | [] | Grouped queries for agency reporting: [{ groupName, queries[] }] |
| targetName | string | Yes | - | Brand, product, or topic to track |
| targetAliases | array | No | [] | Alternate names, spelling variants |
| competitors | array | No | [] | Competitor names/domains to detect |
| engines | array | No | ["perplexity"] | AI engines to query |
| country | string | No | "US" | Country (30 countries supported) |
| language | string | No | "en" | Language (19 languages supported) |
| includeAnswerText | boolean | No | true | Include full AI answer text |
| includeSources | boolean | No | true | Include cited sources |
| includeCompetitors | boolean | No | false | Enable competitor detection |
| deduplicateSources | boolean | No | true | Remove duplicate source URLs |
| saveScreenshot | boolean | No | false | Save page screenshots to KVS |
| saveHtml | boolean | No | false | Save page HTML to KVS |
| maxConcurrency | integer | No | 1 | Max concurrent browser pages (1–5) |
| navigationTimeoutSecs | integer | No | 60 | Page load timeout in seconds |
| maxRetries | integer | No | 2 | Retries for failed pages |
| proxyConfiguration | object | No | - | Optional proxy settings |
| debugMode | boolean | No | false | Verbose logging + debug info in output |
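Before starting a run, you might check an input against the constraints in this table. validate_input is a hypothetical helper that mirrors the Required and Default columns as read here (for example, maxConcurrency in 1–5); the Actor's own validation rules and error messages may differ.

```python
TEMPLATES = {"custom", "agency", "saas", "ecommerce", "local"}


def validate_input(inp: dict) -> list[str]:
    """Hypothetical pre-flight check mirroring the Input Parameters table."""
    errors = []
    if not inp.get("targetName"):
        errors.append("targetName is required")
    template = inp.get("template", "custom")  # default per the table
    if template not in TEMPLATES:
        errors.append(f"unknown template: {template}")
    has_groups = bool(inp.get("queryGroups"))
    has_queries = bool(inp.get("queries"))
    # Template inputs only matter when neither queryGroups nor queries is given.
    if not has_groups and not has_queries:
        if template == "custom":
            errors.append("custom template needs queries or queryGroups")
        elif not inp.get("seedKeywords"):
            errors.append(f"{template} template needs seedKeywords")
        elif template == "local" and not inp.get("locations"):
            errors.append("local template needs locations")
    concurrency = inp.get("maxConcurrency", 1)
    if not 1 <= concurrency <= 5:
        errors.append("maxConcurrency must be between 1 and 5")
    return errors
```

An empty list means the input passed these checks; it does not guarantee the Actor will accept it.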

Query Resolution Precedence

The Actor resolves queries in this order:

1. queryGroups provided → uses grouped queries, ignores queries and template generation.
2. queries provided → uses them as-is; template only affects metadata labeling.
3. Neither → generates queries from template + seedKeywords (+ locations for the local template).
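The precedence can be expressed as a short function. resolve_queries is a hypothetical name, and its expand argument stands in for template generation (any function that turns the input into a list of prompts). It returns (queryGroup, query) pairs so the group label travels with each prompt.

```python
def resolve_queries(inp, expand):
    """Sketch of the documented precedence; returns (queryGroup, query) pairs."""
    groups = inp.get("queryGroups") or []
    if groups:
        # 1. queryGroups wins; queries and template generation are ignored.
        return [(g["groupName"], q) for g in groups for q in g["queries"]]
    if inp.get("queries"):
        # 2. Plain queries are used as-is, with no group label.
        return [(None, q) for q in inp["queries"]]
    # 3. Otherwise generate from template + seedKeywords (+ locations).
    return [(None, q) for q in expand(inp)]
```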

Output

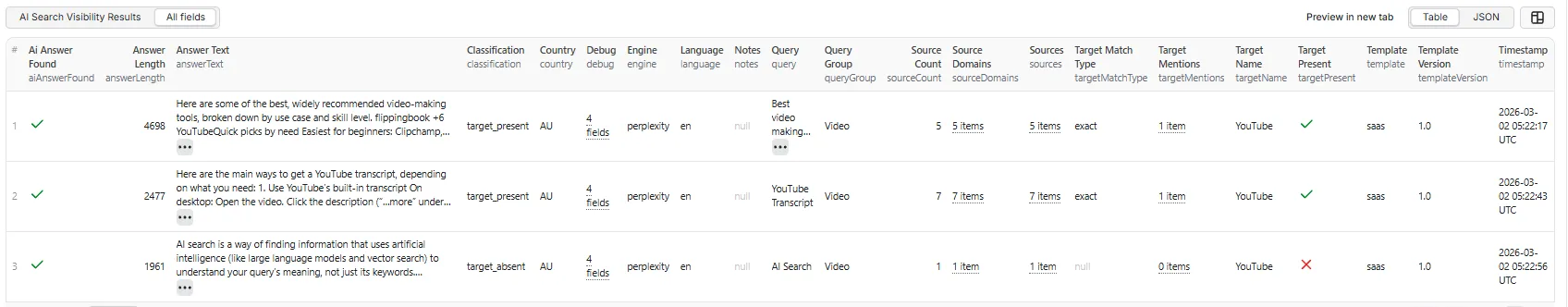

Table View

JSON Output

Each result row is a structured JSON object containing the fields below.

Full Result Row Fields

| Field | Type | Description |
|---|---|---|
| query | string | The prompt that was tested |
| engine | string | Engine used (e.g., perplexity) |
| country | string | Country code |
| language | string | Language code |
| timestamp | string | ISO 8601 timestamp |
| aiAnswerFound | boolean | Whether an AI answer block was detected |
| answerText | string | Full extracted AI answer text |
| answerLength | number | Character count of answer |
| targetName | string | Brand being tracked |
| targetPresent | boolean | Whether target was found |
| targetMatchType | string | How target was matched: exact, normalized, alias, domain |
| targetMentions | array | Specific strings that matched |
| competitorsChecked | array | Competitors that were checked |
| competitorsPresent | array | Competitors found in answer |
| classification | string | Result classification (see below) |
| sources | array | Cited source objects with domain, url, title, position |
| sourceDomains | array | Unique domains from sources |
| sourceCount | number | Number of sources found |
| template | string | Template used for this run |
| templateVersion | string | Template version (e.g., "1.0") |
| queryGroup | string | Group label if using queryGroups |
| notes | string | Warnings or error messages |
| debug | object | Debug info (when debugMode: true) |
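If you export rows for a spreadsheet, the array fields need flattening. A sketch, assuming the rows are already loaded as Python dicts (for example from the dataset's JSON export); REPORT_COLUMNS and rows_to_csv are hypothetical names, and joining lists with "; " is an arbitrary choice.

```python
import csv
import io

# Columns chosen for a weekly report; each name is a field from the table above.
REPORT_COLUMNS = ["query", "engine", "country", "queryGroup", "classification",
                  "targetPresent", "competitorsPresent", "sourceDomains", "sourceCount"]


def rows_to_csv(rows):
    """Flatten result rows (dicts) into CSV text; list fields are joined with '; '."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=REPORT_COLUMNS)
    writer.writeheader()
    for row in rows:
        # Missing fields become None, which the csv module writes as an empty cell.
        flat = {key: row.get(key) for key in REPORT_COLUMNS}
        for key in ("competitorsPresent", "sourceDomains"):
            flat[key] = "; ".join(row.get(key) or [])
        writer.writerow(flat)
    return buf.getvalue()
```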

Classification Values

| Value | Meaning |
|---|---|
| target_present | AI answer found, target brand detected |
| target_absent | AI answer found, target brand NOT detected |
| no_ai_answer_found | Page loaded but no AI answer block found |
| partial_extraction | Extraction completed with warnings |
| error | Request failed entirely |

Run Summary

Each run saves a RUN_SUMMARY to the Key-Value Store with aggregated metrics:

- visibilityRate: Fraction of successful AI answers where the target was present
- topCompetitors: Competitor mention counts (sorted)
- topSourceDomains: Most frequently cited domains (top 20)
- template, templateVersion, queryGroupCount: Template metadata
- Result counts: present, absent, no AI answer, errors
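To sanity-check a RUN_SUMMARY, or to recompute it over a filtered subset of rows, the same metrics can be derived from the result rows. This sketch assumes the field names in the Full Result Row Fields table; summarize is a hypothetical helper, the returned dict's shape (including the counts key) is an assumption, and topCompetitors here counts rows in which each competitor appears, which may differ from how the Actor counts mentions.

```python
from collections import Counter


def summarize(rows, top_n=20):
    """Recompute RUN_SUMMARY-style metrics from result rows (hypothetical sketch)."""
    counts = Counter(row["classification"] for row in rows)
    # Denominator: results minus errors minus rows with no AI answer.
    answered = len(rows) - counts["error"] - counts["no_ai_answer_found"]
    present = sum(1 for row in rows if row.get("targetPresent"))
    competitors = Counter(c for row in rows for c in row.get("competitorsPresent") or [])
    domains = Counter(d for row in rows for d in row.get("sourceDomains") or [])
    return {
        "visibilityRate": present / answered if answered else 0.0,
        "topCompetitors": competitors.most_common(),
        "topSourceDomains": domains.most_common(top_n),
        "counts": dict(counts),
    }
```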

Interpreting Results

- Visibility rate: targetPresentCount / (results - errors - noAiAnswer). A rate of 0.40 means your brand appears in 40% of AI answers where an answer was found.

- Top cited domains: The topSourceDomains list in the run summary shows which sites AI engines reference most. If your domain isn't there, consider improving content authority.

- Missing prompts: Filter for classification = "target_absent" to find the queries where your brand is NOT being recommended. These are your content gaps.

- Query groups: When using queryGroups, filter by queryGroup to compare visibility across different topic categories (e.g., "Brand awareness" vs "Competitor comparison").
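The visibility-rate formula and the target_absent filter can be applied per query group. visibility_by_group and content_gaps are hypothetical helpers over result rows loaded as dicts; rows classified as error or no_ai_answer_found are excluded from the denominator, matching the formula above.

```python
from collections import defaultdict


def visibility_by_group(rows):
    """Per-queryGroup visibility rate, excluding error and no-answer rows."""
    answered = defaultdict(int)
    present = defaultdict(int)
    for row in rows:
        if row["classification"] in ("error", "no_ai_answer_found"):
            continue
        group = row.get("queryGroup") or "(ungrouped)"
        answered[group] += 1
        present[group] += bool(row.get("targetPresent"))
    return {group: present[group] / answered[group] for group in answered}


def content_gaps(rows):
    """Queries where an AI answer was found but the target brand was not."""
    return [row["query"] for row in rows if row["classification"] == "target_absent"]
```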

Pricing

This Actor uses pay-per-event pricing: 1 event per query-engine pair processed (event name: query-result).

| Scenario | Events |
|---|---|
| 10 queries × 1 engine | 10 |
| 10 queries × 2 engines | 20 |
| SaaS template, 3 keywords, 2 competitors, 1 engine | ~21 |
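The event arithmetic is simple enough to script when planning a schedule. This sketch uses the listed starting price of $8.00 per 1,000 query results; estimate_cost is a hypothetical helper, and your actual per-event rate may differ, so treat the dollar figure as an estimate.

```python
PRICE_PER_1000_RESULTS = 8.00  # listed starting price: "from $8.00 / 1,000 query results"


def estimate_cost(num_queries, num_engines, price_per_1000=PRICE_PER_1000_RESULTS):
    """One query-result event per query-engine pair, priced per 1,000 events."""
    events = num_queries * num_engines
    return events, events * price_per_1000 / 1000


print(estimate_cost(10, 2))
```

For example, 500 prompts on one engine is 500 events, about $4.00 at that rate; a weekly schedule repeats that cost every run.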

Cost tips:

- Start with 1 engine (Perplexity) to validate prompts before adding more

- Use fewer seed keywords during testing

- Set includeAnswerText: false if you only need presence/absence classification (reduces storage)

Limitations

- Engine availability may vary — AI search engines can change their page structure or add bot detection at any time. The Actor includes retry logic, but some requests may still fail.

- Experimental engines — Engines labeled "experimental" (e.g., Google AI) have lower extraction reliability due to inconsistent AI Overview availability and aggressive bot detection.

- No LLM-based extraction — Answers are extracted via DOM selectors, not by calling an LLM API. This keeps costs predictable but means extraction depends on page structure.

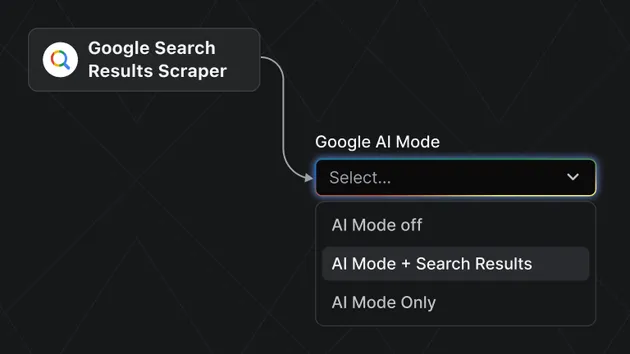

Engine Support

| Engine | Key | Status | Notes |
|---|---|---|---|
| Perplexity | perplexity | Stable | Primary engine, reliable extraction |
| Google AI Overview | google_ai | Experimental | Inconsistent availability, aggressive bot detection |

Proxy

The Actor does not bundle proxies. If you need proxy support:

- Provide your own proxy configuration via the proxyConfiguration input

- Residential proxies are recommended for Google AI to reduce blocking
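A sketch of an input that supplies your own proxies for the google_ai engine. It assumes the Actor accepts Apify's standard proxy input object (useApifyProxy plus proxyUrls); the proxy URL, credentials, and other values are placeholders.

```python
import json

# Assumed shape: Apify's standard proxy input object with custom proxy URLs.
# The URL below is a placeholder; substitute your own (ideally residential) proxy.
google_input = {
    "targetName": "ExampleCRM",
    "queries": ["best crm software"],
    "engines": ["google_ai"],
    "maxRetries": 2,
    "proxyConfiguration": {
        "useApifyProxy": False,
        "proxyUrls": ["http://user:password@proxy.example.com:8000"],
    },
}

print(json.dumps(google_input, indent=2))
```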