Indeed Scraper

Pricing

from $3.00 / 1,000 job listings

Scrape jobs posted on Indeed. Get detailed information from this job portal about saved and sponsored jobs. Narrow the search by location and get output attributes such as position, location, and description.

What does Indeed Scraper do?

Indeed Scraper allows you to extract job data from Indeed's job listings: job title, description, company name, location, salary, ratings, employment type, and more. To get that data, just type in the job title and location and click the "Save & Start" button. Alternatively, provide search URLs or a URL for scraping a company's jobs in the format https://www.indeed.com/cmp/Google/jobs

What job listing data can I extract?

With this Indeed API, you will be able to extract the following data from Indeed:

| 🧑‍💼 Job title | ⏱ Time of posting |
| 📃 Job description | 🔗 URLs |
| ⭐️ Reviews and ratings | 📍 Company name and location |
| 💵 Salary | 🕐 Employment type |

Can I use the Indeed API to scrape Indeed job postings?

As of June 2023, Indeed provides a range of APIs for developers at no cost. However, it appears that these APIs are primarily geared towards the hiring side of the platform. While they can be valuable for integrating Indeed into applicant tracking systems, tracking applicant conversions, or scheduling interviews, they are not suitable for job searching purposes.

Previously, the Publisher Jobs API (Get Job and Job Search) was available and geared specifically toward job searching, but it has been deprecated. The Job Search API, in particular, allowed users to gather data such as position names, company names, locations, time of posting, and job descriptions. This change has led users to seek out alternatives to Indeed APIs, such as this Indeed Scraper.

Why scrape Indeed?

🕵️ Conduct job market research or analysis by salary, rating, newness, etc.

✨ Monitor hiring trends for skills and technology gaps

🗂 Create a custom database for available positions

📩 Automate the job search to speed up and simplify the process

💸 Salary benchmarking

🤺 Competition tracking

How do I use Indeed Scraper?

Indeed Scraper was designed for an easy start even if you've never extracted data from the web before. Here's how you can scrape Indeed job data with this tool:

- Create a free Apify account using your email.

- Open Indeed Scraper.

- Enter a job title and location, or add one or more job search URLs.

- Click "Start" and wait for the data to be extracted.

- Download your data in JSON, XML, CSV, Excel, or HTML.

If you need guidance on how to run the scraper, you can follow our step-by-step tutorial or video guide on YouTube.

Input

The input for Indeed Scraper should be a job position, a location, and the number of results. For a fuller explanation of the input in JSON, head over to the Input tab.

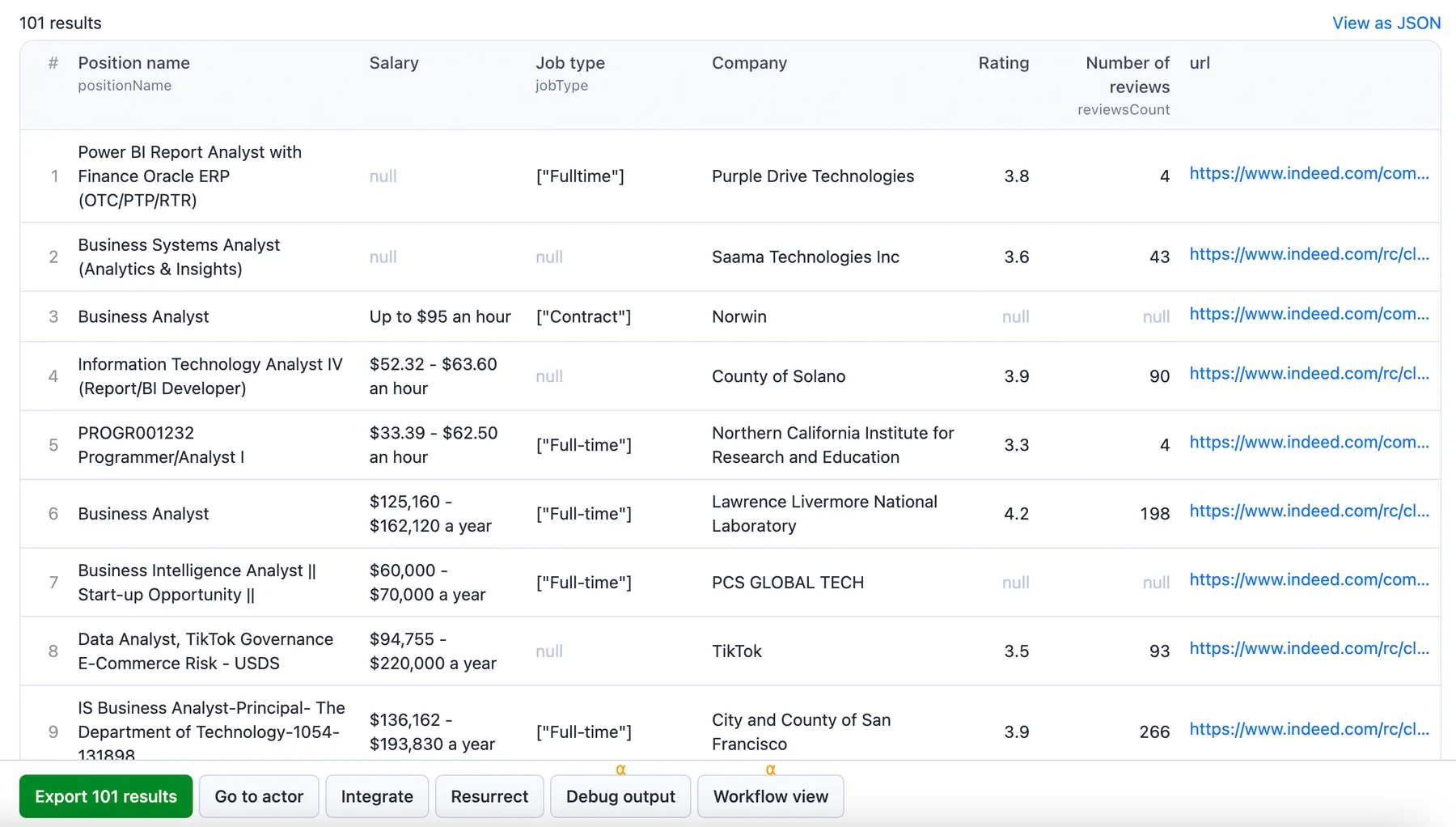
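As a rough illustration of the JSON input described above, here is a minimal Python sketch that assembles the three values into a dictionary. The key names `position`, `location`, and `maxItems` are assumptions made for this example and are not confirmed by this page, so check the Input tab for the actual schema before relying on them.

```python
import json


def build_run_input(position: str, location: str, max_items: int) -> dict:
    """Assemble a run input from a job position, a location, and a result count.

    The key names below are assumptions for illustration only;
    the Actor's Input tab is the authoritative reference.
    """
    if not position.strip():
        raise ValueError("position must not be empty")
    if max_items < 1:
        raise ValueError("max_items must be at least 1")
    return {
        "position": position.strip(),  # assumed key for the job title
        "location": location.strip(),  # assumed key for the search location
        "maxItems": max_items,         # assumed key for the number of results
    }


if __name__ == "__main__":
    # Print the input as JSON, ready to paste into a JSON input editor.
    print(json.dumps(build_run_input("web developer", "San Francisco", 50), indent=2))
```

Validating the values before starting a run is a small design choice that avoids paying for a run that was bound to fail on empty input.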

Output sample

The results will be stored in a dataset, which you can find in the Storage tab.

You can choose the format in which to download your Indeed data: JSON, JSONL, Excel spreadsheet, HTML table, CSV, or XML.
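The download formats listed above can also be requested programmatically. The sketch below builds a URL for the Apify API's dataset items endpoint with a `format` query parameter. The helper name `dataset_export_url` and the dataset ID are hypothetical, and access to a private dataset may additionally require your API token.

```python
from urllib.parse import urlencode

API_BASE = "https://api.apify.com/v2"

# Format values matching the download options named in this section.
EXPORT_FORMATS = {"json", "jsonl", "csv", "xlsx", "html", "xml"}


def dataset_export_url(dataset_id: str, fmt: str = "json", limit=None) -> str:
    """Return a dataset items URL for the requested export format."""
    fmt = fmt.lower()
    if fmt not in EXPORT_FORMATS:
        raise ValueError(f"unsupported format: {fmt}")
    params = {"format": fmt}
    if limit is not None:
        params["limit"] = limit  # cap the number of returned items
    return f"{API_BASE}/datasets/{dataset_id}/items?{urlencode(params)}"


if __name__ == "__main__":
    # "YOUR_DATASET_ID" is a placeholder; use the ID shown in the Storage tab.
    print(dataset_export_url("YOUR_DATASET_ID", "csv", limit=100))
```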

How many results can you scrape with Indeed Scraper?

Indeed Scraper can return over 1,000 results on average, at least under favorable conditions.

Keep in mind that scraping Indeed is dynamic and subject to change, so there is no single number that fits every use case. The maximum number of results may vary depending on the complexity of the input, location, and other factors.

While we regularly run Actor tests to keep the benchmarks in check, the results may also fluctuate without our knowledge. The best way to know for sure for your particular use case is to do a test run yourself.

How much will scraping Indeed cost you?

When it comes to scraping, it can be challenging to estimate the resources needed to extract data as use cases may vary significantly. That's why the best course of action is to run a test scrape with a small sample of input data and limited output. You’ll get your price per scrape, which you’ll then multiply by the number of scrapes you intend to do.

Watch this video for a few helpful tips. And don't forget that choosing a higher plan will save you money in the long run.
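The estimate described above is simple arithmetic, sketched here in Python. The first helper multiplies the cost of one test run by the number of planned runs. The second applies the "from $3.00 / 1,000 job listings" starting price quoted on this page. Both function names are made up for this example, and the sample figures are illustrative rather than measured.

```python
def estimate_total_cost(cost_per_test_run: float, planned_runs: int) -> float:
    """Multiply the observed cost of one test run by the number of planned runs."""
    return round(cost_per_test_run * planned_runs, 2)


def estimate_listing_cost(listings: int, price_per_1000: float = 3.00) -> float:
    """Estimate cost from a per-1,000-listings price (the default is the starting price)."""
    return round(listings * price_per_1000 / 1000, 2)


if __name__ == "__main__":
    # Illustrative numbers only: a test run that cost $0.15, repeated 40 times.
    print(estimate_total_cost(0.15, 40))
    # 2,500 listings at the quoted starting price.
    print(estimate_listing_cost(2500))
```

Because the quoted price is a starting price, treat the second figure as a lower bound until a test run confirms your actual rate.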

Want to scrape Glassdoor or Upwork?

If you want to extract job listing data from sites other than Indeed, you can use one of these specialized scrapers:

| 📗 Upwork Scraper | 🔍 Google Jobs Scraper |
| 🚪 Glassdoor Scraper | 🧢 Crunchbase.com Scraper |
| 💼 Clutch.co Scraper | 👔 LinkedIn Company Search Scraper |

Integrations and Indeed Scraper

Indeed Scraper can be connected with almost any cloud service or web app thanks to integrations on the Apify platform. You can integrate with Make, Zapier, Slack, Airbyte, GitHub, Google Sheets, Google Drive, and more.

You can also use webhooks to carry out an action whenever an event occurs, e.g., get a notification whenever Indeed Scraper successfully finishes a run.
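To make the notification example concrete, here is a minimal sketch of the receiving side: a function that takes the JSON body of a webhook request and produces a message when a run has succeeded. It assumes the default Apify webhook payload, with an `eventType` field and a `resource` object describing the run. If you customize the payload template, the field names will differ. The function name `notification_for` is hypothetical.

```python
import json


def notification_for(payload_json: str):
    """Return a notification message for a succeeded run, or None for other events.

    Assumes the default webhook payload shape (eventType + resource).
    """
    payload = json.loads(payload_json)
    if payload.get("eventType") != "ACTOR.RUN.SUCCEEDED":
        return None
    resource = payload.get("resource") or {}
    run_id = resource.get("id", "unknown")
    dataset_id = resource.get("defaultDatasetId", "unknown")
    return f"Indeed Scraper run {run_id} finished; results are in dataset {dataset_id}."


if __name__ == "__main__":
    # A hand-written sample body; the IDs are placeholders.
    sample = json.dumps({
        "eventType": "ACTOR.RUN.SUCCEEDED",
        "resource": {"id": "RUN_ID", "defaultDatasetId": "DATASET_ID"},
    })
    print(notification_for(sample))
```

In practice this function would sit behind an HTTP endpoint of your choice and forward the message to a chat or email service.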

Using Indeed Scraper with the Apify API

The Apify API gives you programmatic access to the Apify platform. The API is organized around RESTful HTTP endpoints that enable you to manage, schedule, and run Apify Actors. The API also lets you access any datasets, monitor Actor performance, fetch results, create and update versions, and more.

You can use the apify-client NPM package to access the API using Node.js, or the apify-client PyPI package to access the API using Python. Check out the Apify API reference docs for full details, or click on the API tab for code examples.
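As a starting point, the sketch below shows how a run might look with the apify-client PyPI package: start the Actor, wait for it to finish, and read the items from its default dataset. The Actor ID and the input key names are assumptions made for this example, so copy the real values from the API and Input tabs. The token is read from a hypothetical `APIFY_TOKEN` environment variable, and the example only runs when that variable is set.

```python
import os

# Assumption: replace with the Actor ID shown on the Actor's API tab.
ACTOR_ID = "misceres/indeed-scraper"


def fetch_jobs(client, run_input: dict, actor_id: str = ACTOR_ID) -> list:
    """Run the Actor, wait for it to finish, and return its dataset items.

    `client` is expected to be an apify_client.ApifyClient instance.
    """
    run = client.actor(actor_id).call(run_input=run_input)
    if run is None:
        raise RuntimeError("the Actor run did not return any run details")
    return list(client.dataset(run["defaultDatasetId"]).iterate_items())


if __name__ == "__main__" and os.environ.get("APIFY_TOKEN"):
    # Requires: pip install apify-client
    from apify_client import ApifyClient

    client = ApifyClient(os.environ["APIFY_TOKEN"])
    # The input keys are assumed names; check the Input tab for the real schema.
    jobs = fetch_jobs(client, {"position": "web developer", "location": "San Francisco", "maxItems": 10})
    print(f"Fetched {len(jobs)} job listings")
```

Passing the client in as an argument keeps the function easy to try out with a stub before spending credits on a real run.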

Is it legal to scrape Indeed data?

Our scrapers are ethical and do not extract any private user data. They only extract what the user has chosen to share publicly on the website. However, you should be aware that your results could contain personal data. You should not scrape personal data unless you have a legitimate reason to do so.

If you're unsure whether your reason is legitimate, consult your lawyers. You can also read our blog post on the legality of web scraping and ethical scraping.

Not your cup of tea? Build your own scraper.

Indeed Scraper doesn't do exactly what you need? You can always build your own! We have various scraper templates in Python, JavaScript, and TypeScript to get you started. Alternatively, you can write it from scratch using our open-source library Crawlee. You can keep the scraper to yourself or make it public by adding it to Apify Store (and find users for it).

Or let us know if you need a custom scraping solution.

Your feedback

We're always working on improving the performance of our Actors. So if you've got any technical feedback for Indeed Scraper or simply found a bug, please create an issue on the Actor's Issues tab in Apify Console.