Google AI Overview Tracker & AI Visibility API

Pricing

from $7.00 / 1,000 prompt checks


Google AI Overview tracker and AI search visibility API for paid weekly SEO, GEO, AEO, and LLM visibility reports. Check prompt packs, extract AIO status. Guide: https://konabayev.com/tools/google-ai-overview-tracker/?utm_source=apify_info&utm_medium=referral&utm_campaign=google-ai-overview-tracker



Developer

Tugelbay Konabayev


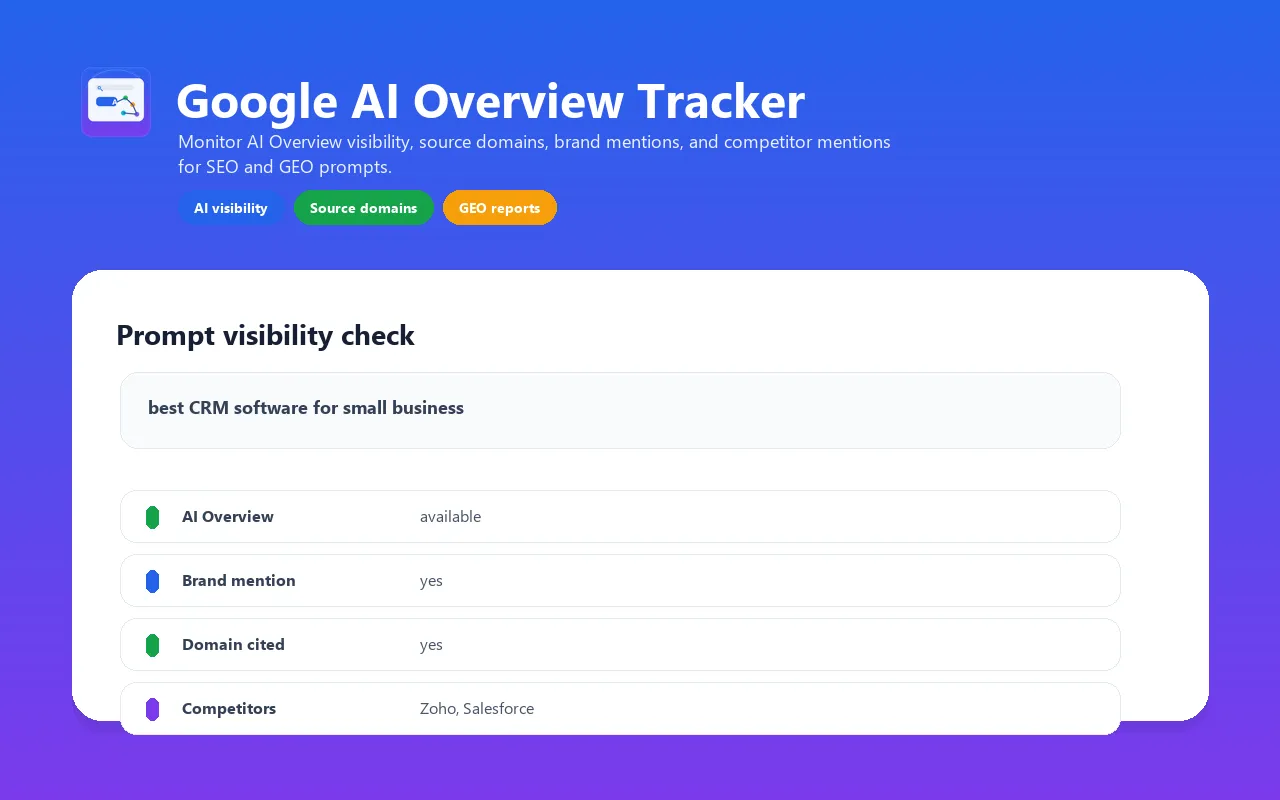

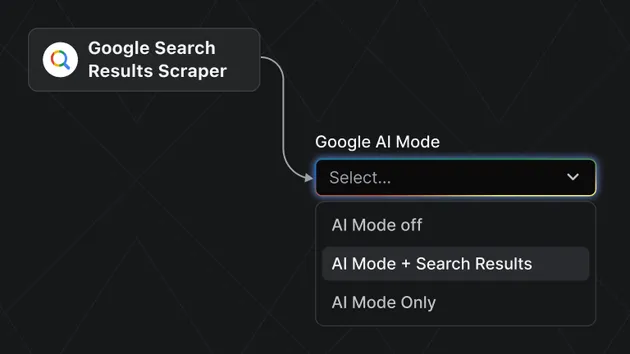

Track whether Google shows an AI Overview for your SEO, GEO, AEO (answer engine optimization), AI search visibility, and LLM visibility prompts; which external source domains appear on the rendered SERP; and whether your brand, domain, or competitors appear in the generated answer.

Use it as a lightweight Google AI Overview visibility tracker: prompt in, AIO status out, with answer text, source domains, brand mentions, domain citation checks, competitor mentions, and a clean aiOverviewAvailable=false result when Google does not show an AI Overview.

This actor is intentionally narrow. It supports Google AI Overview only. It does not claim ChatGPT, Gemini, Perplexity, Claude, Copilot, Grok, or Meta AI coverage.

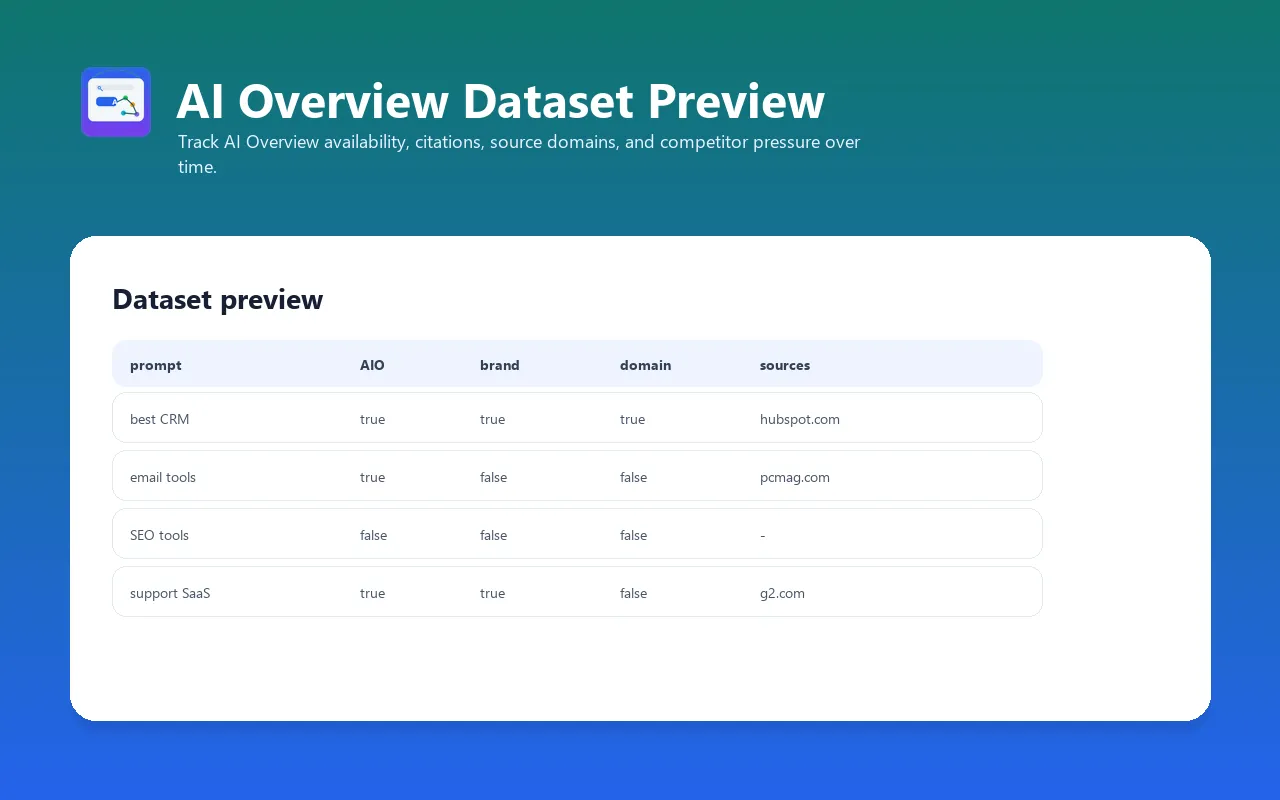

Best fit: paid weekly AI visibility monitoring for commercial prompts, client reporting, SEO/GEO dashboards, and source-domain gap analysis.

Default run: a 5-prompt monitoring pack that produces one row per prompt, with visibility status and score fields ready for dashboards.

Pricing: pay per prompt checked. Each prompt produces one dataset row and one `prompt-check` billing event.

Search Demand This Targets

Local DataForSEO baseline generated on 2026-05-08 shows commercial demand around this workflow:

| Query | Location | Monthly volume | CPC |
|---|---|---|---|
| answer engine optimization | US | 1,900 | $17.35 |
| ai overview tracker | US | 590 | $22.65 |
| ai search visibility | US | 390 | $38.33 |
| ai visibility tracker | US | 320 | $22.15 |
| llm visibility | US | 260 | $25.98 |

Use this actor when those queries map to a real reporting job: weekly prompt checks, source-domain gap analysis, client SEO/GEO reporting, or competitor visibility monitoring.

Fast Answers

What is a Google AI Overview tracker?

A Google AI Overview tracker checks specific Google search prompts and records whether an AI Overview appears, what answer text was visible, which external source domains were extracted from the rendered SERP, and whether your brand, target domain, or competitors appeared.

Can this track AI visibility for SEO and GEO reports?

Yes. This actor is built for SEO, GEO, AEO, and AI visibility reporting where you need repeatable prompt checks, brand mention tracking, domain citation checks, competitor mention detection, and CSV/API-ready datasets.

Does it track ChatGPT, Gemini, Perplexity, or Claude?

No. This actor is intentionally limited to Google AI Overview. That narrower scope keeps the output honest and avoids mixing Google SERP behavior with unrelated AI answer engines.

Starter monitoring run

Start with the 5-prompt monitoring pack below. Each prompt opens a rendered Google SERP, so this is still small enough to validate quickly while producing a useful report shape.
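A starter input could look like the sketch below, written as a Python dict so it can be passed to an API client. The names `maxPrompts`, `maxConcurrency`, `timeoutSeconds`, and `competitors` appear in this README; using `prompts`, `targetBrand`, `targetDomain`, `country`, and `language` as input keys is an assumption inferred from the output schema, and every prompt, brand, and domain value is a placeholder. Check the actor's input schema before relying on it.

```python
# Hypothetical starter input: 5 prompts with conservative settings.
# Keys marked "assumed" are inferred from the README, not confirmed.
starter_input = {
    "prompts": [  # assumed key name; prompt texts are placeholders
        "best crm for small business",
        "crm alternatives for startups",
        "what is answer engine optimization",
        "ai visibility tracker",
        "example crm vs other crm",
    ],
    "targetBrand": "ExampleCRM",       # assumed key, placeholder value
    "targetDomain": "example.com",     # assumed key, placeholder value
    "competitors": ["OtherCRM", "Other CRM"],
    "country": "US",                   # assumed key
    "language": "en",                  # assumed key
    "maxPrompts": 5,
    "maxConcurrency": 1,
    "timeoutSeconds": 30,
}


def check_input(run_input: dict) -> list[str]:
    """Return problems found against the limits stated in this README."""
    problems = []
    if not run_input.get("prompts"):
        problems.append("at least one prompt is required")
    if not 1 <= run_input.get("maxConcurrency", 1) <= 3:
        problems.append("maxConcurrency must be between 1 and 3")
    if run_input.get("maxPrompts", 5) < 1:
        problems.append("maxPrompts must be at least 1")
    return problems


print(check_input(starter_input))
```

The check only encodes limits the README states (concurrency default `1`, maximum `3`); it is a local sanity check, not the actor's own validation.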

What it returns

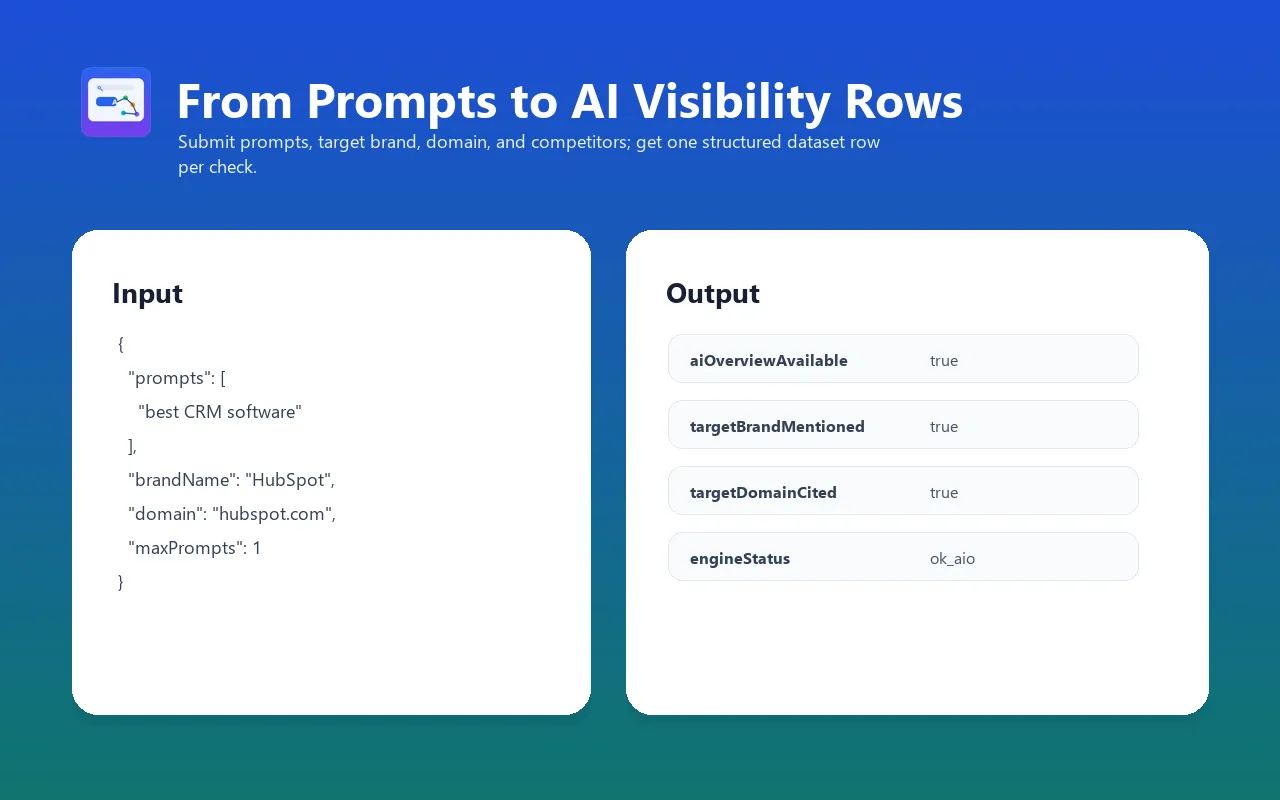

For each prompt, the actor returns one dataset item with:

- `aiOverviewAvailable` - whether Google displayed an AI Overview

- `answerText` - extracted AI Overview text when available

- `sourceLinks` and `sourceDomains` - external URLs found on the rendered SERP

- `targetBrandMentioned` - whether your brand appears in the AI Overview text

- `targetDomainCited` - whether your domain appears in source links

- `competitorMentions` - which competitor names appeared

- `visibilityStatus` and `visibilityScore` - dashboard-ready classification for owned, competitor, source-gap, no-AIO, blocked, timeout, or error outcomes

- `sourceCount` and `competitorCount` - quick numeric fields for BI tools and weekly reporting

- `engineStatus` - `ok_aio`, `ok_no_aio`, `blocked`, `timeout`, or `error`

If Google does not show an AI Overview for a prompt, the run still succeeds and returns aiOverviewAvailable=false.

Larger input example

For a quick validation, reduce maxPrompts to 1. For a useful paid report, keep the default 5 prompts or run 10-50 prompts weekly.

Paid monitoring workflow

Use the default 5-prompt pack to verify that the actor can render Google for your country/language and that the output fields fit your report. For production monitoring, use 10-50 prompts per run and schedule the task weekly.

Useful paid prompt sets usually come from:

- Google Search Console queries with commercial intent

- paid-search keywords where CPC is high

- DataForSEO or Google Ads keyword rows such as `answer engine optimization`, `ai search visibility`, and `llm visibility`

- "best X", "X alternatives", and "X vs Y" category prompts

- sales objections and buyer questions

- competitor pages and comparison pages

For agencies, create one Apify Task per client and send the dataset to Google Sheets, BigQuery, Slack, or a reporting webhook.

Output example

Output field reference

Each dataset item is one prompt check. The schema is intentionally flat enough for CSV, Google Sheets, webhooks, and BI dashboards, while still keeping source links and competitors as arrays for automation.

| Field | Type | Description |
|---|---|---|
| `prompt` | string | The search prompt/query that was checked. |
| `engine` | string | Always `google_ai_overview` for this actor. |
| `queryUrl` | string | Rendered Google Search URL with country/language parameters. |
| `answerUrl` | string | Final browser URL after redirects or consent flows. |
| `country` | string | Google country code used for the check, for example `US`. |
| `language` | string | Google interface language, for example `en`. |
| `aiOverviewAvailable` | boolean | Whether an AI Overview block was detected. |
| `answerText` | string | Extracted AI Overview text when present; empty when no AIO was shown. |
| `sourceLinks` | array | External links found on the rendered SERP after Google/link wrappers are filtered. |
| `sourceDomains` | array | Deduplicated domains from `sourceLinks`. |
| `targetBrand` | string | Brand name supplied in input. |
| `targetDomain` | string | Normalized target domain supplied in input. |
| `targetBrandMentioned` | boolean | Whether the brand name appears in the extracted AI Overview text. |
| `targetDomainCited` | boolean | Whether the target domain appears among extracted source domains. |
| `competitorMentions` | array | Competitor names found in the AI Overview text or fallback SERP text window. |
| `visibilityStatus` | string | Dashboard status such as `owned_visibility`, `competitor_visible`, `source_gap`, `no_ai_overview`, `blocked`, `timeout`, or `error`. |
| `visibilityScore` | integer | 0-100 score for quick sorting and weekly trend dashboards. |
| `sourceCount` | integer | Number of unique extracted source domains. |
| `competitorCount` | integer | Number of detected competitor mentions. |
| `engineStatus` | string | `ok_aio`, `ok_no_aio`, `blocked`, `timeout`, or `error`. |
| `error` | string | Short error message when rendering or parsing fails. |
| `blockMarkers` | array | Detected block/CAPTCHA phrases when Google blocks the session. |
| `checkedAt` | string | UTC timestamp for the check. |
| `runtimeSeconds` | number | Runtime for that prompt attempt. |
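If you consume the dataset from Python, the field reference can be mirrored as a typed structure. The sketch below is one possible way to do that; the `PromptCheck` class, the `ARRAY_FIELDS` tuple, and the `to_csv_row` helper are hypothetical names, and the `"; "` separator is an arbitrary choice for flattening array fields into CSV cells.

```python
from typing import TypedDict


class PromptCheck(TypedDict, total=False):
    """One dataset item, mirroring the field reference table."""
    prompt: str
    engine: str
    queryUrl: str
    answerUrl: str
    country: str
    language: str
    aiOverviewAvailable: bool
    answerText: str
    sourceLinks: list[str]
    sourceDomains: list[str]
    targetBrand: str
    targetDomain: str
    targetBrandMentioned: bool
    targetDomainCited: bool
    competitorMentions: list[str]
    visibilityStatus: str
    visibilityScore: int
    sourceCount: int
    competitorCount: int
    engineStatus: str
    error: str
    blockMarkers: list[str]
    checkedAt: str
    runtimeSeconds: float


# Fields documented as arrays; everything else is already a scalar.
ARRAY_FIELDS = ("sourceLinks", "sourceDomains", "competitorMentions", "blockMarkers")


def to_csv_row(item: PromptCheck, sep: str = "; ") -> dict:
    """Copy the item and join array fields so each value fits one CSV cell."""
    row = dict(item)
    for field in ARRAY_FIELDS:
        if field in row:
            row[field] = sep.join(row[field] or [])
    return row
```

Keeping the arrays in the raw dataset and flattening only at export time preserves them for automation while still producing spreadsheet-friendly rows.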

Use cases

- AI visibility tracking for target prompts

- GEO / AEO monitoring for target prompts

- AI Overview source tracking

- Brand visibility checks

- Domain citation checks

- Competitor visibility checks

- SEO source-gap research

- Weekly prompt monitoring for marketing teams

Who should use it

This actor is useful when the question is not "what rank do I have in classic organic search?" but "does Google's AI answer show up, which brands are mentioned, and which external domains appear around that AI answer?"

Typical users:

- SEO teams tracking AI Overview exposure for commercial prompts

- content teams checking whether their domain appears near answer-style SERPs

- agencies monitoring client and competitor visibility across prompt lists

- SaaS teams watching category prompts such as "best CRM for small business"

- affiliate teams checking which publisher domains are surfaced for buyer-intent queries

- internal growth teams building weekly AEO/GEO dashboards

Suggested monitoring workflow

- Start with 5-20 high-intent prompts from Search Console, paid-search terms, sales calls, or competitor pages.

- Run the actor with `maxConcurrency=1` and `timeoutSeconds=30`.

- Export the dataset to Google Sheets, BigQuery, or a webhook.

- Track these weekly fields: `aiOverviewAvailable`, `targetBrandMentioned`, `targetDomainCited`, `sourceDomains`, and `competitorMentions`.

- Split prompts into buckets:

  - AIO exists and your brand/domain appears: maintain and monitor.

  - AIO exists but competitors appear: improve topical coverage and citation-worthy pages.

  - AIO exists but only publishers/tools appear: identify source-gap opportunities.

  - No AIO: monitor less often or test related prompt variants.
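The bucket split above can be sketched as a small function over dataset rows. The bucket labels here are hypothetical and are not the actor's own `visibilityStatus` values; the order of checks (own visibility before competitors) is also an assumption about how you may want to prioritize.

```python
VALID_STATUSES = ("ok_aio", "ok_no_aio")


def bucket(row: dict) -> str:
    """Assign one dataset row to a follow-up bucket (labels are illustrative)."""
    if row.get("engineStatus") not in VALID_STATUSES:
        return "invalid"      # blocked, timeout, or error: a collection issue
    if not row.get("aiOverviewAvailable"):
        return "no_aio"       # monitor less often or test prompt variants
    if row.get("targetBrandMentioned") or row.get("targetDomainCited"):
        return "owned"        # maintain and monitor
    if row.get("competitorMentions"):
        return "competitor"   # improve coverage and citation-worthy pages
    return "source_gap"       # only publishers/tools appear


rows = [
    {"engineStatus": "ok_aio", "aiOverviewAvailable": True, "targetBrandMentioned": True},
    {"engineStatus": "ok_aio", "aiOverviewAvailable": True, "competitorMentions": ["OtherCRM"]},
    {"engineStatus": "ok_no_aio", "aiOverviewAvailable": False},
]
print([bucket(r) for r in rows])  # ['owned', 'competitor', 'no_aio']
```

If the actor's `visibilityStatus` already matches your reporting needs, use that field directly and keep a function like this only for custom rules.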

Prompt list examples

For SaaS:

For local services:

For affiliate/content sites:

Comparison with classic SERP rank tracking

| Need | Classic rank tracker | Google AI Overview Tracker |

|---|---|---|

| Track organic position | Yes | No |

| Detect whether AI Overview appears | No | Yes |

| Extract answer text | No | Yes, when AIO is present |

| Track brand mention in AI answer | No | Yes |

| Track domains surfaced near AI answer | Limited | Yes |

| Monitor competitor mentions in generated answer | No | Yes |

| Best cadence | Daily/weekly rankings | Weekly prompt visibility checks |

Use both when you need a complete search view: rank tracker for organic positions, this actor for AI Overview visibility.

API usage

Python
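A minimal sketch of calling the actor from Python, assuming the `apify-client` package and its dict-style run object (`run["defaultDatasetId"]`). The actor ID is not stated in this README, so `<ACTOR_ID>` is a placeholder, and the `prompts` input key is an assumption; `run_tracker` and `main` are hypothetical helper names.

```python
import os


def run_tracker(client, actor_id: str, run_input: dict) -> list[dict]:
    """Start the actor, wait for it to finish, and return the dataset items."""
    run = client.actor(actor_id).call(run_input=run_input)
    if run is None:
        raise RuntimeError("actor run did not return a result")
    return list(client.dataset(run["defaultDatasetId"]).iterate_items())


def main() -> None:
    # Imported here so the helper above stays usable without the package.
    from apify_client import ApifyClient  # pip install apify-client

    client = ApifyClient(os.environ["APIFY_TOKEN"])
    run_input = {
        "prompts": ["best crm for small business"],  # assumed key, placeholder prompt
        "maxPrompts": 1,
        "maxConcurrency": 1,
        "timeoutSeconds": 30,
    }
    # "<ACTOR_ID>" is a placeholder; copy the real ID from the Store page.
    for item in run_tracker(client, "<ACTOR_ID>", run_input):
        print(item.get("prompt"), item.get("engineStatus"), item.get("aiOverviewAvailable"))
```

Call `main()` after setting the `APIFY_TOKEN` environment variable. Passing the client into `run_tracker` keeps the function easy to exercise with a stub in tests.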

JavaScript

Webhook / scheduled monitoring

Create an Apify Task with your prompt list, then schedule it weekly. Use a webhook on run success to send the dataset to:

- Google Sheets

- BigQuery

- Slack

- Make/Zapier

- your internal dashboard
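On the receiving side, a webhook handler mostly needs the dataset ID of the finished run. The sketch below assumes Apify's default webhook payload, where the run object is under `resource` and carries `defaultDatasetId`; if you use a custom payload template, adjust the lookup. The function names are hypothetical.

```python
from typing import Optional


def dataset_id_from_webhook(payload: dict) -> Optional[str]:
    """Return the run's dataset ID for a successful run, else None."""
    if payload.get("eventType") != "ACTOR.RUN.SUCCEEDED":
        return None
    resource = payload.get("resource") or {}
    return resource.get("defaultDatasetId")


def dataset_items_url(dataset_id: str, fmt: str = "json") -> str:
    """Build the Apify API URL for downloading dataset items."""
    return f"https://api.apify.com/v2/datasets/{dataset_id}/items?format={fmt}"


payload = {
    "eventType": "ACTOR.RUN.SUCCEEDED",
    "resource": {"defaultDatasetId": "abc123"},  # placeholder ID
}
dataset_id = dataset_id_from_webhook(payload)
if dataset_id:
    print(dataset_items_url(dataset_id, "csv"))
```

Private datasets also need an API token on the request; how you attach it depends on your HTTP client and is left out here.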

Validation

Validation snapshot from 2026-04-25. This actor was built only after a 20-prompt stability probe:

- 20/20 clean headless Google SERP checks

- 19/20 prompts showed an AI Overview

- 1/20 prompts cleanly returned no AI Overview

- Median local probe runtime: 3.0 seconds. Apify runtime varies with proxy/session state.

Related validation file:

docs/research/google-ai-overview-tracker-validation-results.md

Limitations

- Google decides whether AI Overview appears. Some queries, countries, or sessions may return no AI Overview.

- Google SERP layouts change. The actor returns `engineStatus` and `error` fields so monitoring jobs can detect layout or block changes.

- Browser rendering is required; this is not a raw HTTP-only scraper.

- Keep concurrency low. The default is `1`, maximum is `3`.

- The default input checks 5 prompts so the first run produces a useful monitoring report. Reduce `maxPrompts` to `1` only for a tiny validation run.

Pricing

Pay per event:

- `prompt-check` - one event per prompt checked

Base price: $0.01 / prompt-check.

Cost examples

| Run size | Input | Prompt-check events | Approx. prompt cost |

|---|---|---|---|

| Tiny validation | 1 prompt | 1 | ~$0.01 |

| Default starter monitor | 5 prompts | 5 | ~$0.05 |

| Small category monitor | 10 prompts | 10 | ~$0.10 |

| Weekly SaaS prompt set | 50 prompts | 50 | ~$0.50 |

| Four weekly 50-prompt checks | 200 prompts/month | 200 | ~$2.00/month |

Actual platform cost also depends on Apify compute/runtime and your account plan. Keep prompt batches small until you know how often AIO appears for your niche.
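The cost table is simple multiplication, which a short helper makes reusable for other run sizes. This sketch uses the $0.01 base price stated above and covers prompt-check events only; compute/runtime charges and plan discounts are not modeled. `estimate_prompt_cost` is a hypothetical name.

```python
from decimal import Decimal

BASE_PRICE_PER_CHECK = Decimal("0.01")  # base price per prompt-check event


def estimate_prompt_cost(prompts_per_run: int, runs_per_month: int = 1) -> Decimal:
    """Prompt-check cost in USD: one billing event per prompt per run."""
    events = prompts_per_run * runs_per_month
    return BASE_PRICE_PER_CHECK * events


print(estimate_prompt_cost(5))      # 0.05
print(estimate_prompt_cost(50, 4))  # 2.00
```

`Decimal` avoids floating-point rounding noise when the totals are summed across many clients or months.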

Troubleshooting

| Issue | Likely cause | What to do |
|---|---|---|
| `aiOverviewAvailable=false` | Google did not show an AI Overview for that query/session/country | This is a valid result. Try related prompts or another country/language. |
| `engineStatus=blocked` | Google showed CAPTCHA, unusual-traffic, or block text | Keep `maxConcurrency=1`, use residential proxy, retry later, or reduce batch size. |
| `engineStatus=timeout` | SERP or AIO did not finish rendering within timeout | Increase `timeoutSeconds` to 45-60 for harder prompts. |
| Empty `answerText` with source links | Classic SERP links were available but no AIO text was detected | Treat as no-AIO or inspect the rendered SERP manually. |
| Competitor not detected | Name spelling differs from input | Add short brand variants to `competitors`, for example `Salesforce` and `Salesforce CRM`. |
| Target domain not cited | Domain does not appear in extracted external source links | Use `sourceDomains` to identify which domains are currently surfaced. |

FAQ

Does this track ChatGPT, Gemini, Perplexity, Claude, Copilot, Grok, or Meta AI?

No. This actor intentionally supports Google AI Overview only. Other engines require separate validation because login, browser mode, rendering behavior, and source extraction differ.

Is sourceLinks exactly the same as AI Overview citations?

No. The actor returns external source links found on the rendered SERP after Google wrapper links are cleaned and Google-owned domains are filtered. This is useful for source-domain monitoring, but it should not be treated as a guaranteed one-to-one list of AIO citation cards.
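To make the source-domain semantics concrete, here is a sketch of how links could be reduced to deduplicated domains and compared against a target domain. The actor's actual normalization and filtering rules are not documented here, so treat this as an approximation for your own post-processing; the function names and the subdomain-matching rule are assumptions.

```python
from urllib.parse import urlparse


def normalize_domain(value: str) -> str:
    """Lowercase host without a leading 'www.', from a URL or bare domain."""
    parsed = urlparse(value if "://" in value else f"//{value}")
    return (parsed.hostname or "").removeprefix("www.")


def source_domains(links: list[str]) -> list[str]:
    """Deduplicate domains while keeping first-seen order."""
    domains = (normalize_domain(link) for link in links)
    return list(dict.fromkeys(d for d in domains if d))


def domain_cited(target: str, links: list[str]) -> bool:
    """True if the target domain or one of its subdomains appears in links."""
    wanted = normalize_domain(target)
    return any(d == wanted or d.endswith("." + wanted) for d in source_domains(links))


links = [
    "https://www.example.com/pricing",
    "https://example.com/blog",
    "https://blog.other.org/post",
]
print(source_domains(links))               # ['example.com', 'blog.other.org']
print(domain_cited("Example.com", links))  # True
```

Matching on the `"." + wanted` suffix avoids false positives such as `ample.com` matching `example.com`.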

Why does the same prompt sometimes return different results?

Google personalizes and experiments with SERP layouts. Country, language, time, browser session, proxy, and Google's own AIO rollout can change whether an AI Overview appears.

Should I run this daily?

For most teams, weekly is enough. AI Overview visibility can move, but daily checks may add noise and cost before you have a stable prompt set.

Can I use this for client reporting?

Yes, if you explain the metric correctly: it tracks AI Overview availability, answer text, brand/domain visibility, and extracted external source domains for specific prompts at a specific time.

What is a good first prompt set?

Start with 10-20 prompts that already matter to the business: high-impression Search Console queries, paid search terms, "best X" category searches, sales objections, and competitor-comparison prompts.

Data quality notes

- No-AIO is a valid output, not a failed run.

- `engineStatus` should be monitored; filter out `blocked`, `timeout`, and `error` before calculating visibility rates.

- Use exact brand names and common short variants in `competitors`.

- Keep prompt wording stable over time if you want trend charts.

- Store raw datasets for repeatable historical reporting.

Reporting template

For a weekly report, group the dataset by prompt and track these columns:

| Metric | How to calculate |

|---|---|

| Visibility score | Average visibilityScore across valid rows, or trend it by prompt week over week. |

| AIO coverage | Count rows where aiOverviewAvailable=true divided by total valid rows. |

| Brand visibility | Count rows where targetBrandMentioned=true divided by rows with AIO. |

| Domain visibility | Count rows where targetDomainCited=true divided by rows with source domains. |

| Competitor pressure | Count prompts where competitorMentions is not empty. |

| Source diversity | Count unique sourceDomains across the prompt set. |

| Block/error rate | Count blocked, timeout, and error statuses divided by total rows. |

Keep the report honest by separating visibility changes from collection quality. A week with many blocked or timeout rows should be marked as a data-quality issue, not as a real visibility drop.
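The metrics in the table can be computed from raw dataset rows roughly as follows. This is a sketch under the assumption that "valid rows" means rows with `engineStatus` of `ok_aio` or `ok_no_aio`; `weekly_metrics` and the output key names are hypothetical.

```python
VALID = ("ok_aio", "ok_no_aio")


def weekly_metrics(rows: list[dict]) -> dict:
    """Aggregate one week's rows into the report metrics described above."""
    valid = [r for r in rows if r.get("engineStatus") in VALID]
    with_aio = [r for r in valid if r.get("aiOverviewAvailable")]
    with_sources = [r for r in valid if r.get("sourceDomains")]

    def ratio(numerator, denominator):
        # None signals "no data" instead of a misleading 0.
        return numerator / denominator if denominator else None

    return {
        "avg_visibility_score": ratio(sum(r.get("visibilityScore", 0) for r in valid), len(valid)),
        "aio_coverage": ratio(len(with_aio), len(valid)),
        "brand_visibility": ratio(sum(1 for r in with_aio if r.get("targetBrandMentioned")), len(with_aio)),
        "domain_visibility": ratio(sum(1 for r in with_sources if r.get("targetDomainCited")), len(with_sources)),
        "competitor_pressure": sum(1 for r in valid if r.get("competitorMentions")),
        "source_diversity": len({d for r in valid for d in (r.get("sourceDomains") or [])}),
        "block_error_rate": ratio(len(rows) - len(valid), len(rows)),
    }
```

Reporting `block_error_rate` next to the visibility metrics makes it easier to flag a week as a data-quality issue rather than a visibility drop.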

Good prompt hygiene

- Use searcher language, not internal product jargon.

- Avoid changing prompt wording every week if you need trend lines.

- Include buying-intent prompts, comparison prompts, and problem-aware prompts.

- Keep country/language stable for each tracked prompt set.

- Do not mix unrelated markets in one dashboard; separate SaaS, local, ecommerce, and affiliate prompts.

- Add competitor aliases when brand names have common abbreviations.

- Review no-AIO prompts monthly and replace prompts that never trigger useful AI Overview data.

Operational guardrails

Large prompt lists are supported, but small batches are easier to debug. A practical operating pattern is:

- run the default 5-prompt starter monitor;

- inspect `visibilityStatus`, `visibilityScore`, and `engineStatus` distribution;

- expand to 25-50 prompts;

- schedule weekly;

- alert only on valid rows, not on blocked/error rows.

If you need hundreds of prompts, split them into multiple Tasks by category, country, or client. That keeps datasets easier to inspect and reduces the chance that one transient block affects the whole report.
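Splitting a large prompt list into per-Task batches can be sketched as below. The prompt record shape (`text` plus a grouping key such as `category`) and the 50-prompt batch size are assumptions for illustration; `split_into_batches` is a hypothetical helper, not part of the actor.

```python
from collections import defaultdict


def split_into_batches(prompts: list[dict], key: str, max_size: int = 50) -> dict[str, list[list[str]]]:
    """Group prompt texts by `key`, then cut each group into batches."""
    if max_size < 1:
        raise ValueError("max_size must be at least 1")
    groups: dict[str, list[str]] = defaultdict(list)
    for prompt in prompts:
        groups[prompt[key]].append(prompt["text"])
    return {
        name: [texts[i:i + max_size] for i in range(0, len(texts), max_size)]
        for name, texts in groups.items()
    }


prompts = [
    {"text": "best crm for small business", "category": "saas"},
    {"text": "crm alternatives", "category": "saas"},
    {"text": "plumber near me cost", "category": "local"},
]
print(split_into_batches(prompts, key="category", max_size=1))
```

Each batch can then become the input of one Apify Task, so a transient block affects only that batch.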

Changelog

- 0.1.23 (2026-05-11) - switched the Store/input example to a 5-prompt paid monitoring pack and added dashboard-ready visibility status, score, source count, and competitor count fields.

- 0.1.10 (2026-04-26) - expanded README, clarified source-link semantics, and kept one-prompt first-run path.

- 0.1 (2026-04-26) - initial Google AI Overview-only MVP.