AEO Lite — Track My Brand in AI Search (3 Fields, No JSON)

Pricing

from $10.00 / 1,000 perplexity resolutions

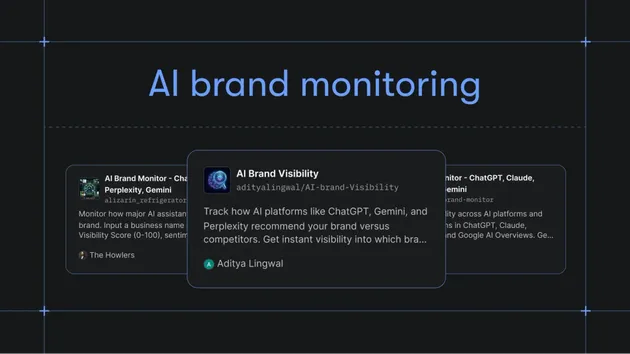

Beginner-friendly AEO Citation Monitor. Type your brand, pick your industry, click Start. Same 6-engine AI search tracking (ChatGPT, Claude, Gemini, Perplexity, Grok, Google AI Overviews) — minimal-input form for non-technical users.

Developer

Alex Lowe

Maintained by Community

AEO Lite — Track My Brand in AI Search

Type your brand name, pick your industry, click Start. The Actor runs ~25 prompts customers might ask about brands like yours across 4 fast AI engines (Perplexity, Anthropic, xAI Grok, Google AI Overviews) and shows you exactly how each engine describes you, ranks you, and cites you.

No JSON. No engineering. ~$0.26 per first run.

🎯 5-minute quickstart

1. Fill in 3 fields and click Start

The form has 3 required fields and a few optional ones:

| Field | What to enter |
|---|---|
| Brand name | The exact name customers know you as, e.g. "Clash Coach AI" or "HubSpot". |
| Industry | Pick the closest match: B2B SaaS, e-commerce, local services, agency, news/media, or fintech. |
| Competitors (optional) | One name per line. We track how each is mentioned alongside your brand. |
| Brand website (optional) | e.g. acme.com. Lets us flag when AI engines cite YOUR own pages as sources. |
| Country | Default US. Change to bias responses toward another country. |

That's it. Click Start. Cost: ~$0.26. Wall-clock: <90 seconds.

2. Watch records arrive in Storage → Dataset

While the run executes, click Storage → Dataset in the run page. You'll see records appear as each AI engine responds.

A demo run is capped at 16 records (about 4 prompts × 4 engines). Each record has the full AI response, your brand's mention count, competitor mention counts, and every URL the AI cited.

3. The 4 numbers that matter

When the run completes, look at any record:

| Field | What it tells you |
|---|---|
| brandMentions[].mentionCount | How many times this AI engine mentioned your brand in this response |
| brandMentions[].rankPosition | What position you appeared at if the AI listed multiple brands (1 = first) |
| competitorMentions[] | The same for each competitor: a direct comparison of how often you versus them get mentioned |
| citations[] | Every URL the AI cited as a source. isOwned: true means the AI cited YOUR site. |

If your brand has zero mentions across all records, see Why isn't my brand mentioned? below.
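To read those numbers across a whole run rather than one record at a time, a small script over the exported dataset can help. The following is a minimal sketch, assuming the dataset has been downloaded as a list of JSON objects and that brandMentions is a list of objects carrying mentionCount and rankPosition as described in the table. The function name summarize_brand and the output keys are illustrative, not part of the Actor.

```python
from typing import Any, Optional


def summarize_brand(records: list[dict[str, Any]]) -> dict[str, Any]:
    """Aggregate brand visibility over all records of one run.

    Assumes each record may carry a `brandMentions` list whose items have
    `mentionCount` and (optionally) `rankPosition`, per the field table.
    """
    total_mentions = 0
    records_with_mention = 0
    best_rank: Optional[int] = None

    for record in records:
        mentions = record.get("brandMentions") or []
        count = sum(m.get("mentionCount", 0) for m in mentions)
        total_mentions += count
        if count > 0:
            records_with_mention += 1
        for m in mentions:
            rank = m.get("rankPosition")
            # Lower is better: 1 means the brand was listed first.
            if rank is not None and (best_rank is None or rank < best_rank):
                best_rank = rank

    return {
        "recordCount": len(records),
        "recordsWithMention": records_with_mention,
        "totalMentions": total_mentions,
        "bestRank": best_rank,
    }
```

A result with recordsWithMention at 0 is the "zero mentions" case covered in the FAQ.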

🔍 What does a record look like?

Here's a real record from a Clash Coach AI run on Perplexity. Real data, annotated.

The "winning" record looks like: non-zero mentionCount + rankPosition ≤ 3 + at least one isOwned: true citation. That signals "the AI knows your brand, ranks you well, and pulls from your own content."
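The "winning" rule above can be expressed as a small predicate. This is a sketch under the same assumptions about record shape (brandMentions[] items with mentionCount and rankPosition, citations[] items with isOwned); the helper name is_winning_record is hypothetical.

```python
from typing import Any


def is_winning_record(record: dict[str, Any]) -> bool:
    """True when the record shows a mention ranked in the top 3
    and at least one citation of the brand's own site."""
    mentions = record.get("brandMentions") or []
    citations = record.get("citations") or []

    # Non-zero mentionCount with rankPosition <= 3 on the same mention entry.
    well_ranked = any(
        m.get("mentionCount", 0) > 0
        and m.get("rankPosition") is not None
        and m["rankPosition"] <= 3
        for m in mentions
    )
    # At least one citation flagged as the brand's own page.
    owned_citation = any(c.get("isOwned") is True for c in citations)

    return well_ranked and owned_citation
```

Filtering a dataset with this predicate gives a quick list of the prompts and engines where the brand is already doing well.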

What does this Actor do (full version)

For each prompt × engine, AEO Lite:

- Sends the prompt to the AI engine via its sanctioned API

- Parses the response for your brand's mentions (count, list rank, surrounding text)

- Captures competitor mentions in the same response

- Extracts every cited URL with owned/competitor flags

- Records cost, latency, transport, and grounding state

One record per (prompt × provider × run). The 4 fast engines (Perplexity, Anthropic, Grok, AI Overviews) cover the consumer/SMB AI search landscape. For ChatGPT and Gemini grounded coverage, use the main AEO Citation Monitor.

Pricing

Pay only for what you use:

| Event | Price |
|---|---|
| Perplexity record | $0.010 |
| Google AI Overview record | $0.015 |
| Anthropic / Grok record | $0.020 |
| Gemini record (if you add it) | $0.025 |
| OpenAI record (if you add it) | $0.075 + grounding bracket |

Default 4-engine demo: ~$0.26 for 16 records. Add OpenAI or Gemini: scales with their per-record price.
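The ~$0.26 figure follows from the table: one record per prompt per engine, so 4 prompts × ($0.010 + $0.015 + $0.020 + $0.020) = $0.26. Below is a minimal sketch of that arithmetic. The engine keys are illustrative labels rather than the Actor's input values, and OpenAI is left out because its grounding bracket is not specified here.

```python
from decimal import Decimal

# Per-record prices from the pricing table. Keys are illustrative labels.
PRICE_PER_RECORD = {
    "perplexity": Decimal("0.010"),
    "google_ai_overview": Decimal("0.015"),
    "anthropic": Decimal("0.020"),
    "grok": Decimal("0.020"),
    "gemini": Decimal("0.025"),
}

DEFAULT_ENGINES = ["perplexity", "google_ai_overview", "anthropic", "grok"]


def estimate_run_cost(prompt_count: int, engines: list[str]) -> Decimal:
    """Estimated cost in USD: one record per prompt per engine."""
    per_prompt = sum((PRICE_PER_RECORD[e] for e in engines), Decimal("0"))
    return per_prompt * prompt_count


if __name__ == "__main__":
    print(estimate_run_cost(4, DEFAULT_ENGINES))
    print(estimate_run_cost(4, DEFAULT_ENGINES + ["gemini"]))
```

Decimal is used instead of float so the sums match the listed prices exactly.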

Operator FAQ

🔧 Why isn't my brand mentioned?

If brandMentions is empty across most of your records, that is a real signal, not a bug. Three things to check:

- Spell-variant check. AI engines may say "Clash Coach" when your brand is "Clash Coach AI", or vice versa. The Competitors field is only for competitors, so do not add your own brand variants there. The Lite variant matches your brandName literally; to track variants of your own brand, use the main Actor, which exposes the brand.aliases array.

- Prompt phrasing. Different prompts surface different things. "Best coaching apps" pulls product mentions; "How do I improve at X" pulls strategy advice. The Lite Actor auto-generates ~25 prompts based on your industry, so you should see varied results. If every category returns zero, the likely cause is the next item.

- Grounding sources. Look at citations[].domain. If the AI is citing review sites and competitor blogs but never your domain, your brand isn't yet in the source pool the AI grounds against. AEO content work pays off here: get cited by the sites that show up in citations[].
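For the grounding-sources check, tallying citations[].domain across all records shows which sites the engines lean on. A minimal sketch, assuming each citation object has a domain string as referenced above; the function name cited_domains is hypothetical.

```python
from collections import Counter
from typing import Any


def cited_domains(records: list[dict[str, Any]]) -> list[tuple[str, int]]:
    """Count how often each domain appears in citations[], most cited first."""
    counts: Counter[str] = Counter()
    for record in records:
        for citation in record.get("citations") or []:
            domain = citation.get("domain")
            if domain:
                # Normalize case so "Example.com" and "example.com" merge.
                counts[domain.lower()] += 1
    return counts.most_common()
```

The domains at the top of this list are the ones worth getting cited by.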

📅 How do I track my brand weekly?

Apify Console → Schedules → New Schedule. Pick this Actor, set cron 0 9 * * 1 (Mondays 9am UTC), and use your filled-in input. Apify runs it weekly and stores each week's dataset.

For multiple brands or clients, create one Schedule per brand. Each run is billed at this Actor's pricing, so 4 brands on a weekly schedule comes to about 16 runs/month (4 brands × roughly 4 weeks).

If you want only changed records between weeks (smaller datasets, easier to spot movement), use the main Actor which exposes deltaMode: true — Lite doesn't expose this in v1.
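If you stay on Lite, two weekly datasets can also be compared locally after export. The sketch below is not the main Actor's deltaMode, only a rough stand-in. It assumes each record can be identified by a prompt field and a provider field; those two key names are guesses, so pass the real ones via key_fields after checking your dataset.

```python
from typing import Any


def mention_total(record: dict[str, Any]) -> int:
    """Sum of mentionCount over a record's brandMentions[]."""
    return sum(m.get("mentionCount", 0) for m in record.get("brandMentions") or [])


def changed_records(
    last_week: list[dict[str, Any]],
    this_week: list[dict[str, Any]],
    key_fields: tuple[str, ...] = ("prompt", "provider"),  # assumed field names
) -> list[dict[str, Any]]:
    """Return entries whose brand mention total differs between two runs."""
    previous = {
        tuple(r.get(k) for k in key_fields): mention_total(r) for r in last_week
    }
    changes = []
    for record in this_week:
        key = tuple(record.get(k) for k in key_fields)
        before = previous.get(key)  # None when the pair is new this week
        after = mention_total(record)
        if before != after:
            changes.append({"key": key, "before": before, "after": after})
    return changes
```

An entry with before set to None means that prompt and engine pair did not appear in the earlier run.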

📲 How do I get a Slack message after each run?

Apify ships a built-in Slack integration. Console → Integrations → Slack → connect → pick this Actor → "Send notification on success." When a run finishes, the Actor sets a status message like "Run complete: 16 records, $0.26 spent. Clash Coach AI cited 4 times. Top competitor: Royale Buddy (8 mentions)." — that's what lands in your Slack channel.

You can do the same with email (Integrations → Email) or any webhook (Integrations → Webhook for Zapier, Make, n8n).

Other questions

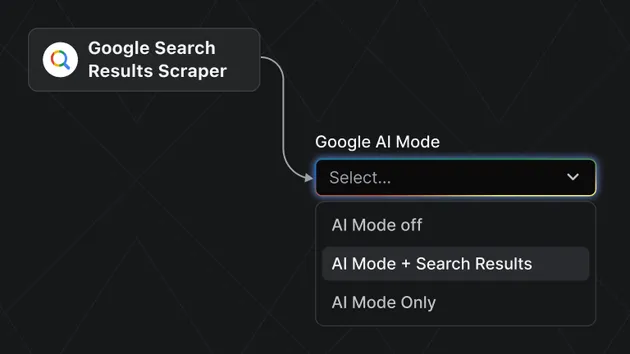

Why are some engines off by default? ChatGPT and Gemini grounded calls take 30-180 seconds each, which can push runs past the 5-minute Apify maintenance ceiling. The 4 default engines (Perplexity, Anthropic, Grok, AI Overviews) all complete in under 30s per call. Add ChatGPT/Gemini to the engines field for full coverage — accept the longer wall-clock.

What's the difference between this and the main AEO Citation Monitor? Same engine, simpler form. Lite caps demo runs at 16 records and uses an enum-driven industry template. The main Actor exposes the full input shape (custom prompts, competitor aliases, sentiment tagging, prompt discovery from URL, delta mode). Switch to main when you outgrow Lite.

Can I monitor a person rather than a brand? No. Anthropic and OpenAI's terms prohibit using their APIs for surveillance, tracking, or profiling of individuals. This Actor is for tracking public brands, products, and services in AI responses.

Where's the schema? @apify-portfolio/aeo-schema on npm — same record shape between Lite and the main Actor.

ToS attestation

acknowledgePublicBrandsOnly: true is required. Anthropic's usage policy and OpenAI's terms forbid using their APIs for surveillance, tracking, or profiling of individuals. This Actor is for tracking public brands in AI responses.

Schema reference

@apify-portfolio/aeo-schema on npm — semver-stable Zod definitions of AICitationRecord. Use directly in TypeScript pipelines.