Article Extraction API

Pricing

from $3.50 / 1,000 articles

Convert article URLs to clean Markdown, text, or HTML for RAG and LLM pipelines. Extract title, author, date, images, links, word count, canonical URL, Open Graph, and JSON-LD metadata while removing ads and boilerplate.

Developer

Tugelbay Konabayev

Article Extraction API — URL to Markdown for RAG & LLMs

- Clean article content from known URLs: extract title, author, date, Markdown/text/HTML, images, links, and metadata from news, blogs, docs, and knowledge-base pages.

- Built for AI ingestion: compact Markdown output for RAG pipelines, vector databases, LLM prompts, content monitoring, and research datasets.

- Fast HTTP extraction: no browser overhead; process batches with configurable concurrency of up to 50 URLs in parallel.

Convert article URLs into clean, readable content. Article Extractor removes ads, navigation, sidebars, and boilerplate, then returns the main article text with source metadata. Output as Markdown, plain text, or clean HTML for AI/LLM workflows, content analysis, and data pipelines.

Perfect for building RAG pipelines, AI training datasets, knowledge bases, and content monitoring systems.

Article Extraction API for RAG and AI Agents

Call it from the Apify API, MCP tools, LangChain, Make, Zapier, or scheduled Apify runs. Give the actor known article URLs and get structured records that are ready to index, summarize, classify, or store.

URL to Markdown, Text, or Clean HTML

Paste a URL and get clean article text with title, author, publish date, body content, images, links, Open Graph data, canonical URL, and word count. Markdown is the default because it preserves headings and lists while keeping token usage lower than raw HTML.

Web Content Scraper for RAG Pipelines

Feed clean article text directly into your vector database or LLM prompt. Output as Markdown or plain text — ready for embedding.

Bulk Article Extraction (Up to 10K URLs)

Process hundreds or thousands of URLs in a single run. Perfect for news monitoring, content aggregation, and research datasets.

What does Article Extractor do?

This actor takes a list of URLs and extracts the main article content from each page using Mozilla's Readability algorithm (the same technology behind Firefox Reader View). It returns structured data including:

- Article text in Markdown, plain text, or clean HTML

- Metadata: title, author, published date, description, language

- Structured data: JSON-LD and Open Graph metadata parsing

- Media: images, Open Graph image, links found in the article

- Stats: word count, HTTP status code, extraction timestamp

You provide article-style public URLs and the actor extracts clean content without custom selectors or per-site CSS parsing. Some protected, app-rendered, or non-article pages may return partial content.
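To make the metadata step concrete, here is a minimal sketch of how Open Graph tags and JSON-LD blocks can be read from raw HTML using only the Python standard library. It is not the actor's implementation (the actor's internals are not documented here); `MetadataParser`, `extract_metadata`, and the sample HTML are hypothetical names and data used for illustration.

```python
import json
from html.parser import HTMLParser


class MetadataParser(HTMLParser):
    """Collects Open Graph <meta> tags and JSON-LD <script> blocks."""

    def __init__(self):
        super().__init__()
        self.open_graph = {}      # e.g. {"og:title": "..."}
        self.json_ld = []         # parsed JSON-LD objects
        self._in_json_ld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("property") or "").startswith("og:"):
            self.open_graph[attrs["property"]] = attrs.get("content") or ""
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.json_ld.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # skip malformed JSON-LD instead of failing the page


def extract_metadata(html):
    parser = MetadataParser()
    parser.feed(html)
    parser.close()
    return {"open_graph": parser.open_graph, "json_ld": parser.json_ld}


# Hypothetical sample page used only to exercise the parser.
SAMPLE_HTML = """<html><head>
<meta property="og:title" content="Hello">
<meta property="og:site_name" content="Example Blog">
<script type="application/ld+json">{"@type": "Article", "author": {"name": "A. Writer"}}</script>
</head><body><p>Body</p></body></html>"""

metadata = extract_metadata(SAMPLE_HTML)
```

Pages without these tags simply yield empty results, which matches the note that metadata fields can be missing.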

Why use this instead of a generic web scraper?

| Need | Generic scraper | Website crawler | Article Extractor |
|---|---|---|---|
| Main content extraction | Usually custom CSS selectors | Whole-page or crawl-oriented extraction | Readability-based article detection |
| Output cleanup | You remove nav, ads, cookie banners, and footers | Good for broad site ingestion | Focused on clean article body text |
| Setup time | Write and maintain selectors per site | Configure crawl scope and depth | Add URLs and run |
| LLM-ready output | Requires post-processing | Good for site-wide RAG | Markdown/text/HTML per known URL |
| Metadata | Manual extraction | Varies by crawler | Author, date, description, language, OG, JSON-LD |
| Best fit | Site-specific scraping logic | Crawling whole websites | News, blogs, docs, research, monitoring |

vs. Website Content Crawler

Apify's Website Content Crawler crawls entire websites and extracts content across discovered pages. Article Extractor is different:

- Focused extraction: Only extracts the main article content, not the entire page

- Cleaner output: Strips navigation, ads, sidebars, related articles — just the article

- Richer metadata: Automatically extracts author, publish date, JSON-LD, Open Graph

- Faster: Uses HTTP requests (no browser), processes pages in parallel

- Known-URL workflow: Optimized when your pipeline already has article URLs from RSS, search, sitemaps, feeds, monitoring, or another actor

When to use which:

- Use Article Extractor when you need clean article text from known URLs (news, blogs, docs)

- Use Website Content Crawler when you need to crawl an entire website following links

- Use RAG Web Browser when pages require browser rendering or search-to-content workflows

Best-fit workflows

- URL to Markdown API — convert a queue of article URLs into clean Markdown documents.

- News and blog monitoring — combine with RSS, search, or scheduled runs to archive new articles.

- RAG ingestion — push Markdown content and metadata into vector stores with source traceability.

- Content research — collect article titles, authors, dates, descriptions, word counts, and clean bodies.

- SEO content analysis — compare competitor articles without scraping menus, ads, and unrelated widgets.

Features

- Smart article extraction using Mozilla Readability algorithm

- Markdown output optimized for LLM consumption and RAG pipelines

- Automatic metadata extraction (author, date, description, language)

- JSON-LD and Open Graph metadata parsing

- Image and link extraction from article body

- Concurrent processing (up to 50 pages in parallel)

- Proxy support for geo-restricted content

- Best for public article-style pages such as news sites, blogs, and documentation

- 5MB page size limit to prevent memory issues

- Pay-per-event pricing for extracted articles

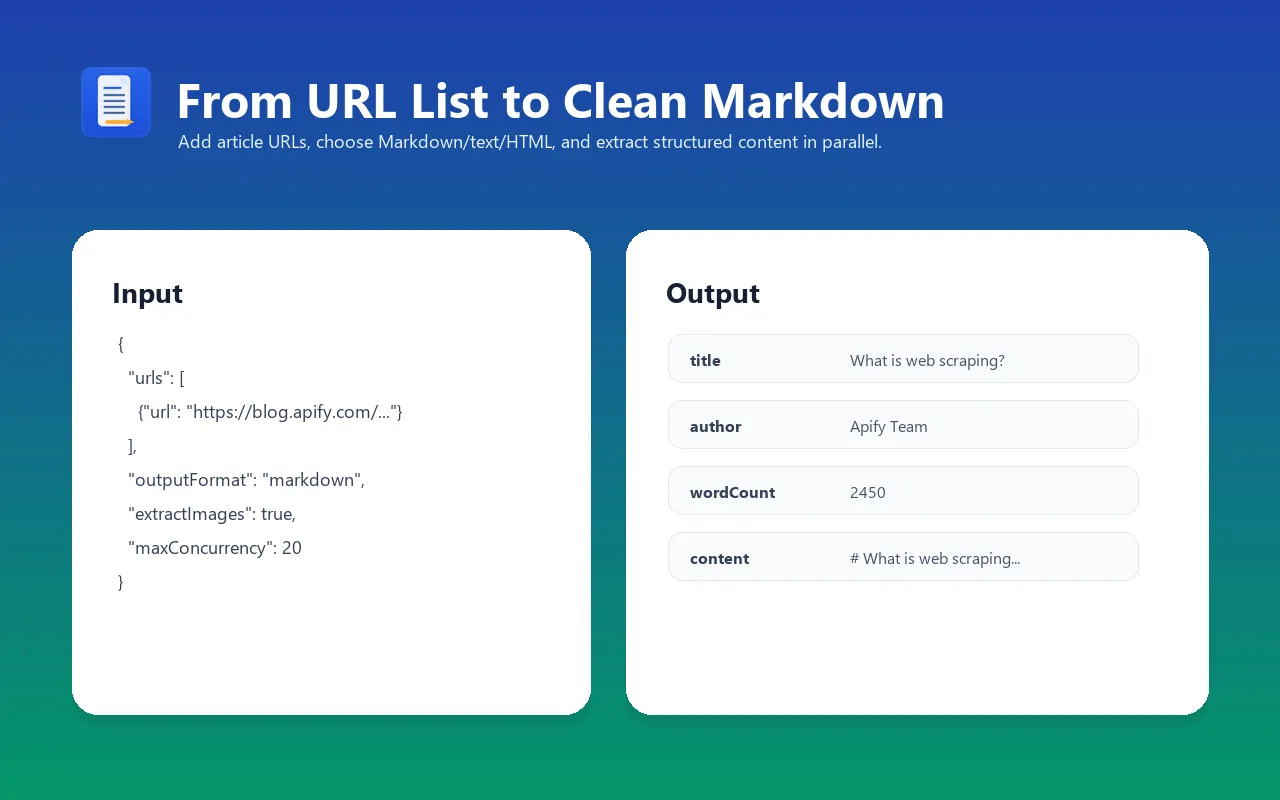

Input examples

Extract articles as Markdown (default)

Extract as plain text for NLP analysis

Bulk extraction with proxy (100+ articles)

Extract with all metadata (images + links)
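The four scenarios above could look roughly like the run inputs below, written as Python dictionaries that use the parameter names from the input parameters table. This is a sketch, not the original examples: all URLs are placeholders, and the `useApifyProxy` key is assumed from the common Apify proxy input shape rather than confirmed for this actor.

```python
# Placeholder URLs throughout; replace them with real article URLs.

# 1. Extract articles as Markdown (the default outputFormat, so it is omitted).
markdown_input = {
    "urls": [
        "https://example.com/blog/post-1",
        "https://example.com/blog/post-2",
    ],
}

# 2. Extract as plain text for NLP analysis.
plain_text_input = {
    "urls": ["https://example.com/news/story"],
    "outputFormat": "text",
    "extractImages": False,
}

# 3. Bulk extraction with proxy (100+ articles).
bulk_input = {
    "urls": [f"https://example.com/articles/{i}" for i in range(1, 151)],
    "maxItems": 150,        # the default is 10, so raise it for bulk runs
    "maxConcurrency": 20,
    # Assumption: the standard Apify proxy configuration object.
    "proxyConfiguration": {"useApifyProxy": True},
}

# 4. Extract with all metadata (images + links).
full_metadata_input = {
    "urls": ["https://example.com/docs/guide"],
    "extractImages": True,
    "extractLinks": True,   # off by default
}
```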

Input parameters

| Parameter | Type | Default | Required | Description |
|---|---|---|---|---|
| urls | Array | - | Yes | List of article/page URLs to extract content from |
| outputFormat | String | "markdown" | No | Output format: "markdown", "text", or "html" |
| maxItems | Integer | 10 | No | Maximum number of articles to extract (1–10,000) |
| extractImages | Boolean | true | No | Include image URLs found in the article |
| extractLinks | Boolean | false | No | Include links found in the article |
| timeout | Integer | 30 | No | Maximum seconds to wait for each page to load (5–120) |
| maxConcurrency | Integer | 10 | No | Number of pages to process simultaneously (1–50) |
| proxyConfiguration | Object | None | No | Proxy settings for accessing geo-restricted content |
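If you build inputs programmatically, it can help to check them against the documented defaults and ranges before starting a run. The helper below is a hypothetical client-side sketch (`normalize_input` is not part of the actor); how the actor itself handles out-of-range values is not documented here.

```python
DEFAULTS = {
    "outputFormat": "markdown",
    "maxItems": 10,
    "extractImages": True,
    "extractLinks": False,
    "timeout": 30,
    "maxConcurrency": 10,
}
RANGES = {"maxItems": (1, 10_000), "timeout": (5, 120), "maxConcurrency": (1, 50)}
FORMATS = {"markdown", "text", "html"}


def normalize_input(raw):
    """Apply the documented defaults and reject values outside the documented ranges."""
    if not raw.get("urls"):
        raise ValueError("urls is required and must be a non-empty list")
    merged = {**DEFAULTS, **raw}
    if merged["outputFormat"] not in FORMATS:
        raise ValueError(f"outputFormat must be one of {sorted(FORMATS)}")
    for key, (low, high) in RANGES.items():
        if not low <= merged[key] <= high:
            raise ValueError(f"{key} must be between {low} and {high}")
    return merged


run_input = normalize_input({"urls": ["https://example.com/a"], "outputFormat": "text"})
```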

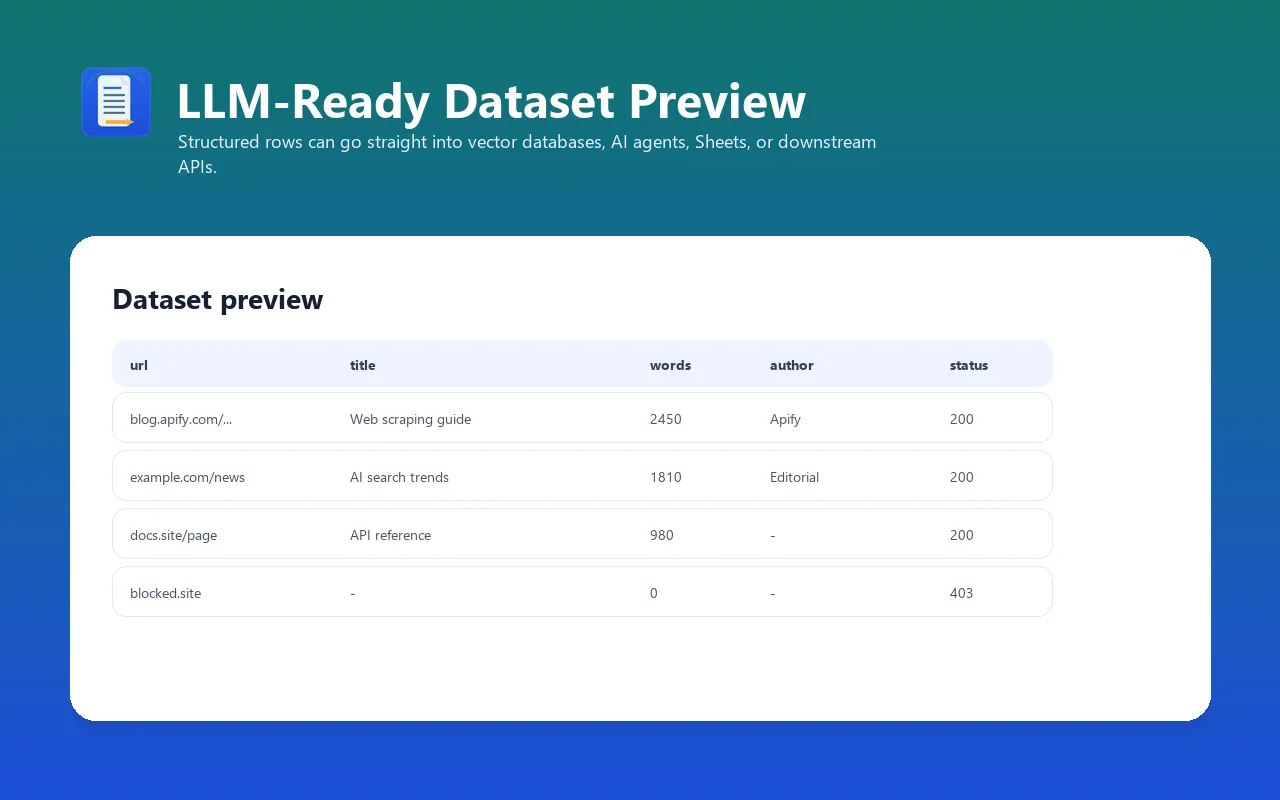

Output format

Each item in the dataset contains:

| Field | Type | Description |
|---|---|---|
| url | String | Final page URL (after redirects) |
| canonicalUrl | String | Canonical URL if specified by the page |
| title | String | Article title |
| author | String | Article author (from meta tags, JSON-LD, or byline) |
| publishedDate | String | Publication date (ISO 8601) |
| description | String | Meta description or article summary |
| content | String | Extracted article in requested format (Markdown/text/HTML) |
| wordCount | Integer | Number of words in the article |
| language | String | Detected content language code |
| siteName | String | Website name (from Open Graph) |
| images | Array | Image URLs from the article (if extractImages: true) |
| links | Array | Links from the article (if extractLinks: true) |
| ogImage | String | Open Graph image URL |
| statusCode | Integer | HTTP response status code |
| error | String | Error message if extraction failed (null on success) |
| extractedAt | String | Extraction timestamp (ISO 8601) |

Example output
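The shape of one dataset item, based on the output fields table, can be sketched as a typed dictionary. `ArticleRecord` is a hypothetical name, every value in `EXAMPLE_RECORD` is an illustrative placeholder rather than real actor output, and which fields may be null is partly an assumption.

```python
from typing import List, Optional, TypedDict


class ArticleRecord(TypedDict):
    """Hypothetical type mirroring the documented output fields."""
    url: str
    canonicalUrl: Optional[str]
    title: Optional[str]
    author: Optional[str]          # null when the page has no author metadata
    publishedDate: Optional[str]   # ISO 8601, null when missing
    description: Optional[str]
    content: Optional[str]         # null when extraction failed
    wordCount: Optional[int]
    language: Optional[str]
    siteName: Optional[str]
    images: List[str]
    links: list                    # element shape is not documented
    ogImage: Optional[str]
    statusCode: Optional[int]
    error: Optional[str]           # null on success
    extractedAt: str


# Placeholder values only; not real output from the actor.
EXAMPLE_RECORD: ArticleRecord = {
    "url": "https://example.com/blog/post-1",
    "canonicalUrl": "https://example.com/blog/post-1",
    "title": "Example article title",
    "author": "Example Author",
    "publishedDate": "2026-01-01T00:00:00Z",
    "description": "Short summary of the article.",
    "content": "# Example article title\n\nFirst paragraph of the body...",
    "wordCount": 850,
    "language": "en",
    "siteName": "Example Blog",
    "images": ["https://example.com/images/cover.png"],
    "links": [],
    "ogImage": "https://example.com/images/cover.png",
    "statusCode": 200,
    "error": None,
    "extractedAt": "2026-01-01T00:00:05Z",
}
```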

Integrations

Apify MCP Server (Claude, AI agents)

Use it as a tool in Claude Desktop, Claude Code, or any MCP-compatible AI agent framework. The actor uses pay-per-event (PPE) pricing, so you pay per extracted article, which suits agent workflows where each task triggers a separate extraction.

Python integration
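A minimal sketch of calling the actor with the `apify-client` package (`pip install apify-client`). The `ACTOR_ID` value is a placeholder to replace with the ID shown on the actor's page, reading the token from an `APIFY_TOKEN` environment variable is an assumption, and `build_run_input` and `extract_articles` are hypothetical helper names.

```python
import os

# Placeholder: copy the real "<username>/<actor-name>" ID from the actor's page.
ACTOR_ID = "<username>/<actor-name>"


def build_run_input(urls, output_format="markdown"):
    """Build the actor input from the documented parameters."""
    urls = list(urls)
    return {"urls": urls, "outputFormat": output_format, "maxItems": len(urls)}


def extract_articles(urls, token=None):
    """Run the actor, wait for it to finish, and return the dataset items."""
    # Imported lazily so this module loads even without apify-client installed.
    from apify_client import ApifyClient

    client = ApifyClient(token or os.environ["APIFY_TOKEN"])
    run = client.actor(ACTOR_ID).call(run_input=build_run_input(urls))
    return list(client.dataset(run["defaultDatasetId"]).iterate_items())
```

A call such as `extract_articles(["https://example.com/blog/post-1"])` would return a list of dictionaries with the fields described under Output format.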

JavaScript/TypeScript integration

LangChain (RAG pipeline)
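One way to hand extracted articles to LangChain is to convert each dataset item into a `Document` from `langchain_core`. This is a sketch rather than the original example: `to_document_fields` and `to_langchain_documents` are hypothetical helpers, and the choice of metadata keys is an assumption based on the output fields table.

```python
METADATA_KEYS = ("url", "title", "author", "publishedDate", "language")


def to_document_fields(item):
    """Split one dataset item into page text and source metadata."""
    metadata = {key: item.get(key) for key in METADATA_KEYS}
    return item["content"], metadata


def to_langchain_documents(items):
    """Convert successful items into LangChain Document objects."""
    # Imported lazily so this module loads without langchain-core installed.
    from langchain_core.documents import Document

    documents = []
    for item in items:
        if item.get("error") or not item.get("content"):
            continue  # skip failed or empty extractions
        text, metadata = to_document_fields(item)
        documents.append(Document(page_content=text, metadata=metadata))
    return documents
```

The resulting documents can then go through whichever text splitter and vector store your pipeline already uses; keeping `url` in the metadata preserves source traceability.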

Webhooks and integrations

The actor works with Apify's integration ecosystem:

- Google Sheets — export extracted articles directly to a spreadsheet

- Zapier / Make — trigger workflows on new results

- Slack — get notifications when extraction completes

- Email — receive dataset as email attachment

- API — call programmatically via Apify REST API
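For the REST API route, the sketch below builds a request against Apify's synchronous "run actor and get dataset items" endpoint using only the standard library. The actor ID and token are placeholders you must supply, and `build_sync_request` and `run_sync` are hypothetical helper names. Synchronous runs are subject to an API-side time limit, so long batches may be better served by the asynchronous run endpoints.

```python
import json
import urllib.request

API_BASE = "https://api.apify.com/v2"


def build_sync_request(actor_id, token, run_input):
    """Build a POST request that runs the actor and returns its dataset items."""
    # In API URLs the actor ID is written as "username~actor-name".
    url = f"{API_BASE}/acts/{actor_id.replace('/', '~')}/run-sync-get-dataset-items"
    return urllib.request.Request(
        url,
        data=json.dumps(run_input).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


def run_sync(actor_id, token, run_input, timeout=300):
    """Send the request and decode the JSON array of dataset items."""
    request = build_sync_request(actor_id, token, run_input)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```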

Use cases

- LLM training data — extract clean text from web pages for fine-tuning datasets

- RAG pipelines — feed article content into vector databases for retrieval-augmented generation

- Content analysis — analyze articles at scale for sentiment, topics, and trends

- News monitoring — extract and archive news articles automatically on a schedule

- Research — collect and structure academic or industry content for literature reviews

- SEO analysis — extract competitor content for gap analysis and content strategy

- Knowledge base — build searchable archives from documentation sites and blogs

- Content migration — extract content from legacy sites during CMS migrations

- AI agents — give your AI agent structured article content from public article-style URLs

- Newsletter curation — automatically extract and summarize articles for newsletters

- Compliance monitoring — track content changes on regulatory or competitor pages

Cost estimation (PPE pricing)

| Event | Description |
|---|---|
| article-extracted | Each article successfully extracted |

Apify currently displays pricing from $3.50 / 1,000 articles. The exact price depends on the user's Apify pricing tier; the examples below show the displayed "from" price and exclude the small actor-start event.

Example costs at the displayed "from" price:

| Scenario | Articles | From cost |
|---|---|---|
| 10 blog posts | 10 | ~$0.04 |
| 100 news articles | 100 | ~$0.35 |
| 1,000 documentation pages | 1,000 | ~$3.50 |
| Daily news monitoring (50 articles/day) | 1,500/month | ~$5.25/month |
| Large-scale extraction | 10,000 | ~$35 |
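The figures in the table follow from a single multiplication: articles × $3.50 / 1,000. The short sketch below reproduces them at the displayed "from" price; `estimate_cost` is a hypothetical helper, and it ignores the actor-start event and any tier-specific pricing.

```python
from decimal import Decimal

PRICE_PER_1000 = Decimal("3.50")  # displayed "from" price in USD


def estimate_cost(articles, price_per_1000=PRICE_PER_1000):
    """Return the estimated cost in USD for a number of extracted articles."""
    return price_per_1000 * articles / 1000


scenarios = {
    "10 blog posts": 10,
    "100 news articles": 100,
    "Daily monitoring, 50/day for 30 days": 50 * 30,
    "Large-scale extraction": 10_000,
}
for label, count in scenarios.items():
    print(f"{label}: ${estimate_cost(count):.3f}")
```

Ten articles come to $0.035, which the table rounds up to about $0.04.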

Tip: Set extractImages: false and extractLinks: false to speed up extraction and reduce output size when you only need the text content.

FAQ

What types of pages work best?

Article Extractor works best on article-style pages: news articles, blog posts, documentation pages, Wikipedia articles, and similar content. The Readability algorithm is designed to identify the "main content" of a page and strip everything else.

Does it work on JavaScript-rendered pages (SPAs)?

No. Article Extractor uses fast HTTP requests (no browser). Pages that require JavaScript to render content (React SPAs, Angular apps) will return empty or minimal content. For those pages, use RAG Web Browser, which has automatic browser fallback.

How fast is it?

Because it uses plain HTTP requests (no browser), it can process about 100 articles in 2–3 minutes at the default concurrency of 10. Raise maxConcurrency (up to 50) for higher throughput.

Can I extract content behind login walls or paywalls?

No. Article Extractor only works with publicly accessible pages. It cannot bypass login walls, paywalls, or CAPTCHA-protected content.

What's the maximum page size?

5MB per page. Larger pages are truncated to prevent memory issues. This covers 99%+ of normal web articles.

Can I run this on a schedule?

Yes. Set up a Schedule in Apify Console to run the actor at any interval — hourly, daily, or custom cron expressions. Perfect for news monitoring and content tracking.

Why Markdown output?

Markdown is well suited to LLM workflows:

- Preserves semantic structure (headers, emphasis, lists, code blocks)

- Compact — fits more content in LLM context windows

- Renders cleanly in chat interfaces and documentation tools

- Easy to parse for downstream processing

How does it handle errors?

If a page fails to load (timeout, 404, blocked), the actor returns the URL with an error field explaining what went wrong and a null content field. Other pages in the batch continue processing normally.
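Because failed pages still appear in the dataset, downstream code usually needs to separate them from successful extractions. A minimal sketch, assuming only the documented behavior that `error` is null on success; `partition_results` is a hypothetical helper and the sample items are placeholders.

```python
def partition_results(items):
    """Split dataset items into (succeeded, failed) based on the error field."""
    succeeded, failed = [], []
    for item in items:
        (failed if item.get("error") else succeeded).append(item)
    return succeeded, failed


# Placeholder items illustrating one success and one failure.
sample_items = [
    {"url": "https://example.com/ok", "content": "# Title", "error": None},
    {"url": "https://example.com/missing", "content": None, "error": "HTTP 404"},
]
succeeded, failed = partition_results(sample_items)
failed_urls = [item["url"] for item in failed]
```

The `failed_urls` list can be logged or fed into a later retry run.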

Troubleshooting

Empty or very short content extraction

- Cause: The page is a SPA (Single Page Application) that renders content with JavaScript

- Fix: Use RAG Web Browser instead, which has browser fallback

- Other possible cause: Very short pages (<100 words) may not have enough content for Readability to detect the main article

Missing author or publish date

- Cause: The page doesn't include author/date in meta tags, JSON-LD, or standard HTML patterns

- Fix: This is expected — not all pages provide this metadata. The fields will be null.

Timeout errors on some pages

- Cause: The target page is slow to respond

- Fix: Increase the timeout parameter (default: 30 seconds, max: 120 seconds)

- Alternative: Reduce maxConcurrency if you're scraping many pages from the same domain
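Both fixes can be combined in a retry run for the URLs that timed out. The sketch below doubles the timeout up to the documented maximum of 120 seconds and halves the concurrency; `build_retry_input` is a hypothetical helper, and the doubling and halving factors are arbitrary choices, not recommendations from the actor.

```python
def build_retry_input(timed_out_urls, previous_input):
    """Return a new input that retries only the given URLs more conservatively."""
    urls = list(timed_out_urls)
    timeout = previous_input.get("timeout", 30)              # documented default
    concurrency = previous_input.get("maxConcurrency", 10)   # documented default
    return {
        **previous_input,
        "urls": urls,
        "maxItems": max(1, len(urls)),
        "timeout": min(timeout * 2, 120),           # documented maximum is 120
        "maxConcurrency": max(concurrency // 2, 1),  # documented minimum is 1
    }


retry_input = build_retry_input(
    ["https://example.com/slow-page"],
    {"urls": ["https://example.com/slow-page", "https://example.com/fast-page"], "timeout": 90},
)
```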

Proxy-related errors

- Cause: Some sites block datacenter IPs

- Fix: Enable Apify proxy with residential proxy groups in proxyConfiguration

Limitations

- Only works with publicly accessible pages (no login-protected or paywalled content)

- JavaScript-rendered content (SPAs) will not extract fully — use a browser-based solution for those

- Very short pages (under 100 words) may not have enough content for Readability to detect

- Maximum page size: 5MB (larger pages are truncated)

- Maximum 10,000 articles per run (use multiple runs for larger datasets)

- Metadata extraction depends on the page having proper meta tags, JSON-LD, or Open Graph markup
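For datasets above the 10,000-article limit, the URL list can be split into per-run batches before starting the runs. A minimal sketch; `batch_urls` is a hypothetical helper and the URLs are placeholders.

```python
MAX_ARTICLES_PER_RUN = 10_000  # documented per-run limit


def batch_urls(urls, batch_size=MAX_ARTICLES_PER_RUN):
    """Split a URL list into consecutive batches of at most batch_size."""
    urls = list(urls)
    return [urls[i:i + batch_size] for i in range(0, len(urls), batch_size)]


all_urls = [f"https://example.com/articles/{i}" for i in range(25_000)]
batches = batch_urls(all_urls)
# Each batch becomes the "urls" value of one run, with maxItems set to its length.
run_inputs = [{"urls": batch, "maxItems": len(batch)} for batch in batches]
```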

Changelog

v1.0 (2026-03-29)

- Initial release

- Markdown, plain text, and clean HTML output formats

- Mozilla Readability-based article extraction

- Metadata extraction (author, date, description, JSON-LD, Open Graph)

- Image and link extraction

- Concurrent processing with configurable concurrency (1–50)

- Proxy support

- Pay-per-event pricing

Related Actors

- Website Content Crawler — Crawl websites and extract Markdown for RAG/LLMs

- RAG Web Browser — Search Google + extract as Markdown for AI agents

- YouTube Transcript Extractor — Bulk extract video transcripts as SRT/VTT/Markdown

- Website Tech Stack Detector — Identify 80+ technologies on any website

- Google Maps Lead Extractor — Extract business leads with emails from Google Maps

See all actors: apify.com/tugelbay